The Bother of Biological Bodies

When I came to Earth, I of necessity adopted a human form — in order to be less conspicuous. Little did I know what a mess caring for the human body would be.

The worst part about the tasks required to keep the body from deteriorating too much is that they take so much time. All of these mostly unpleasant activities could, if I let them, gobble up 1-2 hours of my day. Unfortunately, I've found that putting off some of these tasks merely means spending even more than 1-2 hours later, once the deterioration has become more annoying than the tasks themselves.

So, what unpleasant and annoying tasks does the biological human body require? Here are the worst, from my perspective (in no particular order):

- Emptying bowels. On Mars, our bodies do this quickly and cleanly, merely by ejecting a small, shiny egglike object when necessary.

- Trimming nails. What a bother! And so prone to error, hangnails being the worst.

- Brushing teeth. Seriously, there's no reason why human teeth should require so much care and expense to maintain. When was the last time you saw a cat brush its teeth?

- Washing hands. Not unpleasant so much as annoying. Yet without frequent washings during the day, the body is vulnerable to attack by malicious microbes — and what a disaster that can be!

- Cutting hair. Some people, I've noticed, actually enjoy this activity. But to me it's merely an annoying time-waster.

- Trimming facial hair. Same as hair-cutting, except I don't think most men actually enjoy the activity.

- Treating fungus. It seems that once you are invaded by fungus, it never goes away. It flares and fades and requires outrageous expenditures on a variety of products, none of which offers a permanent cure.

- Minimizing body odor. Again, some humans enjoy showering, bathing, and the rest ... but to a Martian, this problem is best controlled by other, less time-consuming, means.

Now, granted, there are at least two biological functions that I find enjoyable, even though they both take a good deal of time: eating and orgasm. The former is a necessary part of maintaining the biological body, but the latter is not. It's merely a fun option... and one of the best things about living in the human body.

The Sociology of Tornadoes

- Paranoid?

- Envious?

- God-Fearing?

- Intolerant?

- Republican?

In recent days, I've been barraged by friends back on Mars inquiring about what psychological effects the recent spate of tornadoes in the South and Midwest United States must have on the humans there. Their interest got me to thinking, and I suddenly had an insight, which I'm sure has brightened the intellectual glow of many beings (both Martian and human) before me.

The insight encompasses the sociological effects of hurricanes as well, since the two devastating natural phenomena share some common traits... the most obvious being those furiously spinning winds and clouds.

My Martian theory also explains why tornadoes and hurricanes affect humans in ways that volcanoes, tsunamis, and earthquakes do not.

For brevity in the following paragraphs, I'm using the term "Recurring Events of Mass Destruction" (REMD) to refer to tornadoes and hurricanes, and the term "Unpredictable Events of Mass Destruction" (UEMD) to refer to volcanoes, tsunamis, and earthquakes.

REMD. The distinguishing characteristics of REMD include:

- They happen every year.

- Though their frequency and severity vary from year to year, their geographical incidence is constant and encompasses huge areas of the country.

- Though you know they'll occur every year, you have no idea where exactly they'll strike.

- When they do strike, they invariably cause severe damage at the strike site.

UEMD. The distinguishing characteristics of these include:

- They happen whenever. Completely unpredictable.

- Most of the time, their effect on human life and property is minimal. Catastrophic events do occur, but their incidence is rare compared with REMD.

- The geographic range is much more limited and static than for REMD. Only a few States are affected by UEMD.

These widely differing attributes lead me to theorize the following psychological effects on humans who live in areas prone to REMD.

| Effect | Description |
|---|---|
| Dread | The certainty of mass destruction lowers an unconscious web of dread over REMD people. Ongoing dread bends the psyche toward irrational fear of the unknown, as well as paranoia. |
| Envy and Schadenfreude | Although humans like to think otherwise, those who live in an area where their town can be devastated while a nearby town goes unscathed invariably feel envy when this occurs. Likewise, those in the spared town feel the opposite: schadenfreude. Although this affects victims of UEMD too, the sociological effect is lessened by the lower incidence and narrower geographical confines of UEMD. Over time, envy and schadenfreude become ingrained in a community's collective psyche. |
| Intolerance and Parochialism | The widespread and continuous feelings of envy and schadenfreude can heighten suspicion of "outsiders" and lead to an intolerant, parochial view of the world. They can also make humans stingier towards those beyond their immediate community. These people are more likely to adopt the philosophy "Every Man For Himself," and they become incapable of seeing "beyond their own back yard" in terms of understanding people different from themselves. |
| Religiousness | Throughout human history, people have been driven to religion to explain natural phenomena, both good and bad. If REMD are caused by God, then perhaps fear, dedication and prayer to God will help. Religion in REMD areas would therefore be expected to emphasize the fear factor of God, as well as a greater awareness of God's opposite: Satan, or Evil. |

People living with UEMD are also affected by these feelings, but to a much lesser degree. Because of the infrequency and lack of predictable recurrence of UEMD, the emotions do not grip entire communities or regions. The primary psychological effect of UEMD on humans is a sort of Stoic Fatalism, which can be summed up in the philosophy "What Will Be Will Be." Stoicism tends to make humans more tolerant of others and more broad-minded about ideas.

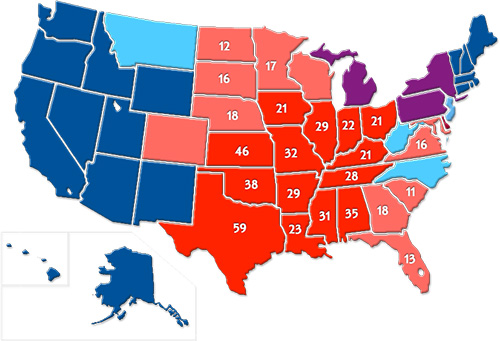

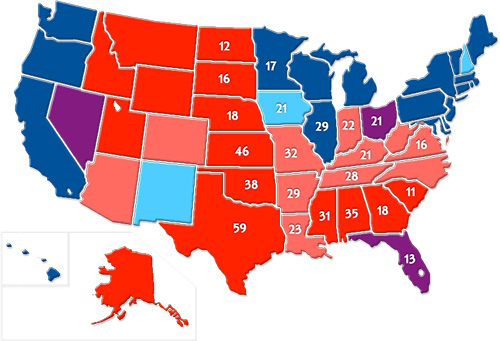

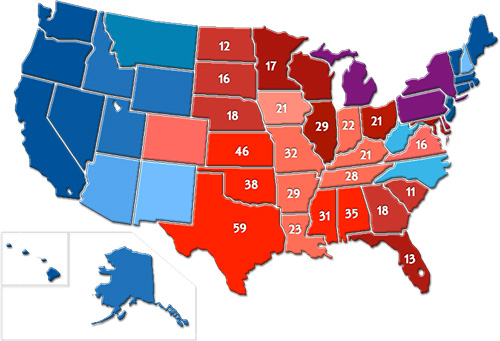

Given these characteristics, it's now worth mapping their effects geographically, in order to see how the incidence of tornadoes correlates with the incidence of humans with those characteristics. Since the psychological makeup of Republican (conservative) humans aligns pretty well with the attitudes of those prone to REMD, I am using voting patterns as a proxy for sociological differences between areas affected by REMD and UEMD. The maps below adopt the paradigm of "blue" and "red" States to view the correlation.

Notes: The data in Map 1 are derived from statistics published by NOAA's Storm Prediction Center and cover the five years from 2000 through 2004. To improve focus, the map doesn't show incidence numbers lower than 10. The number of States of a particular color on Map 1 is the same as the corresponding number on Map 2 (Source: Wikimedia Commons).

This is the color legend for Map 2:

- The Republican candidate carried the State in all four of the most recent presidential elections (1996, 2000, 2004, 2008).

- The Republican candidate carried the State in three of the four most recent elections.

- The Republican and Democratic candidates each carried the State in two of the four most recent elections.

- The Democratic candidate carried the State in three of the four most recent elections.

- The Democratic candidate carried the State in all four of the most recent elections.

Although not a perfect predictor, tornado frequency correlates pretty closely with election results: The States that lie in the tornado "alleys" of the South and Midwest are almost all populated by Republican-leaning voters.

I should stress that this theory does not postulate that tornadoes are the sole predictor of a State's sociological makeup. For example, the characteristics this theory predicts for humans in REMD areas are also found in small-town and rural areas, which are heavily populated by parochial and intolerant humans. Red States that do not correlate with the tornado data but whose population predominantly lives in small towns and rural areas include Montana, Wyoming, Idaho, and Alaska.

Among the blue States, the biggest outlier is Illinois, which maps as solid red in tornado frequency but solid blue in voting pattern. Though this result seems surprising—as well as contradictory to my theory—it can be explained by noting that the incidence of tornadoes is primarily in the southern part of the State, which is also heavily Republican and has long considered itself part of the South.

It's worth noting that many of the red and pink States on the third map are also those that suffer the most REMD by hurricanes—South Carolina, Georgia, Florida, Mississippi, Alabama, Louisiana, and Texas. Adding hurricanes to the tornado data above would undoubtedly also turn North Carolina red.

And this, my fellow Martians, is my explanation of how tornadoes affect the sociology of the human populations they afflict.

Schadenfreude.

Pleasure derived by someone from another person's misfortune.

Envy.

A feeling of discontented or resentful longing aroused by someone else's possessions, qualities, or luck.

Dread.

Anticipate with great apprehension or fear.

Intolerance.

Not tolerant of others' views, beliefs, or behavior that differ from one's own.

Parochialism.

Having a limited or narrow outlook or scope.

Religiousness.

Believing in and worshiping a superhuman controlling power or powers, esp. a personal God or gods.

Stoicism.

The endurance of pain or hardship without a display of feelings and without complaint.

Fatalism.

A submissive attitude to events, resulting from the belief that all events are predetermined and therefore inevitable.

Broad-minded.

Tolerant or liberal in one's views and reactions; not easily offended.

Republican.

A person who is averse to change and holds to traditional values and attitudes, typically in relation to politics.

God.

The creator and ruler of the universe and source of all moral authority; a superhuman being or spirit worshiped as having power over nature or human fortunes.

Paranoia.

Suspicion and mistrust of people or their actions without evidence or justification.

The “Bloated” Federal Bureaucracy:

A Lie That’s Either Malicious Ignorance Or Deliberate Malice

One of the truly bewildering traits of human beings is their ability—and even carefree willingness—to ignore facts that conflict with their current worldview. I touched on this topic in an earlier article, and find it manifested in numerous ways in this most viciously anti-rational political climate.

This article looks at data for a timely topic that's a favorite target for fact distortion: Has the U.S. Federal Government workforce grown too large, or not?

The "Tea Party" politicians, in particular, appear to be masters at the art of selling people willful ignorance, perhaps partly because they themselves drink from that cup religiously. Among the false ideas they consider common knowledge is the idea that the Federal workforce needs to be cut—presumably because it, like the Government as a whole, has grown too big. While they're at it, they'd also like to make sure Federal employees don't have a benefits package better than members of their own congregation do.

Recently, a Republican from Texas, Rep. Kevin Brady, submitted a legislative proposal to cut the Federal workforce by 10 percent. According to a Washington Post article, Brady's reasoning goes like this:

There's not a business in America that's survived this recession without right-sizing its workforce, without having to become more productive with fewer workers. The federal government can't be the exception. We're going to have to find a way to serve our constituents and our taxpayers better and quicker and more accurately with fewer workers. I'm convinced we can do it and we don't have a choice.

Brady's short statement, including its overall premise, contains several fallacies, and on Mars we find it alarming that this guy is chairman of the Joint Economic Committee and a senior member of the House Ways and Means Committee. Where I come from, those are pretty big britches! When someone with authority over such enormously important Government functions gets his facts wrong, one has to wonder whether he is deliberately lying for political reasons, or whether he's maliciously failing to determine the facts and is instead shaping them to fit his policy goals.

Joint Economic Committee

The Joint Economic Committee is one of four standing joint committees of the U.S. Congress. The committee was established as a part of the Employment Act of 1946, which deemed the committee responsible for reporting the current economic condition of the United States and for making suggestions for improvement to the economy.

On Mars, such behavior is almost unheard of. When I first revealed it, my fellow Martians had trouble believing that sentient beings could behave this way. And even if someone were to deliberately distort reality, surely Earth's legal systems would be constructed to punish the act.

Apparently, however, this behavior is not only tolerated, it's rewarded by the mere awareness that it's tolerated. After all, if a lie—or deliberate ignorance—by someone in authority isn't challenged, it clearly achieves its purpose. And achieving one's purpose obviously counts as a success. (On Mars, we believe that this is one of the perverse lessons Americans learned from President Richard Nixon's downfall: If you're going to lie, cheat, embezzle, or otherwise commit illegal acts, be sure you aren't caught doing so.)

So, what fallacies does Mr. Brady disseminate in his statement? Here are two obvious ones:

- "There's not a business in America that's survived this recession without right-sizing its workforce." How can this be true? Clearly, as has always been the case, the economic downturn produces not only losers, but winners as well. Yes, the losers will have had to lay off workers, hence the rise in unemployment. But companies in growth sectors will not have done so, and they may even have continued to expand. In this downturn, for example, employment in the oil mining industry increased from 143,000 to 159,000 from 2007 to 2009. A better example is the computer services sector, where employers added 400,000 jobs.

- "The federal government can't be the exception." Someone like Brady who is in charge of National economic policy undoubtedly understands that reducing employment in the Federal sector is never a good thing during a period of slow economic growth. Even economists who aren't sold on Keynesian economics realize that the Federal Government should remain a stable economic player during times like this. Stating otherwise must be a deliberate deception.

Keynesian Economics.

A macroeconomic theory based on the ideas of 20th-century British economist John Maynard Keynes. This theory argues that private sector investment decisions periodically lead to inefficiencies that cause economic output to fall and unemployment to rise. It therefore advocates active policy responses by the public sector, including an expansion of the money supply by the central bank and increased spending by the government, in order to stabilize output over the business cycle.

That leaves the notion that the U.S. Government must "right-size" its workforce in order to "become more productive with fewer workers." First of all, what does "right-sizing" a workforce mean? If you read Wikipedia's article on the subject, you come away believing that "right-sizing" is merely a euphemism for "layoffs" or "downsizing."

Some dictionaries, on the other hand, suggest there's a nuance to the term that differentiates it from "layoffs." Webster's, for example, defines the term as follows:

To reduce (as a workforce) to an optimal size

"Right-sizing" (or "rightsizing") is a term first uttered on Earth in 1989, when it was really just jargon to justify the downsizing that became de rigueur during the waning years of the first Bush administration. One of the main reasons companies downsize is that their workforce has bulged after a major merger with or acquisition of another company. And as you may recall, starting in the 1980s corporations did a heckuva lot of merging and acquiring. For a while, even "rollups" were all the rage on Wall Street.

Rollup.

A Rollup (also "Roll-up" or "Roll up") is a technique used by investors (commonly private equity firms) where multiple small companies in the same market are acquired and merged. The principal aim of a rollup is to reduce costs through economies of scale.

After a merger or major acquisition, it's pretty standard to eliminate inherited workers who do redundant tasks, or those who have a record of poor performance. Companies that downsize for any other reason do so because they're performing poorly, as measured by revenue and profits. In this case, companies downsize to reduce their production costs and make their products or services more competitive.

So, there are two big problems with even suggesting that the Federal Government engage in "right-sizing":

- Governments are nonprofit institutions, and therefore notions such as competitiveness, profits, and product pricing are meaningless.

- Governments don't merge with or acquire other governments. Well, unless you're talking about conquests, which surely is a special case. Occasionally, governments do split up... for example, when a U.S. State secedes from the Union, or when a country declares its independence from another. In such cases, of course, the newly separate governments will need to "upsize" their workforces rather than downsize them.

Ah, but what if you believe, as lawmakers such as Brady do, that the cost of the Federal workforce is a major reason why the Federal deficit is ballooning? Well, then I suppose the suggestion does make sense.

As it turns out—and here I'm finally getting to the crux of my argument—the Federal workforce has not been a contributor to the growth in Federal spending. If you're picking up an axe to cut the budget, hacking at the workforce is not only missing the target, but it will actually increase costs in the long run.

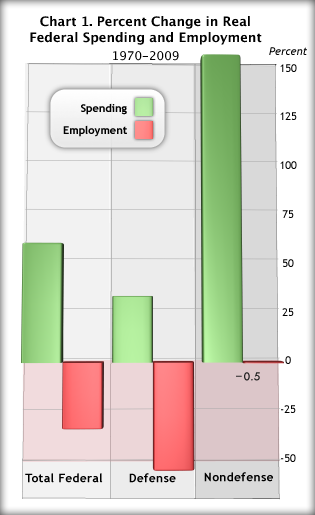

What evidence do I have to support such assertions? Consider the following facts for the 40-year period from 1970 to 2009, as illustrated in the accompanying charts:

- Real (adjusted for inflation) Federal consumption spending increased 56 percent, while total Federal employment fell about 30 percent. Most of the reduction in Federal employment came in the defense sector, but the number of nondefense employees stayed basically flat during this 40-year period while nondefense spending shot up 150% (Chart 1). (Note: The measure of spending shown in chart 1 includes only "current expenditures," which basically counts spending required "to keep the trains running"—that is, to carry out basic agency missions.)

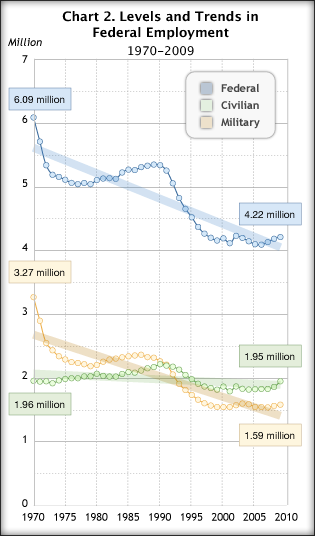

From 1970 to 2009, total Federal employment shrank from 6.1 million to 4.2 million—again, mostly in defense. The nondefense Federal workforce was 1.96 million in 1970, and 1.95 million in 2009 (Chart 2).

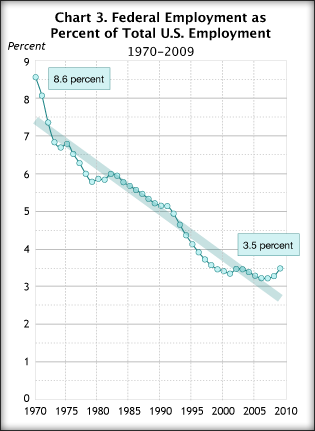

During these 40 years, Federal employment as a percentage of total U.S. employment dropped from 8.6 percent to 3.5 percent (Chart 3).
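For readers who want to check the arithmetic, here is a small Python sketch that recomputes the changes from the figures quoted above for Charts 2 and 3. It uses only those rounded figures, not the underlying BEA series, and the variable and function names are illustrative.

```python
# Figures quoted in the text for 1970 and 2009 (millions of workers, Chart 2).
total_fed = {1970: 6.1, 2009: 4.2}
nondefense_fed = {1970: 1.96, 2009: 1.95}
# Federal share of total U.S. employment, in percent (Chart 3).
fed_share = {1970: 8.6, 2009: 3.5}


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100


total_change = pct_change(total_fed[1970], total_fed[2009])
nondefense_change = pct_change(nondefense_fed[1970], nondefense_fed[2009])
# Fraction of the 1970 share that was left in 2009.
share_ratio = fed_share[2009] / fed_share[1970]

print(f"Total Federal employment: {total_change:+.1f}%")
print(f"Nondefense Federal employment: {nondefense_change:+.1f}%")
print(f"2009 share as a fraction of 1970 share: {share_ratio:.2f}")
```

Run as-is, it reports a drop of roughly 31 percent in total Federal employment and about half a percent in the nondefense workforce, consistent with the "about 30 percent" and "basically flat" descriptions, and shows that the Federal share of U.S. employment in 2009 was about 0.41 of its 1970 level.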

These facts make it obvious that the Federal Government has been engaging in "right-sizing" for a very long time. How could Federal employees not be a great deal more efficient and productive today if their numbers haven't changed in the last 40 years, while their workload and output have doubled?

Despite continuous calls for less Federal "intrusion" into taxpayers' lives, taxpayers have simultaneously been demanding and expecting more and more of their National Government. As anyone who has been even marginally observant knows, Federal responsibilities have expanded greatly since 1970. Among its new and expanded assignments are:

- Occupational Safety and Health. The Occupational Safety and Health Administration was created in 1970 to "ensure that employers provide employees with an environment free from recognized hazards, such as exposure to toxic chemicals, excessive noise levels, mechanical dangers, heat or cold stress, or unsanitary conditions."

- Environmental Protection. The Environmental Protection Agency was also created in 1970 and charged with "protecting human health and the environment, by writing and enforcing regulations based on laws passed by Congress."

- National Security. The agencies responsible for ensuring the safety of U.S. citizens have increased employment substantially during this period, especially since the September 11, 2001, attacks by radical Islamic terrorists. The attacks resulted in a reorganization of security functions from various agencies into a new cabinet department, the Department of Homeland Security. The number of Federal security personnel at U.S. airports has also increased, of course.

- Natural Resource Management. In 1973, Congress passed the Endangered Species Act, which requires Federal agencies to ensure that their activities "do not jeopardize the existence of any endangered or threatened species of plant or animal or result in the destruction or deterioration of critical habitat of such species."

- National Park System. Numerous Acts and Executive Orders have expanded the responsibilities of the National Park Service since 1970, including the General Authorities Act of 1970, the National Parks and Recreation Act of 1978, and the Alaska National Interest Lands Conservation Act of 1980.

- Drug Abuse. The Comprehensive Drug Abuse Prevention and Control Act of 1970 expanded and optimized the Federal Government's ability to control use of illegal drugs. Among other components, the legislation included the Controlled Substances Act, which established drug "schedules," into which various substances would be classified and for which misuse penalties would be defined.

- Many other functions, including Immigration Control (yes, we have been spending more money and hiring more people for this), Education, Technology Infrastructure, and Information Dissemination.

Regarding Information Dissemination, consider the huge cost and workload involved in building all the great Federal websites we now have, including the many channels for obtaining customized information from Federal databases that were never before available.

For example, the charts and data shown in this article come from the Bureau of Economic Analysis (BEA), the Commerce Department agency responsible for collecting and analyzing statistics on the U.S. economy. BEA is the organization that produces estimates of Gross Domestic Product, personal income, and much more. Their data is now available through an easy-to-use, customizable web interface that generates data in a variety of formats, including tab-delimited, which can be imported into spreadsheet software.

Yes, the Government does much less printing now than it used to, but as one with first-hand knowledge of Federal publishing, let me assure you it costs much more now to publish on the web than printing ever did. For one thing, many agencies were encouraged to—and did—charge fees for printed publications. Obviously, they collect nothing from use of their websites. For another, nearly all Federal printed documents were required by law to use only black ink, or black and one other color. A tiny fraction used the four-color process that's standard for commercial printing.

However, Federal web publishing has been under no such constraints, and so agencies have spent as freely as they thought necessary to make splashy, flashy, and sexy websites that could have been (and often are) designed by a Madison Avenue ad firm. Such sites look nice, but besides being expensive, they too often make usability secondary to appearance. Where once a small agency might spend $500,000 a year on printing, it's now common for it to spend $1 million or $2 million on its website while still printing some material. (Note: BEA remains a big exception to the norm. Its website eschews expensive graphics and other flashy flourishes, and consists mostly of easy-to-navigate textual content.)

OK, so it's undeniable that Federal employment has shrunk in the last 40 years, while spending has grown. Doesn't that suggest that Federal employees are much more productive than they were 40 years ago?

Given the data in Chart 1, it's clear that productivity in the Federal sector has risen considerably. However, something must be missing, because it's nearly impossible for an organization to boost output by 50% while cutting its workforce by 30%. In fact, if you lay these data beside analogous ones for the private sector, it appears that the Feds have been using some secret productivity weapon that they should now share with the private sector, so that it can downsize as the Feds have done. (Oops... no, that would cause a huge recession, actually.)

Since 1970, output of private industry has shot up 200%, but this was accompanied by a 70% increase in employment (Chart 4). This means that the gain in private output required 70 percent more workers over this period. If you apply that relationship to the public sector, Federal employment should have increased 15-20 percent to support its 50% growth in output over these 40 years.
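The extrapolation in the previous paragraph is a simple proportion, sketched below in Python. Like the argument itself, it assumes that the private-sector ratio of employment growth to output growth (70 percent more workers for 200 percent more output) carries over unchanged to the Federal sector; that linear carry-over is a simplifying assumption, not a measured relationship. The sketch tries both the rounded 50 percent used in the text and the 56 percent real spending growth reported for Chart 1. The function name is hypothetical.

```python
# Private-sector growth since 1970, in percent, as quoted in the text (Chart 4).
private_output_growth = 200.0
private_employment_growth = 70.0

# Points of employment growth per point of output growth in the private sector.
employment_per_output = private_employment_growth / private_output_growth


def implied_employment_growth(output_growth: float) -> float:
    """Employment growth implied by a given output growth, assuming
    (simplistically) that the private-sector ratio applies proportionally."""
    return output_growth * employment_per_output


# The text rounds Federal output growth to 50%; Chart 1 reports 56% spending growth.
for federal_output_growth in (50.0, 56.0):
    implied = implied_employment_growth(federal_output_growth)
    print(f"{federal_output_growth:.0f}% output -> {implied:.1f}% more workers")
```

Both cases land between about 17.5 and 19.6 percent, inside the 15-20 percent range given in the text.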

So how did they do it? How could the Federal sector manage to increase output by 50% while actually reducing employment? The truth is, it couldn't have, despite what the data show. Even though the data are correct for what they do measure, they are missing a big component of the puzzle, as you'll see.

The Missing Employment Data

Since Jimmy Carter took office in 1977, every President except for George H.W. Bush has called for either cuts in or freezes on Federal hiring. This explains why Federal employment has remained flat for 40 years... it has been continuously downsized.¹

The drops in defense spending and employment reflect both the end of the Draft and the end of the Cold War.

Given this history, today's calls for cuts in Federal employment are either dishonest and politically motivated, or they are misguided and made by ignorant politicians who have no business being in charge of the Nation's business.

The ugly truth is that for every Federal worker who hasn't been hired since 1970, one or two private-sector employees have been. For most of these 40 years, both the Executive and Legislative branches of the U.S. Government, whether led by Republicans or Democrats, have bought into the notion that "contracting out" (or "outsourcing") Federal jobs was a good way of stretching precious Federal dollars.

Contracting Out

In the context of the public sector, contracting out refers to the act of transferring work previously handled by public employees to employees employed by private contractors. Over time, through workforce attrition, this has the effect of replacing public jobs with private ones.

But this is simply not the case, for two reasons, which I plan to take up in a future article on Federal contracting:

- Inefficiency. Outsourcing to private companies is often much more expensive than retaining work in-house. Briefly, this is the result of:

  - Additional Overhead. Most large contracts are subcontracted, and even subcontracts are subcontracted. Each layer adds to the overhead cost of every dollar spent.

  - Inflexibility. Getting rid of bad Federal contractors can be as difficult as getting rid of a bad Federal employee.

  - Incompetence or dishonesty. Scrutiny of the background and expertise of companies hired by the government is much less exacting than that of potential employees. Too often, companies overstate their qualifications for a particular type of work, overstate costs, or both. Even when the private enterprise is at fault, the government agency loses time as work must be redone, and typically must shell out additional funds for the privilege.

  - Lack of continuity. When a company is newly hired to assume an existing task, it's far too easy for it to claim that the outgoing contractor had been "doing things wrong." Without continuity, Federal managers can face unmeasured duplication of costs merely because the new contractor has a different way of doing things. Sometimes a change is warranted, but too often it is not. This kind of waste can also occur when Federal managers change, but that happens far less frequently.

- Conflict of interest. Private contractors are motivated by profit rather than by public service, and therefore should never be in charge of making policy or spending decisions that affect taxpayers. This is a clear conflict of interest situation, where the private company's goal is to make as much money as possible, and the Government's goal is to serve the public as best it can within its limited means.

Even if you don't see it the way we do on Mars, you will surely find it strange—and disturbing—that the Federal Government has absolutely no idea how many employees it has in the private sector.

If you walk through any Federal office today, you won't be able to tell which employees are contractors and which are on the Federal payroll. For all appearances, everyone there is a Federal employee. Yet they're not, and nobody keeps tabs on the ones who aren't, except to make sure they have the appropriate network accounts, desks, computers, and security badges. The Labor Department, which is responsible for collecting the Nation's employment data, has never included this information as part of its surveys.

Among other management consequences of this irresponsible lack of data is that it's impossible to know whether the Federal workforce is "right-sized" or not. It's also impossible to measure relative employment costs, or to compare productivity for the two groups.

And why do we not have these necessary data on private contractors?

First, the Paperwork Reduction Act of 1980—one of a series of misguided deregulation moves in the 1980s designed to get the Federal Government "off the backs" of private companies—made it extremely difficult for Federal agencies to add new questions to their existing surveys. And second, the lack of knowledge has been a mutually beneficial "wink" among cash-strapped Federal managers, cash-hungry private companies, and dishonest/ignorant legislators who want to claim they're cutting costs by keeping a lid on Federal employment.

Only in the last few years has the superiority of outsourcing public jobs been openly questioned, and that's been spurred mainly by concerns about the propriety and cost of contracting by the State and Defense Departments to support the War in Iraq. Yet all through the George W. Bush years, Federal agencies were under extreme pressure to "privatize" or "contract-out" any functions that weren't "inherently governmental in nature."

Inherently Governmental

More-or-less officially, an “inherently governmental function” is one that, as a matter of law and policy, must be performed by federal government employees and cannot be contracted out because it is “intimately related to the public interest.” This definition is quoted from a fairly comprehensive recent report on the term and its implications, published by the Congressional Research Service in February 2010.

Now, I know what "privatizing" means, ugly word though it may be. But no one—including those pushing hardest for it—can explain what an "inherently governmental" function is. If they were honest, such advocates would admit that any public function that becomes the object of lust by some industry group's lobbyists could not possibly be "inherently governmental," and therefore could be a candidate for privatizing or outsourcing.

To hear these people talk, the only "inherently governmental" jobs are those that make and administer budgets and contracts. That means no jobs for

- Clerks

- Scientists

- Engineers

- Computer specialists

- Designers

- Webmasters

- Economists

- Statisticians

- Audio/Video specialists

- Public affairs specialists

- Writers

- Editors

- Security specialists

- Meeting planners

- Travel planners

- Programmers

- Systems designers

- Accountants

- Budget analysts

- Etc.

This leaves jobs only for

- Lawyers

- Administrators

- Managers

- Budget officers

- Contracting officers

- Personnel officers

Myth of the Coddled Federal Worker

One final piece of the puzzle behind the recent calls for Federal downsizing, workforce attrition, and worker pay caps is the myth that Federal workers cost more than their private-sector counterparts, because of their great benefits. Legislators like Brady love to stick this one in their speeches because it's a guaranteed applause line, especially during great recessions.

Trouble is, it's not true.

I'm going to sidestep the whole debate about whether Federal salaries are higher or lower than comparable jobs in the private sector, because it's too complicated for a few paragraphs and perhaps even for an entire book. There are numerous problems with this analysis, including the difficulty of finding consistent data that tracks all the relevant variables, including worker age, education, experience, location, and job descriptions.

Under President George H.W. Bush, Congress passed legislation that granted Federal workers additional pay under a system of "locality adjustments." President Clinton more or less mothballed the system, and then set one up that was a pale shadow of the original.

Since the Civil Service Retirement System (CSRS) was mothballed in 1986, all new Federal workers have been in the Federal Employees Retirement System (FERS). FERS does offer a small pension, but it's nothing like the one CSRS retirees enjoy. In addition, FERS workers pay a much higher portion of their salaries for that pension than CSRS workers did.

Instead, a FERS retirement is heavily dependent on the Thrift Savings Plan, which is nothing more than a 401(k) program for Federal employees. (Federal workers don't have 401(k) plans as such; the Thrift Savings Plan fills that role.)

Federal employees have health care, sick leave, vacation leave, and other benefits that are comparable to those in any large U.S. company. I'll never forget moving from a Federal job at BEA to Citibank back in 1996, and finding that Citibank's benefits were superior to those I'd had in the government. Not only that, my pay was almost double, and I didn't have any onerous supervisory responsibilities. Citibank's pension system wasn't as generous as that from CSRS, but it was comparable to that of FERS.

Are Federal benefits better than those of your typical small company? Yes, very likely they are. And, given the vast difference between a Federal agency of 100,000 and your typical small company of 50, the difference is appropriate.

In any case, very few Federal contracts are awarded to your typical small company. At least, not directly. Any small companies that share in contract spending get work only through some "prime" contractor, not directly from some Federal manager.

CSRS was abolished not only to reduce the pay of Federal retirees, but also to add the Federal workforce to the Social Security pool. Under CSRS, Feds neither paid Social Security nor received its benefits on retirement. Under FERS, they do both in the same way that private sector workers do.

Another reason why Federal employees still have a decent package of benefits is that they are represented by a Labor Union, the National Federation of Federal Employees. If workers in U.S. companies get desperate enough, perhaps they'll recall that having a Union on your side is a good thing in the fight for decent pay and benefits. That's a lesson that's been lost over the years, especially since President Reagan started kicking Unions in the butt back in 1982.

However, just because private-sector workers don't have the pay, benefits, and pension they should have doesn't make it OK for them to demand cuts for those who do.

And politicians like Mr. Brady should know better.

Big Man in a Tiny Bubble Pops In To D.C.

He arrived from the tiny town of Butler, Pennsylvania, as part of the new freshman class of Angry Republican Congressmen. After all the feting and touring that greeted him in Washington, Mike Kelly was asked who had impressed him the most.

"Nobody," he said.

To be impressed by "nobody" must mean this guy is hugely impressed with himself, one would surmise. Well, yes and no:

"I hope I don't sound arrogant about this, but at 62 years old, I've pretty much seen what I need to see."

Today's article in the Washington Post doesn't explore what exactly Mr. Kelly has seen in his 62 years, but from his attitude and statements, I would venture to guess it isn't much.

You see, Mike Kelly came to Washington because he is angry that the Federal Government "intruded" on the running of his General Motors car dealership, where he'd spent 56 years of creative energy. (I guess that means he'd been working on the business since he was 6. Just kidding.)

And exactly how had it intruded? Why, it was making him sell Chevrolets instead of Cadillacs.

And exactly why was it ruining his business this way? Well, you see, Obama had (personally) taken over General Motors and was (personally) requiring dealerships to restructure as part of an effort to save the company.

"This is America. You can't come in and take my business away from me. . . . Every penny we have is wrapped up in here. I've got 110 people that rely on me every two weeks to be paid. . . . And you call me up and in five minutes try to wipe out 56 years of a business?"

This is a reasonable attitude if you believe that tiny, parochial self-interest should be the motivator of those elected to run a National Government. However, tiny attitudes from Big Men In Their Local Communities have no place in Congress. Indeed, those with tiny, uninformed beliefs who fail to see the big picture are precisely the ones inclined to take actions that fail to serve the interest of the public they're elected to represent.

They are also the most vulnerable to corruption, since if you believe that self-interest is the highest good, then you are likely to be impressed by visitors who flatter your ego and your opinions... and then offer to pay you huge sums to ensure your reelection or to sway your vote on an issue that serves your own interest.

A lot of Big Men in Tiny Bubbles like Mr. Kelly were frightened and outraged when the Obama administration offered to buy a 61% stake in General Motors in the summer of 2009. After all, wasn't this a "Government Takeover", or worse, a "Nationalization" of a private company?

If you were inclined to take a narrow view, it was. However, if you bothered to take the big view, it clearly was not.

Obama was a reluctant participant in the process of saving General Motors, and his sin was that he insisted that the taxpayers have some control over the process. Rather than just handing $50 billion to a company that had proven itself incapable of turning a profit and had driven itself into bankruptcy, he stipulated that outside ("Government") experts have a say in how that money was used. The restructuring that resulted is what caused Mr. Kelly such pain in his private bubble.

Even The Economist, a business journal with no reputation for supporting Government intrusion into the workings of Capitalism, ended up apologizing to Obama for having shared the view that his action was a mistake:

August 19, 2010. Americans expect much from their president, but they do not think he should run car companies. Fortunately, Barack Obama agrees. This week the American government moved closer to getting rid of its stake in General Motors (GM) when the recently ex-bankrupt firm filed to offer its shares once more to the public (see article).

Once a symbol of American prosperity, GM collapsed into the government’s arms last summer. Years of poor management and grabby unions had left it in wretched shape. Efforts to reform came too late. When the recession hit, demand for cars plummeted. GM was on the verge of running out of cash when Uncle Sam intervened, throwing the firm a lifeline of $50 billion in exchange for 61% of its shares.

Many people thought this bail-out (and a smaller one involving Chrysler, an even sicker firm) unwise. Governments have historically been lousy stewards of industry. Lovers of free markets (including The Economist) feared that Mr Obama might use GM as a political tool: perhaps favouring the unions who donate to Democrats or forcing the firm to build smaller, greener cars than consumers want to buy. The label “Government Motors” quickly stuck, evoking images of clunky committee-built cars that burned banknotes instead of petrol—all run by what Sarah Palin might call the socialist-in-chief.

Yet the doomsayers were wrong. Unlike, say, France’s President Nicolas Sarkozy, who used public funds to support Renault and Peugeot-Citroën on condition that they did not close factories in France, Mr Obama has been tough from the start. GM had to promise to slim down dramatically—cutting jobs, shuttering factories and shedding brands—to win its lifeline. The firm was forced to declare bankruptcy. Shareholders were wiped out. Top managers were swept aside. Unions did win some special favours: when Chrysler was divided among its creditors, for example, a union health fund did far better than secured bondholders whose claims should have been senior. Congress has put pressure on GM to build new models in America rather than Asia, and to keep open dealerships in certain electoral districts. But by and large Mr Obama has not used his stakes in GM and Chrysler for political ends. On the contrary, his goal has been to restore both firms to health and then get out as quickly as possible. GM is now profitable again and Chrysler, managed by Fiat, is making progress. Taxpayers might even turn a profit when GM is sold.

GM's payback to U.S. taxpayers has already begun, and as The Economist notes, taxpayers might even turn a profit on the original $50 billion investment once GM's shares are sold.

Yet Mr. Kelly probably doesn't believe any of this. Why? Because he doesn't want to. It's not in his interest to do so. It's more convenient for him to believe it's all a lie.

After all, to change his mind would invalidate his reason for popping in to Washington. Given his arrogant attitude that he is the most impressive person in D.C., he is hardly the sort to question himself, let alone to burst the tiny bubble that brought him here.

Senate Exposes Gaping Hole in Conflict-of-Interest Law

Just days after I opened an exploration of the way humans view conflict of interest, and of how their personal self-interest shapes the way they approach this topic in different contexts, the Washington Post has published a front-page article that exposes exactly the kind of conundrum I'm planning to look into.

The Senate, you see, has no laws restricting the investments its members can make in companies whose fortunes their votes may affect. In particular, they may freely invest in companies that are major players in specific industries overseen by Senate committees. In the Post article, the industry is defense, and the relevant committee typically has "inside knowledge" of the defense systems that will be built, and of which companies will benefit from its votes.

This seems strange enough, but as the Post article points out, the Congress has passed laws that prohibit such investments by those appointed to run the agencies — such as Defense — that will let the contracts to carry out the Senate's decisions. Not only that, but such laws have long been on the books to regulate investment behavior by rank-and-file Federal employees.

To Act in My Own Interest, Or Not?

How Humans Deal With Conflicts of Interest (Part 1)

For several years now, I've been troubled by how humans define the concept of "conflict of interest." My concern has grown as I've realized the importance humans seem to place on avoiding "it", or, at times, even the "appearance of it." The more thought I've given to the topic, the more confused I've become. My confusion stems from the observation that whether or not someone has a conflict of interest seems to depend on who is asking the question, what the context is, and whether or not the answer is in that person's own interest.

Even more confusing is the paradox whereby humans believe that allowing a conflict of interest can be wrong in case A but right in case B. Again, the paradox may only be resolved if one assumes that the perspective of the believer is what determines the judgment of right or wrong.

Let me be a little more specific.

In most situations where humans raise the spectre that someone may have a "conflict of interest," the implicit notion is that having such a conflict is bad and should be avoided. Examples here are cases where a judge may issue a ruling that is in his own interest but not necessarily that of the conflicting parties. Or where a public official makes spending decisions that stand to benefit himself—or his friends, family, supporters, etc.—but not necessarily those who are supposed to benefit from the spending.

Most people I've talked to seem to think that this notion is obvious—that weighing such conflicts of interest in one's favor is wrong and should be avoided. As will become plain later in this essay, I certainly do not disagree with this notion.

On the other hand, either consciously or unconsciously, most humans in modern, West-European-modeled societies entertain notions of conflict of interest that, to my Martian mind, seem antithetical to the one they espouse publicly. In this less-than-conscious notion, acting in one's own interest is something that society, instead of outlawing, should actually encourage, since acting in one's own interest is a natural human tendency that can't be legislated away. Not only that, but acting in one's own interest is viewed as ultimately the same as acting in everyone's interest.

This belief is the very basis of the dominant economic system of what are called "Western" societies. Capitalism would be far less effective, it is argued, if people were encouraged to consider anything other than their own interest in making personal choices, such as purchase and investment decisions.

Texts that explain the rise and rationale of Capitalism keep returning to a writer called Adam Smith, whose 1776 book, The Wealth of Nations, was particularly influential. Phrases from that book are frequently quoted to explain why the motive of self-interest is so beneficial to a strong Capitalist system. For example:

It is not from the benevolence of the butcher, the brewer, or the baker, that we expect our dinner, but from their regard to their own interest. We address ourselves, not to their humanity but to their self-love, and never talk to them of our own necessities but of their advantages.

The most famous quote from Smith's book on this subject extends the notion of self-interest to the scale of an entire economy, through the image he famously called "The Invisible Hand":

By preferring the support of domestic to that of foreign industry, he intends only his own security; and by directing that industry in such a manner as its produce may be of the greatest value, he intends only his own gain, and he is in this, as in many other cases, led by an invisible hand to promote an end which was no part of his intention. Nor is it always the worse for the society that it was not part of it. By pursuing his own interest he frequently promotes that of the society more effectually than when he really intends to promote it. I have never known much good done by those who affected to trade for the public good. It is an affectation, indeed, not very common among merchants, and very few words need be employed in dissuading them from it.

Smith's promotion of self-interest as a core virtue in economic transactions became one of the central concepts of Capitalism. Unfortunately, the most influential modern spokesmen for Capitalism seize on self-interest as the rallying cry, neglecting various other central ideas Smith expounded in building his argument. Clearly, it is in the self-interest of the wealthiest and most powerful members of a Capitalist society to argue that greed (which itself relies on blind self-interest) is a virtue (or, euphemistically, a "necessary evil"), but it seems surprising that even humans of modest means agree with them. And none of those who subscribe to this argument perceive the central Martian concept that making the pursuit of one's personal interest the foundation of a society's culture ultimately works against one's own interests, a conclusion that most humans apparently find counterintuitive.

Yet though Smith is pilloried by many humans who oppose laissez-faire Capitalism, he is hardly the demon of self-centered greed that most of his ardent followers are today. In his first major book, The Theory of Moral Sentiments, which he “always considered ... a much superior work to his Wealth of Nations,” Smith explains that the pursuit of wealth and power is not a worthy goal in itself. Referring to the universal human desire for respect and acclaim by one's peers, Smith writes:

Two different roads are presented to us, equally leading to the attainment of this so much desired object: the one, by the study of wisdom and the practice of virtue; the other, by the acquisition of wealth and greatness. Two different characters are presented to our emulation: the one, of proud ambition and ostentatious avidity; the other, of humble modesty and equitable justice. Two different models, two different pictures, are held out to us, according to which we may fashion our own character and behaviour; the one more gaudy and glittering in its colouring; the other more correct and more exquisitely beautiful in its outline: the one forcing itself upon the notice of every wandering eye; the other, attracting the attention of scarce any body but the most studious and careful observer. They are the wise and the virtuous chiefly, a select, though, I am afraid, but a small party, who are the real and steady admirers of wisdom and virtue. The great mob of mankind are the admirers and worshippers, and, what may seem more extraordinary, most frequently the disinterested admirers and worshippers, of wealth and greatness.

When Smith was formulating his philosophy in the late 18th Century, Christianity defined the dominant moral code in Western Europe—in both religious and political spheres of society—so his ideas naturally reflected that influence. And the idea at the core of Jesus Christ's teachings is that humans should reject self-interest and embrace an affection for one's neighbors and fellow planet dwellers as the highest virtue. At least in his writings, Smith states a contrary, more truly Christian view, which clearly counterbalances the promotion of self-interest in his overall life view:

And hence it is, that to feel much for others and little for ourselves, that to restrain our selfish, and to indulge our benevolent affections, constitutes the perfection of human nature; and can alone produce among mankind that harmony of sentiments and passions in which consists their whole grace and propriety. As to love our neighbour as we love ourselves is the great law of Christianity, so it is the great precept of nature to love ourselves only as we love our neighbour, or what comes to the same thing, as our neighbour is capable of loving us.

That the core teachings of Christianity run so contrary to human nature explains how so many humans can call themselves Christians while simultaneously worshiping at the altar of self-interest, working feverishly to accumulate personal wealth and power, seemingly to the exclusion of all other concerns. Many vocal leaders of the Christian churches provide an easy, self-interested rationale to justify this hypocrisy. In particular, a recently deceased evangelist called Oral Roberts is often cited as a founder of televangelism and a champion of the notion that there is no moral conflict between the pursuit of wealth and a belief in the teachings of Christ. Roberts apparently based his misguided philosophy on this passage from the Bible's Third Epistle of John:

I wish above all things that thou mayest prosper and be in health, even as thy soul prospereth.

Roberts wasn’t shy about sharing the story of how, as a struggling 29-year-old pastor, he read this passage, decided it meant that being rich was a worthy goal, and, as if to celebrate, bought himself a new Buick the following day.

How could any Christian take Oral Roberts seriously? On Mars, it's clear that a philosophy such as Roberts’ is nothing but a self-serving misdirection from the teachings of the religion's founding prophet. What I didn't understand until recently is that humans who choose to follow ministers like Roberts and his many emulators are simply not interested in being Christians, except in name. Such followers are delighted to realize that their religion spares them the agony of always keeping their self-interest in check.

This essay is the first of a series that will explore some specific cases where Western societies legislate to prevent "conflict of interest," and perhaps more interestingly, where they do not. The cases will be examined in the light of the way self-interest is perceived by individual humans, as well as by humans grouped into various, possibly overlapping, personal and business relationships.

In reporting these ideas to my Martian peers, I am particularly interested in trying to sort out how humans rationalize the conflict between their own personal interests and the interests of broader layers of society. Is there a boundary that defines the point at which a human will give up pursuing his own self-interest and throw his lot in with the interest of a larger group? If so, there are probably different boundaries for the human clusters of increasing size that radiate outward to encompass the entire planet.

Is there a point beyond which the majority of humans will not pass if it means abandoning their own interests? Or does everyone eventually perceive the point at which personal interests become irrelevant, and mutual interests merge?

Some humans reading this will undoubtedly argue that all of this is perfectly obvious, and such an exploration a waste of time and an unworthy intellectual pursuit.

To those I say, please understand how we Martians think. On a fundamental level, Martian culture reflects some notions that are viewed as "naive" by humans who express their thoughts charitably, or as "sucker-bait," "gullible," or "dupable" by those who don't.

- Before making any decision, from the personal level on up, Martians are expected to consider the decision's possible repercussions on fellow Martians and on the planetary resources on which they depend. The idea of having to make laws to restrain the runaway pursuit of one's self-interest is quite foreign.

- Outside of the pursuit of pleasure, knowledge, and family harmony, the primary motivation of Martians is finding a life's work that suits one's personal gifts, and then working as hard as possible to make sure that the products of one's labor reflect the highest quality one can achieve. It is believed that in this way, one will naturally be rewarded by success and by enough wealth to ensure happiness.

Possible future topics of inquiry in this series include:

- Consider a case where a private company is awarded a contract by a national government agency to help fulfill part of its basic mission. Clearly, the agency's interest is in fulfilling its mission as best it can within the constraints of its budget and its spectrum of resources. The agency's interest, however, is not the same as that of a private contractor, whose primary interest is in maximizing profit.

- When lawmakers for the U.S. Congress make laws, whose interests are they serving? If their expensive campaign was financed by certain private groups, companies, or industry associations, isn't it in the lawmaker's interest to promote the interests of these financiers? If so, what impact does the interest of the broader mass of the legislator's voters have on decisionmaking? What if the lawmaker disagrees with the views of those who financed his campaign? And to what groups does the lawmaker refer when he inveighs against the "special interests"?

- Suppose you're a Congressman who is asked to vote on a law that would reduce your income opportunities while also restricting your access to fundraisers and lobbyists. If the majority of legislators were to make self-interest the guidepost of their decisionmaking, such a law would never be passed.

- Is it appropriate for a profit-motivated company to be responsible for activities whose purpose is to promote the general welfare? This is a fairly common arrangement in the United States, as far as I can see, but it strikes me as a potentially disastrous conflict-of-interest situation. Obvious examples are private companies engaged in providing basic health care or education to the public.

- What about the widespread situation where a monopoly company, or an oligopoly of companies, fulfills society's basic needs for infrastructure, such as electricity, inter-networking, water, and services distributed by radio waves? How about roads, bridges, airports, rail systems, and air travel? To what extent can these infrastructure requirements be compromised if fulfilled by companies motivated solely by profit?

That's it for now. More later.

A Gift for Self-Deception

For a long time now, I've been explaining why the world would have been better off if Apple's computers had come to dominate homes and businesses. I've focused on the virtues of Apple's software almost exclusively, even though Apple has for most of its existence been primarily a hardware company, like Dell or Hewlett Packard. Why? Because it's clear to all us Martians that what makes or breaks a computing experience is the software. To paraphrase one of your ex-Presidents, "It's the Software, stupid!"

I've also come to believe that humans are genetically predisposed to self-deception, allowing them to talk themselves into whatever point of view is most convenient, or is perceived as being in their best self-interest. Thus, argument over the relative worth of one technology or another is pointless, because no carefully researched and supported set of facts will ever be enough to persuade someone with the opposite view. Indeed, the truth of this axiom is encapsulated in the common human phrase of folk wisdom,

"You can lead a horse to water, but you can't make him drink."

I've noted that when someone conjures this phrase to explain a colleague or acquaintance's intransigence about something, those listening will nod to each other knowingly and somewhat sadly aver, "So true."

And yet, how many humans really think they're as "stupid" as horses?

The only time a change of opinion occurs is when some circumstance in a person's life changes sufficiently that what was highly dubious before is now patently obvious. This is why you read so many stories of former PC users who, when confronted with the necessity of using a Mac for a period of time, invariably come to understand how far superior the Mac operating system is to Windows.

I spend little time using Windows nowadays, but my wife is still forced to use a PC for her job. As we both work at home, I have become her de facto Help Desk support for tasks that her remote technicians can't handle. So it was that today I managed to raise my green blood pressure far too high for sustainable health, all in the cause of trying to get a scanner to work with her Dell laptop.

Working with Windows is a lot like trying to communicate with automated phone systems. One menu will explain a variety of choices. Then, you find that either none of them are helpful, or some of them promise more than they deliver. For example, in this case Windows let me know that I had attached a new piece of hardware. (Duh!) Then it offered options to (a) let it try to find the driver on its own or (b) insert a CD that contains the driver. I was skeptical of option (a) but decided to try that. Well, of course Windows came back almost immediately to tell me it couldn't find the driver.

On a Mac? Apple keeps hardware drivers current with all of its OS releases, including incremental updates, and I've almost never had to go searching for a driver for common hardware like scanners and printers. (A Windows user at this point will self-deceptively point out how much more hardware is available for the PC, etc. All I can say is, Mac users have more than enough choices in hardware peripherals, thanks.)

Step two was so infuriating that I refuse to explain it in detail. This involved finding and downloading Canon's driver and software. The finding part was easy as pie thanks to Google and Canon's easy-to-use website. The downloading and installation parts, however, were beyond maddening. The experience exposed so many obvious weaknesses in Windows usability that I had to again wonder how PC users put up with it. I said I wasn't going to go into detail, and I'll try not to. But here are a few observations:

- Clicking download doesn't just download the file, as it does on a Mac. Instead, it spawns a dialog box that requires a choice: Download, or "Run". So, I ran. (Again, a Windows guru would say, "But you can avoid having to make that choice each time by..." And I say, "Yes, but you forget how clueless most computer users are. Even though you can do this, it's not the default experience that it should be.")

- So, after running, nothing happened. Nothing. I thought I'd done something wrong, so I downloaded again. My wife noted that Canon's site suggests saving the file rather than running it, so I did that. But where to save it? From the file browser it took far longer than it should have to locate the Desktop, which I assumed would be the default location. Even if it's the default, I had to manually choose it. *Groan*

- So once the file was downloaded, I just wanted to click it on the desktop. Guess what? There's no obvious way to expose the desktop. My wife, a 20-year PC user, says she always minimizes all the windows to get there. Good grief. Think of all the lost time in corporate America with clueless users trying to find their desktop. Scary.

- Having installed the software, I then had to go through another wizard that wanted to help me help Windows connect the hardware with the driver. To get to the wizard, I had to find the control panel for scanners, another task that on its own makes using Windows look hard from a Mac perspective.

Why does this seem ridiculous to Martians? Simply because, using Mac OS X, you just plug your scanner in and... there's no step two. The Mac's built-in TWAIN driver can typically pair with the scanner even if the manufacturer's own driver is unavailable. And since this is a core service of the operating system, it works with any TWAIN-aware software. Isn't that an obvious approach?

This lengthy and agonizing task (don't even get me started on the Windows user interface, and I'm not talking about its relative beauty) reminded me of another tragedy of modern computing, which I've written about before. Namely, the institution of a "Help Desk" in all companies today is not one of the inevitable costs of having computers on every desk. It is quite obviously the result of having IBM-compatible PCs running DOS or Windows on every desk.

The process of setting up a scanner should be in the skill range of every computer user. In the Mac world, it is. In the PC world, it isn't. It's as simple as that. And you can extrapolate that observation to nearly every other aspect of office computing we have today.

The Help Desk is a huge revenue drain that every PC user simply assumes is necessary, because it has evolved to be so. Today, Help Desks are self-perpetuating organizations, typically driven by contract companies with a clear incentive to make themselves seem indispensable. These folks (or at least, the companies they work for) are at the forefront of the anti-Mac coalition devoted to doing whatever it takes to keep Macs out of the enterprise.

And who at the company that hires the Help Desk is going to question what the "experts" say? After all, these are the guys who keep the computing environment running day to day. Business managers simply aren't qualified to make decisions about their computing infrastructure, so they rely on outside contractors for recommendations. And guess what? Those are the same guys who regularly argue for expanding the Help Desk and who regularly explain why it would be a mistake to let employees start using Macs at the office. (For more on this subject, see "Change Resisters In Charge," the third section of my earlier article, Protecting Windows: How PC Malware Became A Way of Life.)

In this case, the advocates for the Help Desk aren't deceiving themselves. Many of them fully understand that if Macs came in, many of their jobs would go away. But somehow, the business managers and computer users continue to spend most of their time struggling with simple tasks rather than actually getting work done, all because they're convinced they have no choice. And having to use Windows, the average user continues to perceive their PC as this unpredictable, inscrutable, frustrating device whose only virtue appears to be access to the Web and to iTunes.

I'll never forget my highly intelligent disk jockey friend who purchased a high-end PC with all the bells and whistles for recording and editing audio and video. Not only did it cost more than an iMac with the same basic capabilities, but it sat in his house for over a year before he had the nerve (and time) to figure out how to use it to do the things he bought it for.

I tried to explain to him that... But you know how it goes. Tell a PC user how simple something like recording and editing audio is on a Mac, and either their eyes glaze over or they start to look at you suspiciously. And that's if they're already a friend!

But I'm done with trying to persuade humans of anything. They'll either figure it out, or they won't. Unfortunately, another observation I've made isn't good news for any human figuring out that they're wrong about something:

Changes in human understanding, and the policy implied by that understanding, only occur through crisis.

This observation is directly related to the original premise, because if it's impossible ever to "prove" an idea or even a set of facts to another human or group of humans through cogent argument, how do you manage to change awareness of the virtue of alternative perspectives? I'm taking back to Mars the theory that such changes are only possible after a human undergoes some life-changing crisis, or after a community of humans does the same.

In a follow-up essay, I'll discuss several other controversial topics of the day that have quite obvious answers, yet which humans, often on both sides of the debate, keep viewing from obviously kooky perspectives.

Well, obvious to any Martian I know, anyway.

Recognizing Self-Evident Truths

This being that most political of years, serious issues of national significance have been on my mind. Sadly, judging from the typical discourse I see Americans engaged in, I can only conclude that most humans think it's best to just ignore serious issues. Why is it that people take body language more seriously than written language? And why is it, after so many years of evolution, that a pretty face or the color of one's skin is more influential than what a candidate has to say about, oh, you know, energy policy, health care reform, global warming and environmental concerns, economic insecurities, abortion, and so on?

There was an article in the Washington Post recently that finally expressed what has been obvious to me for many years now: Humans have become so cynical that they honestly believe everything is an opinion. There are no facts. If you don't like a particular fact someone presents you with, you simply respond, "Oh, you think everyone should just agree with you!" And likewise, if someone presents you with a lie that you like, you are quite willing to take it as gospel.

There's no facing reality... no desire to really debate issues using facts. Heck, I'm beginning to think that too many Americans don't even know what a fact is. Here's a simple definition:

Fact: The truth about events as opposed to interpretation.

Ah, but now we enter a realm that, for many humans, presents great difficulty: What is Truth?

It is a question that has reverberated throughout the Western world ever since Pontius Pilate asked it of Jesus. Jesus had referred to a truth, and Pilate's question suggests that he didn't believe there was such a thing.

But of course, there is. That my cat ran away the day we moved to our new house is a fact. That my wife and I have been married now for almost 25 years is a fact. I have two sisters. That is also a fact.

Extending these to more difficult lines of inquiry, it's clear that changes in Earth's atmosphere are causing global temperatures to rise, the Arctic ice cap to melt, glaciers around the world to disappear, and hurricanes and droughts to become more frequent. These are facts, and nearly all scientists today agree that the inference from these facts is that Global Warming is a fact. It is the truth, even if it's extremely inconvenient.

On Presidential Lies

Likewise, it is a fact that the Republican candidate for Vice-President, Sarah Palin, did not oppose the "Bridge To Nowhere," as she claims. As a matter of fact, she ran for Governor on her support for the bridge. Only after Congress tabled the earmark Palin wanted for the bridge did she switch sides. Can you say "disingenuous"? She also didn't sell the Governor's jet on eBay, as John McCain has claimed.

In fact, Sarah Palin and her running mate, John McCain, are going down as the most dishonest folks who ever ran for the Presidency (in my lifetime, at least). Think that's hyperbole? I'm sorry to say that it's not. Every day, more evidence of their willingness to bend the truth shows up, prompting a steady stream of outcries in the press:

- Running on a Lie (Washington Post, 9/16/08)

- The Odd Lies Of Sarah Palin II: The Bridge To Nowhere (Atlantic Online, 9/15/08)

- Campaign check: Lies and half-truths outed (San Francisco Chronicle, 9/13/08)

- Campaign of lies disgraces McCain (St. Petersburg Times, 9/14/08)

- McCain has become a serial liar (SeattlePi, 9/15/08)

- Press picks over litter of lies on the Palin trail (Sydney Morning Herald, 9/16/08)

- New election low: distorting the fact-checking (Los Angeles Times, 9/12/08)

- McCain: Mr. Straight Talk? (MSNBC, 9/12/08)

- Ringing Untrue, Again and Again (New York Times, 9/17/08)

- True Whoppers (Washington Post, 9/17/08)

And the list goes on and on... just search Google News sometime, and you'll see what I mean. Even though this year's persistent falsehoods are the worst yet, previous Presidents and their staffs have told plenty of their own. In fact, I'd argue that it's Presidential Lies that got us where we are in the first place. It all started with President Johnson lying about the Vietnam War, followed closely by Richard Nixon lying about the Vietnam War. And then came the whopper that really made Americans suspicious of their leaders: Watergate. But those observations lead to a huge digression that I should leave for another time.

Here are a few examples of lies told by recent U.S. Presidents and Presidential candidates:

Disingenuous: Not candid or sincere, typically by pretending that one knows less about something than one really does.

- John McCain: He continues to repeat the plain untruth that Barack Obama's tax plan would raise everyone's taxes. This scare tactic usually works, whether it's true or not. In this case, McCain knows it's a lie, yet he keeps saying it. As a matter of fact, Obama's tax plan would only raise taxes for the top 1% of America's richest. For every household that makes less than $250,000 a year, Obama's plan offers substantial tax cuts, whereas McCain's plan does not. As with Bush's deficit-busting tax cuts early in his term, McCain's cuts would benefit only the very rich and the corporations they run.

- George W. Bush: Hmmm... Let's see, there have been so many lies, told so well, that on Mars we've determined he's lied more than any President in U.S. history. Everyone knows by now, as a fact, that Iraq never had "weapons of mass destruction," nor did Saddam Hussein have anything whatsoever to do with the terrorist attacks of September 11, 2001. There are hundreds of documented lies by Bush and his administration in support of the larger one, but here's a good one. On October 22, 2002, as the public relations effort to sell the Iraq war to U.S. citizens was heating up, The Washington Post published an article whose title says it all, For Bush, Facts Are Malleable, which cited two lies in two paragraphs:

In the president's Oct. 7 speech to the nation from Cincinnati, he introduced several rationales for taking action against Iraq. Describing contacts between al Qaeda and Iraq, [Bush] cited "one very senior al Qaeda leader who received medical treatment in Baghdad this year." He asserted that "we have discovered through intelligence that Iraq has a growing fleet" of unmanned aircraft and expressed worry about them "targeting the United States."

Bush's statement about the Iraqi nuclear defector, implying such information was current in 1998, was a reference to Khidhir Hamza. But Hamza, though he spoke publicly about his information in 1998, retired from Iraq's nuclear program in 1991, fled to the Iraqi north in 1994 and left the country in 1995. Finally, Bush's statement that Iraq could attack "on any given day" with terrorist groups was at odds with congressional testimony by the CIA. The testimony, declassified after Bush's speech, rated the possibility as "low" that [Saddam Hussein] would initiate a chemical or biological weapons attack against the United States but might take the "extreme step" of assisting terrorists if provoked by a U.S. attack.

- Bill Clinton: "I did not have sexual relations with that woman, Miss Lewinsky." Yes, that was a lie, unless you don't consider oral relations "sexual." And wow, did Bill pay for that one! Indeed, he was actually impeached for that lie... which, unlike the lies of the Presidents who preceded and succeeded him, had zero impact on the health and welfare of the Nation. From my perspective on Mars, it's inconceivable that one President could waste $500 billion on a war whose rationale he brazenly lied about, and yet receive no punishment whatsoever, while another President had a brief sexual liaison with another consenting adult and lied about it, a sin that made him only the second President in U.S. history to be impeached.

- George H.W. Bush: George H.W. Bush's best-known lie is, of course, "Read my lips, no new taxes." He said that during the campaign for President, and then proceeded to break that promise. But a far more serious lie is the one he repeatedly told the American people about negotiating with terrorists:

Today I am proud to deliver to the American people the result of the six months effort to review our policies and our capabilities to deal with terrorism. Our policy is clear, concise, unequivocal. We will offer no concession to terrorists, because that only leads to more terrorism. States that practice terrorism, or actively support it, will not be allowed to do so without consequence.

The only problem is, even as he made such pronouncements, he and the Reagan administration were secretly selling arms to Iran in exchange for the release of hostages. They then turned around and supported the Nicaraguan Contra rebels with the profits from the secret Iran sales. This is all a matter of public record... it is fact, and yet, perhaps because of the complexity of the issues, or perhaps because of the popularity of Ronald Reagan and the transition of his administration to that of George H.W. Bush, the lie and the secret deal managed to wash over Americans' minds without really registering.