News Posts In Category

In search for civility online, is the Golden Rule the answer?

Bye Bye, Google

“Just Say No To Flash”

Join The Campaign! Add A Banner To Your Website

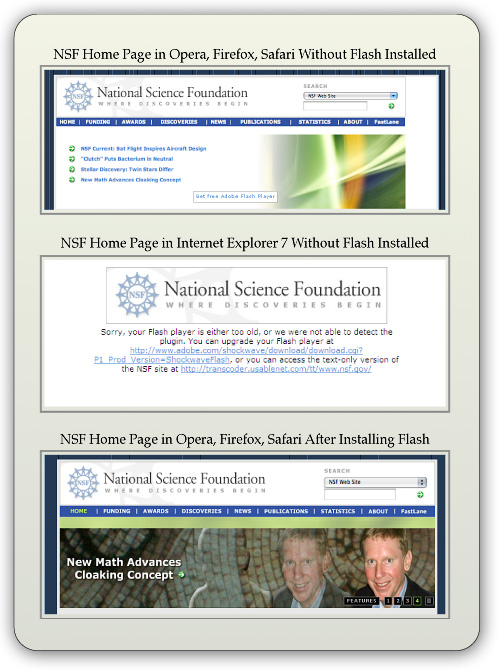

In the past few years, Adobe Flash has become more than an annoyance that some of us have kept in check by using "block Flash" plugins for our web browsers. More and more, entire web sites are being built with Flash, and they have no HTML alternative at all! This goes way beyond annoying, into the realm of crippling.


I had noticed the trend building for quite a while, but it only really hit home when I realized that Google, of all companies, had redesigned its formerly accessible Analytics site to rely heavily on Flash for displaying content. This wouldn't be so horrible if Google provided an HTML alternative, but it doesn't. I tried to needle the company through its Analytics forums, but only received assurance that yes, indeed, one must have the Flash plugin running to view the site.

Keep in mind that content like that on Google Analytics is not mere marketing information, like the sales pitch on the Analytics home page: it is the site owner's own traffic data.

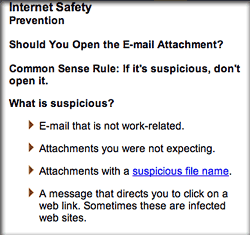

Those of us who are disturbed by the trend need to be a bit more vocal about our opinion. Hence, I'm starting a "Just Say No To Flash!" campaign, with its own web page, graphics for a banner, and the CSS and HTML code to deploy it on your own web pages.

I've mentioned this to some of my family and friends, and they often come back with: "So, why should I say no to Flash?" I admit that as a power browser and a programmer geek type who, shall we say, makes more efficient use of the web, I'm more keenly aware of the ways that Flash is chipping away at the foundation of web content.

In the beginning, it seemed harmless: Flash was an alternative to animated GIFs, and an easy way to embed movies on web pages. But then advertisers wrapped their meaty mitts around it, and that's when Flash started to be annoying. However, one could block Flash in the browser, as part of a strategy of shutting out obnoxious advertising.

But publishing content via Flash is just wrong, for a number of reasons.

➠It's A Proprietary Technology

I don't think Flash is what Tim Berners-Lee had in mind when he created the first web browser and the markup language called HTML to run the web. Then, as now, the web is meant to be open to all. It is meant to be built using open standards that belong to no individual or company. The main open formats that should be used to build websites are simply:

- HTML

- CSS

- JavaScript

- Images (open formats)

Open standards for video, audio, vector graphics, virtual 3D graphics, animated graphics, and others are also available to be thrown into the mix.

Adobe PDF is also a common format for distributing final-form documents, and PDF is based on open specifications for both PDF and PostScript that Adobe published back in the 1990s.

➠It Isn't Backwards-Compatible

If you install a Flash plugin today, there's no guarantee you'll be able to view Flash content created 2 months from now.

If you have a Flash plugin from 5 years ago, it's probably useless today.

Flash is designed with built-in obsolescence, forcing users to repeatedly visit the Adobe website to get an upgrade. This is not only a bother, it forces one company's advertising into the world's face every time it releases a software update.

➠It Can't Be Customized

From time immemorial (well, at least since the beginning of web time), a web page's text could be customized to suit the user's taste and needs. All web browsers provide the tools to increase or decrease the font size, as well as to specify custom fonts for different page elements (headers, paragraphs, etc.).

Flash throws all of that out the window with a terse shrug, "Let 'Em Eat Helvetica 10pt."

➠Its Content Is Inaccessible

No, you can't drag and drop images or text from Flash content. This most basic method of interacting with a web page (dragging images off the page, or selecting sections of the page to drag into an email or word processor) is a non-starter if it's part of a Flash file.

Copy and paste? If the Flash programmer has been thoughtful, you should be able to copy and paste text. But don't even try to copy any other page element.

And that includes copying a link's URL. Right-click (Ctrl-click) anywhere in a block of Flash content, and you get the standard Flash popup menu. Not very helpful.

➠You Can't Save The Page

Another common task many web users take for granted is the ability to save a web page as text, as HTML, or in a format like Rich Text Format (RTF). With Flash content, this is impossible.

You may be able to save the file as a web archive, but there's no open standard for a "web archive," and getting at the content inside one is almost as hard as getting inside a Flash movie.

➠Flash Consumes More Of Your Computer

When I'm running Flash (as I am now, while shopping at Adobe), my Activity Monitor shows it consuming a steady 5 percent of my processing power and about 130 MB of my RAM.

For what? There's nothing a Flash movie can deliver that can't be delivered using open formats. Its heavy resource drain is one reason I keep Flash turned off when browsing the web.

➠You Can't View Flash on an iPhone or iPad

Apple has very good reasons for not supporting Flash on its tiny devices. As the previous point makes clear, Flash isn't a delicate, lightweight technology that your processor and RAM won't notice.

When trying to build hardware and software for small devices that work well and don't lead to memory problems or application crashes, why wouldn't you ditch unnecessary technologies like Flash?

Obviously, Steve Jobs stepped into a hornets' nest here, but I think the hornets were wrong.

Make Your Site Say No To Flash

It's easy! Just follow these three steps:

1. Download the Image(s)

You can copy and save one of the following images, or download the Photoshop source and make your own.

2. Add the CSS

Here are two CSS styles for positioning the Just Say No To Flash banner on your web page: the first places the banner at the top-right, the second at the bottom-right. Pick one, and copy and paste it into the `<head>` section of your HTML.

Top-right:

```html
<style>
a#noFlash {
  position: fixed;      /* stays in place while the page scrolls */
  z-index: 500;
  right: 0;
  top: 0;
  display: block;
  height: 160px;
  width: 160px;
  background: url(images/noFlashTR.png) top right no-repeat;
  text-indent: -999em;  /* hides the link text so only the banner image shows */
  text-decoration: none;
}
</style>
```

Bottom-right:

```html
<style>
a#noFlash {
  position: fixed;
  z-index: 500;
  right: 0;
  bottom: 0;
  display: block;
  height: 160px;
  width: 160px;
  background: url(images/noFlashTR.png) bottom right no-repeat;
  text-indent: -999em;
  text-decoration: none;
}
</style>
```

3. Add the HTML

Add the following to the beginning of your HTML, just below the opening `<body>` tag, or at the end, just before the closing `</body>` tag:

```html
<a id="noFlash" href="http://www.musingsfrommars.org/notoflash/" title="Just Say No To Flash!">Just Say No To Flash!</a>
```

Please always link your image to http://www.musingsfrommars.org/notoflash/ so everyone can find the information associated with the image.

Thanks to the "Too Cool for Internet Explorer" campaign run by w3junkies for the concept behind "Say No To Flash," as well as for the general outline of information that campaign provided.

Compass: A New Concept for Managing CSS Styles

Taking a Snapshot of the Semantic Web: Mighty Big, But Still Kinda Blurry

It's still somewhat difficult to get a handle on exactly what is meant by the "Semantic Web," and whether today's technologies are truly able to realize the vision of Tim Berners-Lee, who first articulated it back in 1999. From what I've read, I think there's general agreement that we aren't even close to being "there" yet, but that many of the ongoing Semantic Web activities, technologies, development platforms, and new applications are a big leap beyond the unstructured web that still dominates today.

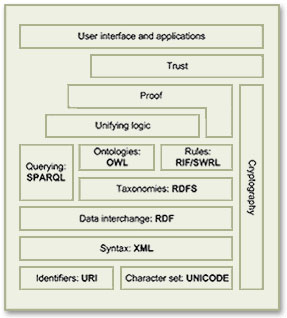

There is a huge, seemingly endless amount of work being done by thousands of groups all trying to contribute to making the Semantic Web a reality. In my few weeks of research, I still feel as though I've just dipped my toe into that vast lake of semantic experimentation. Partly as a result of the many disparate projects, however, it does become rather difficult to see the entire forest for all the tiny trees. That said, these thousands of groups do appear to be working more or less together on the basis of consensus-based open standards, and they have set up mechanisms to keep everyone abreast of new ideas, solutions, and projects, under the general leadership of the World Wide Web Consortium (W3C)'s Semantic Web Activity.

As a starting point for exploration into this topic, the Wikipedia article that describes the Semantic Web Stack is quite good. Along with a good overview and many useful links, the article includes the original conception of the Stack as designed by Berners-Lee.

Besides cataloguing the sheer number of different projects all tackling different aspects of building a Semantic Web, it's important to distinguish ongoing projects from those that expired years ago—a distinction that's not always readily apparent to those peering in from the outside. Even excluding these, there are far too many projects to read up on in a few weeks, so this snapshot is necessarily incomplete. But after having the content reviewed by some Semantic Web experts, I'm confident it includes all the most significant threads of this new web, which, as Berners-Lee envisioned it:


I have a dream for the Web [in which computers] become capable of analyzing all the data on the Web – the content, links, and transactions between people and computers. A ‘Semantic Web’, which should make this possible, has yet to emerge, but when it does, the day-to-day mechanisms of trade, bureaucracy and our daily lives will be handled by machines talking to machines. The ‘intelligent agents’ people have touted for ages will finally materialize.

In my tour of the Semantic Web as it exists today, it's interesting to note that most of the projects are geared not toward machine-to-machine interaction, but rather toward traditional human-to-machine interaction. Humans being by nature anthropocentric, the first steps being taken toward Berners-Lee's vision are to build systems that are semantically neutral with respect to human-to-human communication. Once we can reliably discuss topics without drifting off into semantic misunderstandings, then perhaps we can start teaching machines "what we mean by" ...

This paper is an attempt to assess the current state of today's steps, while compiling a list of resources that would prove useful to someone thinking about building a Semantic Web application in 2009.

Challenges to Building Semantic Web Applications

The process of applying concepts from the Semantic Web to build richer, more knowledge-oriented applications presents developers with several somewhat challenging prerequisites:

- Taxonomies for the content being published

- Ontologies for the content, based on the developed taxonomies

- Content tagged using the developed ontologies

- Database tools for storing and serving RDF and/or OWL ontologies

- Database tools for connecting ontologies with the content they describe

- Application servers specializing in querying and formatting semantic content

- User interface tools to present semantic content in optimum, not necessarily traditional, ways

The resources in the rest of this paper are grouped into the following sections:

- Ontology Development Tools

- Application Development Tools

- Database Tools

- Application Servers

- Semantic Application Demos

- Semantic Website Enhancements

- Other Resources

Ontology Development Tools

Protege

- Comes in two "flavors": Version 3.4 handles both OWL and RDF ontologies, while 4.0 is geared toward the latest OWL standards only.

- Impressive software for creating OWL ontologies.

- User interface is well organized, given the complexity of the objects and properties you're dealing with. The interface also must handle multiple views of the information, and it does so quite well.

- Numerous plugins for Protege make specific task work easier. There are many more plugins for Protege 3.4 than for 4.0 at this time.

- One plugin enables database connections, with which you can import entire databases or tables, including their contents. Tables typically become OWL classes, and columns become object properties. Impressively, this tool also creates a complete form with which you can enter new instance information. Each form field can also be customized after creation.

- Protege can also export ontologies to "OWL Document" format, which is a browsable HTML representation of the ontology.

- Stanford is developing a web-based version of Protege. The beta URL is at Web Protege.

- OntoLT. The OntoLT approach aims at a more direct connection between ontology engineering and linguistic analysis. Used with Protege, OntoLT can automatically extract concepts (Protégé classes) and relations (Protégé slots) from linguistically annotated text collections. It provides mapping rules, defined using a precondition language, that allow for a mapping between linguistic entities in text and class/slot candidates in Protégé. (This plug-in is only available for Protege 3.2.)

- There is a wide array of plug-ins for Protege 3.2, and a much smaller set for 4.0. This page from the "old" Protege wiki has good links to the full library of Protege plug-ins.

- Ontowiki is a tool providing support for agile, distributed knowledge engineering scenarios. It facilitates the visual presentation of a knowledge base as an information map, with different views on instance data. It enables intuitive authoring of semantic content, with an inline editing mode for editing RDF content, similar to WYSIWYG for text documents. Ontowiki is built on the Powl platform. I have downloaded and installed an instance of Ontowiki on my home computer; the installation and configuration were quite simple.

- An online demo of Ontowiki is available.

Application Development Tools

The list in this section is just a small subset of the tools now available for building Semantic Web applications. There are several complete, continuously updated lists on the web, including those at SemWebCentral and the Semantic Web Company.

Developer Resources

SemWebCentral is an Open Source development web site for the Semantic Web. It was established in January 2004 to support the Semantic Web community by providing a free, centralized place for Open Source developers to manage Semantic Web software and content development. Another purpose is to provide resources for developers or other interested parties to learn about the Semantic Web and how to begin developing Semantic Web content and software. SemWebCentral has the following major portals:

- Web Tools by category, a list of 148 projects organized by topic and a wide variety of other attributes.

- Code snippets, an archive of code snippets, scripts, and functions developers have shared with the open source software community.

- Learn About the Semantic Web, a collection of overviews, tutorials, and papers covering Semantic Web topics.

- Programming With RDF is part of the RDF Schemas website. It has links to repositories of programmer resources by programming language, showing the kind of documentation, code, and tutorials covered by the repository.

- Semantic Web Tools is a comprehensive list of over 700 developer tools now available for semantic-web-related projects. There are several such lists on the web, but this one is particularly good since it breaks the list down by category and language, making it much easier to narrow down the list you're interested in. This site is hosted by the Semantic Web Company.

- Developers Guide to Semantic Web Toolkits collects links to Semantic Web toolkits for different programming languages and gives an overview of the features of each toolkit, the strength of the development effort, and the toolkit's user community.


- Extensions and Plugins

- Rio, a set of parsers and writers for RDF that has been designed with speed and standards-compliance as the main concerns. Currently it supports reading and writing of RDF/XML and N-Triples, and writing of N3. Rio is part of Sesame, but can also be downloaded and used separately.

- Elmo is a toolkit for developing Semantic Web applications using Sesame. Elmo wraps Sesame, providing a dedicated API for a number of well known web ontologies including Dublin Core, RSS and FOAF. The dedicated API makes it easier to work with RDF data for the supported ontologies. Elmo also offers a set of tools related to the supported ontologies, including an RDF crawler, a FOAF smusher and a FOAF validator.
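To make the idea of an RDF parser and writer concrete, here is a minimal Python sketch of the N-Triples format that Rio reads and writes. It is not Rio's (Java) API: the `IRI` and `Literal` classes and the function names are hypothetical, and the sketch handles only IRIs and plain string literals.

```python
import re
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass(frozen=True)
class IRI:
    value: str


@dataclass(frozen=True)
class Literal:
    value: str


Term = Union[IRI, Literal]
Triple = Tuple[IRI, IRI, Term]

# Escapes applied inside string literals; the backslash must be handled first.
_ESCAPES = [("\\", "\\\\"), ('"', '\\"'), ("\n", "\\n"), ("\r", "\\r")]
_UNESCAPES = {escaped: raw for raw, escaped in _ESCAPES}

# Subject IRI, predicate IRI, then an IRI or a quoted literal, then " ."
_LINE = re.compile(r'^<([^>]*)> <([^>]*)> (?:<([^>]*)>|"((?:[^"\\]|\\.)*)") \.$')


def _format(term: Term) -> str:
    if isinstance(term, IRI):
        return f"<{term.value}>"
    text = term.value
    for raw, escaped in _ESCAPES:
        text = text.replace(raw, escaped)
    return f'"{text}"'


def write_ntriples(triples: List[Triple]) -> str:
    """Serialize triples as N-Triples: one 'subject predicate object .' per line."""
    return "".join(f"{_format(s)} {_format(p)} {_format(o)} .\n" for s, p, o in triples)


def read_ntriples(data: str) -> List[Triple]:
    """Parse the subset of N-Triples produced by write_ntriples."""
    triples = []
    for line in data.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blank lines and comments
            continue
        match = _LINE.match(line)
        if match is None:
            raise ValueError(f"unsupported N-Triples line: {line!r}")
        s, p, o_iri, o_lit = match.groups()
        if o_iri is not None:
            obj: Term = IRI(o_iri)
        else:
            obj = Literal(re.sub(r'\\[\\"nr]', lambda m: _UNESCAPES[m.group(0)], o_lit))
        triples.append((IRI(s), IRI(p), obj))
    return triples
```

Real N-Triples also allows blank nodes, language tags, and datatyped literals, which a production parser such as Rio has to handle.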

Sesame is an open source Java framework for storing, querying and reasoning with RDF and RDF Schema. It can be used as a database for RDF and RDF Schema, or as a Java library for applications that need to work with RDF internally. Sesame is extremely flexible in how it's used and can work with a variety of data stores, including relational databases and native RDF files. It can be deployed as a server, or as a library incorporated into another application framework. For example, Sesame can be used simply to read a big RDF file, find the relevant information for an application, and use that information. Sesame provides the necessary tools to parse, interpret, query and store all this information, embedded in another application or, if appropriate, in a separate database or even on a remote server. More generally, Sesame provides application developers a toolbox that contains all the necessary tools for building applications with RDF. Commercial support for Sesame is available from Aduna Software.

Sesame also has a large ecosystem of add-ons and related toolsets; the main ones are listed above.

- Jess is a rule engine and scripting environment written entirely in Sun's Java language by Ernest Friedman-Hill at Sandia National Laboratories in Livermore, CA. Using Jess, you can build Java software that has the capacity to "reason" using knowledge you supply in the form of declarative rules. Jess is small, light, and one of the fastest rule engines available. Its powerful scripting language gives you access to all of Java's APIs. Jess includes a full-featured development environment based on the award-winning Eclipse platform.

A Jess Plugin for Protege is available, integrating Jess development with your ontology.


- ARQ, which is a query engine for Jena. ARQ supports multiple query languages (SPARQL, RDQL, and ARQ, the engine's own language), and besides Jena it can be used with general purpose engines and remote access engines. ARQ can also rewrite queries to SQL.

- Joseki, an HTTP server-based system that supports SPARQL queries. Joseki features a WebAPI for the remote query and update of RDF models, including both a client component and an RDF server. The Joseki server can run embedded in an application, as a standalone program, or as a web application inside a suitable application server (such as Tomcat). It provides the operations of query and update on models it hosts.
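Because servers like Joseki speak the SPARQL Protocol over HTTP, a client needs little more than a standard library. This Python sketch builds, but does not send, a GET request that passes the query in the `query` parameter and asks for the W3C SPARQL JSON results format (assuming the server supports it), then flattens such a JSON document into rows. The endpoint URL is a hypothetical placeholder, and the sample results in the test are made up.

```python
import json
from typing import Dict, List, Optional
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint URL; substitute the address of your own SPARQL server.
ENDPOINT = "http://localhost:8080/sparql"


def build_query_request(endpoint: str, query: str) -> Request:
    """Build (but do not send) a SPARQL Protocol query as an HTTP GET request."""
    url = endpoint + "?" + urlencode({"query": query})
    # Ask for the W3C "SPARQL Query Results JSON Format".
    headers = {"Accept": "application/sparql-results+json"}
    return Request(url, headers=headers, method="GET")


def rows_from_json(body: str) -> List[Dict[str, Optional[str]]]:
    """Flatten a SPARQL JSON results document into one {variable: value} dict per solution."""
    document = json.loads(body)
    variables = document["head"]["vars"]
    rows = []
    for binding in document["results"]["bindings"]:
        # A variable left unbound in a solution is simply absent from `binding`.
        rows.append({v: binding.get(v, {}).get("value") for v in variables})
    return rows
```

To actually run a query, pass the request to `urllib.request.urlopen` and feed the decoded response body to `rows_from_json`.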

Jena is a Java framework for building Semantic Web applications. It provides a programmatic environment for RDF, RDFS, OWL, and SPARQL, and includes a rule-based inference engine. Jena is open source and grew out of work with the HP Labs Semantic Web Programme. Important tools related to the Jena framework include ARQ and Joseki, described above.


- RDF/XML parser and writer

- OWL/XML parser and writer

- OWL Functional Syntax parser and writer

- Turtle parser and writer

- KRSS parser

- OBO Flat file format parser

- Support for integration with reasoners such as Pellet and FaCT++

The OWL API is an open-source Java interface and implementation for OWL, focused on OWL 2, which encompasses OWL Lite, OWL DL, and some elements of OWL Full. The OWL API was used to build Protege 4.0 and was developed by Co-Ode, the company that works with Stanford University on the Protege project. Its features include the parsers, writers, and reasoner integrations listed above.

- Powl is a web-based platform for building applications designed to support collaborative building and managing of ontologies. It supports many of the features of mature tools like Protege, but for web applications that can be used for team development of ontologies. Powl is an open source project that uses PHP and various RDBMS systems on the back-end. Ontowiki is an example of a collaborative application built using Powl.

- The University of Victoria's Computer Human Interaction & Software Engineering Lab (CHISEL) has developed Jambalaya, a visualization tool based on their own Shrimp software. Jambalaya was developed as a plug-in for the Protégé tool, and uses Shrimp to visualize and query ontologies and knowledge bases the user has created. The University of Georgia (referenced in the next section) built their OntoVista tool using Jambalaya.

- The University of Georgia, as described in the next section of Semantic Applications, has built a large number of interesting semantic software tools. OntoVista is a particularly useful ontology visualization, navigation, and query tool based on Jambalaya. OntoVista is adaptable to the needs of different domains, especially in the life sciences. The tool provides a semantically enhanced graph display that gives users a more intuitive way of interpreting nodes and their relationships. Additionally, OntoVista provides comfortable interfaces for searching, semantic edge filtering and quick-browsing of ontologies.

- SWRL is intended to be the rule language of the Semantic Web and is based on OWL. It allows users to write rules to reason about OWL instances and to infer new knowledge about those instances.

- Pellet is an open source, OWL DL reasoner in Java that is developed, and commercially supported, by Clark & Parsia LLC. Pellet provides standard and cutting-edge reasoning services. It also incorporates various optimization techniques described in the DL literature and contains several novel optimizations for nominals, conjunctive query answering, and incremental reasoning.
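As an illustration of what a SWRL rule does, the familiar example hasParent(?x, ?y) ∧ hasBrother(?y, ?z) → hasUncle(?x, ?z) can be evaluated over a set of triples by joining on the shared variable ?y, and then re-applied until no new facts appear (forward chaining). This is a plain-Python sketch, not how Pellet or any particular SWRL engine works internally; the property names and individuals are illustrative.

```python
from typing import Callable, Dict, Iterable, Set, Tuple

Triple = Tuple[str, str, str]
Rule = Callable[[Set[Triple]], Set[Triple]]


def uncle_rule(facts: Set[Triple]) -> Set[Triple]:
    """hasParent(?x, ?y) ∧ hasBrother(?y, ?z) → hasUncle(?x, ?z)"""
    # Index hasBrother facts by subject so the join on ?y is a dictionary lookup.
    brothers: Dict[str, Set[str]] = {}
    for s, p, o in facts:
        if p == "hasBrother":
            brothers.setdefault(s, set()).add(o)
    return {
        (x, "hasUncle", z)
        for x, p, y in facts
        if p == "hasParent"
        for z in brothers.get(y, ())
    }


def forward_chain(facts: Iterable[Triple], rules: Iterable[Rule]) -> Set[Triple]:
    """Apply every rule repeatedly until a full pass adds no new facts."""
    known = set(facts)
    rule_list = list(rules)
    while True:
        new: Set[Triple] = set()
        for rule in rule_list:
            new |= rule(known) - known
        if not new:
            return known
        known |= new
```

Because the loop stops only at a fixpoint, rules whose conclusions feed other rules' premises need no extra machinery.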

Pronto is an extension of Pellet that enables probabilistic knowledge representation and reasoning in OWL ontologies. Pronto is distributed as a Java library equipped with a command line tool for demonstrating its basic capabilities. It is currently at the development stage: more robust and mature than a mere prototype, but less mature than a production-level system like Pellet.

Pronto offers core OWL reasoning services for knowledge bases containing uncertain knowledge; that is, it processes statements like “Bird is a subclass-of Flying Object with probability greater than 90%” or “Tweety is-a Flying Object with probability less than 5%”. The use cases for Pronto include ontology and data alignment, as well as reasoning about uncertain domain knowledge generally; for example, risk factors associated with medical conditions like breast cancer.


- This is an online demo of the Validator.

This online tool, developed as part of the WonderWeb Project, attempts to validate an ontology against the different "species" of OWL. Any constructs found which relate to a particular species will be reported. In addition, if requested, the validator will return a description of the classes, properties and individuals in the ontology in terms of the OWL Abstract Syntax.

- Seamark Navigator is part of the commercial Information Access Platform from Siderean. Navigator is the relational navigation server component, which discovers and indexes content, pre-calculates relationships and suggests paths for data exploration. Its primary architectural components include a metadata aggregator, a scalable RDF store, and a relational navigation engine, all within an industry-standard Web services interface.


- This online demo takes a URL as input and returns a set of metadata extracted from the page, which developers can use to help develop taxonomies for their content.

The Calais Web Service automatically creates rich semantic metadata for the content you submit – in well under a second. Using natural language processing, machine learning and other methods, Calais analyzes your document and finds the entities within it. Calais goes beyond classic entity identification and returns facts and events hidden within your text as well.


- A small online demo shows sample text and entities and actions extracted from it.

Cortex Competitiva employs both state-of-the-art text mining technologies and established data mining techniques. The main modules of the platform are Information Collection, Information Organization and Collaboration, and Information Use Analysis.

- IdentiFinder Text Suite, a product of BBN Technologies, lets users quickly sift through documents, web pages, and email to discover relevant information. It helps solve the classic problems of text mining: First, how to identify significant documents and then, how to locate the most important information within them.

- DL-Learner is a tool from AKSW for learning concepts in Description Logics (DLs) from user-provided examples. Equivalently, it can be used to learn classes in OWL ontologies from selected objects, constructing new knowledge about existing data sets. With DL-Learner, users provide positive and negative examples from a knowledge base for a not yet defined concept. The goal of DL-Learner is to derive a concept definition such that, when the definition is added to the background knowledge, all positive examples follow and none of the negative examples follow. See also the Wikipedia entry for ILP (Inductive Logic Programming): what DL-Learner tackles is the ILP problem applied to Description Logics / OWL.

- GRDDL is a mechanism for Gleaning Resource Descriptions from Dialects of Languages. It is a technique for obtaining RDF data from XML documents and in particular XHTML pages. GRDDL provides an inexpensive set of mechanisms for bootstrapping RDF content from XML and XHTML. GRDDL does this by shifting the burden of formulating RDF away from the author to transformation algorithms written specifically for XML dialects such as XHTML. A repository of transformations is available.

- The Simile project has developed a large number of "RDFizers," which convert various file formats into RDF. This page also contains links to the many RDFizers developed by other organizations to handle even more document types.
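The learning problem DL-Learner addresses can be illustrated with a deliberately tiny version: given the named classes each individual belongs to, find the shortest conjunction of classes that every positive example belongs to and no negative example does. DL-Learner's own algorithms search a far richer space of OWL class expressions; this brute-force Python sketch, with made-up individuals and class names in the test, only shows the shape of the problem.

```python
from itertools import combinations
from typing import Dict, Optional, Set, Tuple


def learn_conjunction(
    types: Dict[str, Set[str]],
    positives: Set[str],
    negatives: Set[str],
    max_length: int = 3,
) -> Optional[Tuple[str, ...]]:
    """Return the shortest conjunction C1 ⊓ ... ⊓ Cn (as a tuple of class names) such that
    every positive example is an instance of all Ci and no negative example is."""
    if not positives:
        raise ValueError("need at least one positive example")
    # Only classes shared by every positive example can appear in the definition,
    # so every candidate built from them already covers all positives.
    shared = sorted(set.intersection(*(types[p] for p in positives)))
    for length in range(1, max_length + 1):
        for candidate in combinations(shared, length):
            # A negative example "follows" if it belongs to every class in the candidate.
            if not any(set(candidate) <= types[n] for n in negatives):
                return candidate
    return None  # no conjunction up to max_length separates the examples
```

For instance, with Ann as the only positive example and a father and a childless woman as negatives, the sketch settles on Female ⊓ Parent, a definition one might name Mother.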

Database Tools

Query Languages and Tools

SPARQL Query Language for RDF: SPARQL is a W3C specification for querying RDF repositories. It can be used to express queries for native RDF files or for RDF generated from stored ontologies via middleware. The results of SPARQL queries can be result sets or RDF graphs.
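To show what a SPARQL engine does at its core, the sketch below evaluates a basic graph pattern (the triple patterns inside a WHERE clause) against an in-memory list of triples, extending variable bindings one pattern at a time, and then projects the selected variables as SELECT does. It is a plain-Python illustration, not the algorithm of any engine mentioned here, and the example data is made up.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Triple = Tuple[str, str, str]
Binding = Dict[str, str]


def extend(binding: Binding, pattern: Triple, triple: Triple) -> Optional[Binding]:
    """Return `binding` extended so that `pattern` matches `triple`, or None if it can't."""
    result = dict(binding)
    for term, value in zip(pattern, triple):
        if term.startswith("?"):  # a variable: bind it, or check its existing binding
            if result.setdefault(term, value) != value:
                return None
        elif term != value:  # a constant must match exactly
            return None
    return result


def evaluate_bgp(patterns: Sequence[Triple], graph: Sequence[Triple]) -> List[Binding]:
    """All bindings under which every triple pattern matches some triple in the graph."""
    solutions: List[Binding] = [{}]
    for pattern in patterns:
        next_solutions: List[Binding] = []
        for binding in solutions:
            for triple in graph:
                extended = extend(binding, pattern, triple)
                if extended is not None:
                    next_solutions.append(extended)
        solutions = next_solutions
    return solutions


def select(variables: Sequence[str], patterns: Sequence[Triple], graph: Sequence[Triple]):
    """Project each solution onto the requested variables, like SPARQL SELECT."""
    return [tuple(b[v] for v in variables) for b in evaluate_bgp(patterns, graph)]


if __name__ == "__main__":
    FOAF = "http://xmlns.com/foaf/0.1/"
    graph = [
        ("ex:alice", FOAF + "knows", "ex:bob"),
        ("ex:bob", FOAF + "name", "Bob"),
    ]
    # SELECT ?name WHERE { ex:alice foaf:knows ?friend . ?friend foaf:name ?name }
    query = [("ex:alice", FOAF + "knows", "?friend"), ("?friend", FOAF + "name", "?name")]
    print(select(["?name"], query, graph))  # [('Bob',)]
```

Real engines reorder patterns and use indexes instead of scanning the whole graph for every pattern, but the bindings they produce are the same.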

- Owlgres is an open source, scalable reasoner for OWL2. Owlgres combines Description Logic reasoning with the data management and performance properties of an RDBMS. Owlgres is intended to be deployed with the open source PostgreSQL database server. Owlgres’s primary service is conjunctive query answering, using SPARQL-DL.

- D2RQ is a declarative language to describe mappings between relational database schemas and OWL/RDF ontologies. The D2RQ platform uses these mappings to enable applications to access RDF views on a non-RDF database through the Jena and Sesame APIs, as well as over the Web via the SPARQL Protocol and as Linked Data.

- OntoSynt provides automatic support for extracting from a relational database schema its conceptual view. That is, it extracts semantics "hidden" in the relational sources by wrapping them by means of an ontology. The approach is specifically tailored for semantic information access, enabling queries over an ontology to be answered by using the data residing in its relational sources. Its web interface accepts an XML representation of an RDBMS schema, which can be generated using a tool like SQL Fairy.

- Relational.OWL is an open source application that automatically extracts the semantics of virtually any relational database and transforms this information automatically into RDF/OWL ontologies that can be processed by Semantic Web applications.

- SQL Fairy is a group of Perl modules that manipulate structured data definitions (mostly database schemas) in interesting ways, such as converting among different dialects of CREATE syntax (e.g., MySQL-to-Oracle), visualizations of schemas, automatic code generation, converting non-RDBMS files to SQL schemas (xSV text files, Excel spreadsheets), serializing parsed schemas (e.g., via XML), creating documentation (e.g., HTML), and more.
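The basic idea behind tools such as D2RQ and Relational.OWL, viewing relational rows as RDF, can be sketched with Python's built-in `sqlite3` module: each table becomes a class, each row an instance, and each non-key column a property. The IRI scheme under `http://example.org/db/` is a hypothetical convention chosen for this example; D2RQ's mapping language makes all of this configurable, and these tools also handle datatypes and foreign keys, which this sketch ignores.

```python
import sqlite3
from typing import Any, List, Tuple

RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def table_to_triples(
    conn: sqlite3.Connection, table: str, key: str, base: str = "http://example.org/db/"
) -> List[Tuple[str, str, Any]]:
    """Expose the rows of `table` as (subject, predicate, object) triples."""
    # Identifiers can't be bound as SQL parameters; only pass trusted table/column names.
    cursor = conn.execute(f'SELECT * FROM "{table}" ORDER BY "{key}"')
    columns = [description[0] for description in cursor.description]
    class_iri = base + table  # the table becomes a class
    triples = []
    for row in cursor:
        record = dict(zip(columns, row))
        subject = f"{class_iri}/{record[key]}"  # each row becomes an instance
        triples.append((subject, RDF_TYPE, class_iri))
        for column, value in record.items():
            # Every other non-NULL column becomes a property of that instance.
            if column != key and value is not None:
                triples.append((subject, f"{class_iri}#{column}", value))
    return triples
```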

Application Servers

OpenLink Virtuoso Universal Server

Virtuoso, developed by OpenLink Software, is a complex product that appears to be a total solution for hosting Semantic Web applications, among other uses. In the company's words, from a recent release: "Virtuoso enables end users, systems architects, systems integrators, and developers to interact with data at the conceptual as opposed to the traditional logical level. Data about customers, suppliers, invoices, and orders, stored in existing ODBC- or JDBC-accessible database systems such as Oracle, Informix, Ingres, SQL Server, Sybase, Progress, and MySQL, can be presented in RDF form for use in Semantic Web applications."

- Virtuoso is also available in an Open Source Edition, a very active project that includes a large number of modules for use with various content management systems. The main difference between the open source and commercial editions of Virtuoso is the Virtual Database Engine, which essentially enables an application to incorporate multiple data servers in its queries.

Also available as open source from OpenLink is its OpenLink Ajax Toolkit (OAT), which comes with a wide range of user interface and data widgets, as well as complete applications for building data queries, designing databases, and designing web forms. The OpenLink Data Explorer is one of these standalone OAT applications. Widgets that are part of OAT include:

- Charts

- Tables

- Pivot Tables

- Tree controls

- Docks

- Sidebars

- Timelines

- RDF Visualizer

- Edit-in-place

- Buttons and Sliders

- Windows

- Tag clouds

- Mashups (e.g., data with Google Maps)

- Data modeling

- OpenLink also provides OpenLink Data Spaces (ODS), which run on the Virtuoso server, either the commercial or open-source editions. ODS enables developers to create a presence in the Semantic Web via Data Spaces derived from Weblogs, Wikis, Feed Aggregators, Photo Galleries, Shared Bookmarks, Discussion Forums and more. Data Spaces thus provide a foundation for the creation, processing and dissemination of knowledge for the emerging Semantic Web. ODS is pre-installed as part of the demonstration database bundled with the Virtuoso Open-Source Edition. Existing ODS modules include:

- Blogs

- Wikis

- Briefcase (file-sharing)

- Feed Manager

- Calendar

- Bookmark Manager

- Community (small-group spaces)

- The Cyc Knowledge Server is a very large, multi-contextual knowledge base and inference engine developed by Cycorp. The Cyc technology includes the following components:

- Cycorp also offers an open-source version of Cyc called OpenCyc. OpenCyc contains the full set of (non-proprietary) Cyc terms. The portal for the OpenCyc project, where developers can download the software and find documentation and information about ongoing projects, is OpenCyc.org.

- Intelligent Topic Manager (ITM) is a commercial semantic software platform that enables a wide range of applications in enterprise information systems. ITM is designed to help organizations leverage, organize and model content and knowledge, to manage business reference models and taxonomies, to categorize and classify content, and to empower search. The platform consists of the following components and functionalities:

- Terminology, Thesaurus, Taxonomy, Metadata dictionary

- e-Catalog

- Knowledge management software

- Semantic Widgets

- Ontology Management

- Semantic indexing of text, video, image and sound content

- Automated updating of a knowledge base/ontology

- Reasoning and Inference

- Management of Publication Taxonomies for faceted search

- Generating Publication indexes and tables

- Topic Maps

- Oracle Spatial 11g is an open, scalable RDF management platform. Based on a graph data model, it persists, indexes, and queries RDF triples much as it handles other object-relational data types. Application developers can use the Oracle server to design and develop a wide range of semantically enhanced business applications.

- Asio Parliament, released as open source, implements a high-performance storage engine that is compatible with the RDF and OWL standards. However, it is not a complete data management system on its own. Parliament is typically paired with a query processor, such as Sesame or Jena, to implement a complete data management solution that supports the SPARQL standard. In addition, Parliament includes a high-performance SWRL-compliant rule engine, which serves as an efficient inference engine. An inference engine examines a directed graph of data and adds data to it based on a set of inference rules. This enables Parliament to fill in gaps in the data automatically and transparently, inferring additional facts and relationships that enrich query results.

- Asio Cartographer is a graphical ontology mapper based on SWRL. It utilizes the core functionality of BBN's Snoggle open-source mapping tool to assist in aligning OWL ontologies. It lets users visualize ontologies and then draw mappings between them on an intuitive graphical canvas. Cartographer then transforms those maps into SWRL/RDF or SWRL/XML for use in a knowledge base.

- Asio Scout provides semantic bridges to relational databases and web services that let an organization keep its existing systems in place for as long as necessary, for example to support ongoing operations. Scout's semantic bridges act like any passive data consumer, but unlike similar tools, they work in concert with Asio Semantic Query Distribution's high-level perspective to enable consolidated knowledge discovery that wasn't previously possible. Scout can be used for web portals, standalone desktop applications, or web-enabled applications.
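The description of Parliament's rule engine above stays abstract, so here is a minimal Python sketch of what "adding data to a graph based on inference rules" can look like, using simple forward chaining: apply every rule to the current set of triples, add whatever is new, and repeat until nothing changes. This is not Parliament's implementation and not SWRL; the two RDFS-style rules and the `ex:` names are assumptions chosen for illustration.

```python
# Toy forward-chaining inference over (subject, predicate, object) triples.
# The two rules below are RDFS-style examples chosen for illustration;
# they are not Parliament's SWRL rule set.

SUBCLASS = "rdfs:subClassOf"
TYPE = "rdf:type"


def infer(triples):
    """Apply the rules repeatedly until no new triples are produced (a fixpoint)."""
    graph = set(triples)
    while True:
        new = set()
        for s, p, o in graph:
            for s2, p2, o2 in graph:
                if p2 != SUBCLASS or s2 != o:
                    continue
                if p == SUBCLASS:
                    # Rule 1: (A subClassOf B) and (B subClassOf C) => (A subClassOf C)
                    new.add((s, SUBCLASS, o2))
                elif p == TYPE:
                    # Rule 2: (x type A) and (A subClassOf B) => (x type B)
                    new.add((s, TYPE, o2))
        new -= graph
        if not new:
            return graph
        graph |= new


facts = {
    ("ex:Grant", SUBCLASS, "ex:Award"),
    ("ex:Award", SUBCLASS, "ex:FundingInstrument"),
    ("ex:grant42", TYPE, "ex:Grant"),
}

inferred = infer(facts) - facts
for triple in sorted(inferred):
    print(triple)
```

The loop stops at a fixpoint, which is why `ex:grant42 rdf:type ex:FundingInstrument` is found on the second pass, after `ex:grant42 rdf:type ex:Award` has been derived. This naive version compares every pair of triples on each pass; a production rule engine would typically use indexing and incremental evaluation instead.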

Semantic Application Demos

Browsers and Search Portals

- Disco - Hyperdata Browser is a simple browser for navigating the Semantic Web as an unbound set of data sources. The browser renders in HTML all information that it can find on the Semantic Web about a specific resource. This resource description contains hyperlinks that allow you to navigate between resources. While you move from one resource to another, the browser dynamically retrieves information by dereferencing HTTP URIs and by following rdfs:seeAlso links.

- Here is an online demo of Disco's presentation of DBpedia's Semantic Web database on the concept "Sociobiology."

- The Umbel Subject Concepts Explorer presents Umbel, a lightweight ontology structure for relating Web content and data to a standard set of subject concepts. Its purpose is to provide a fixed set of reference points in a global knowledge space. These subject concepts have defined relationships between them, and can act as binding or attachment points for any Web content or data.

- Here is an online demo of Umbel's presentation of the concept "Field of Study."

- OpenLink Data Explorer is one of the products developed from the open-source edition of the Virtuoso Universal Server. Virtuoso is the platform used by the DBpedia project, including the demos on the DBpedia page. The demo below shows the XHTML view option of a Data Viewer ontology query.

- Here is an online demo of the OpenLink Data Explorer presentation of the concept "speed of light."

- Zitgist DataViewer lets users browse linked data on the web, starting from an RDF or OWL ontology URL.

- Here is an online demo of the Zitgist viewer browsing an ontology on the concept of "music genre."

- The Sindice Semantic Web Index monitors and harvests web data published as RDF and Microformats and makes it available under a coherent umbrella of functionalities and services. Its index of data is presented as a search portal much like Google. Sindice was created at DERI, the world’s largest institute for Semantic Web research. It is based on DERI’s unique cluster technology, which indexes and operates over terascale semantic data sets (trillions of statements) while also providing very high query throughputs per cluster size. Using this cluster technology, Sindice performs sophisticated reasoning that dramatically enhances data reusability, search precision, and recall. It obtains data through focused crawling methods that detect and concentrate on metadata-rich internet sources.

- Here is an online demo of the Sindice search engine.

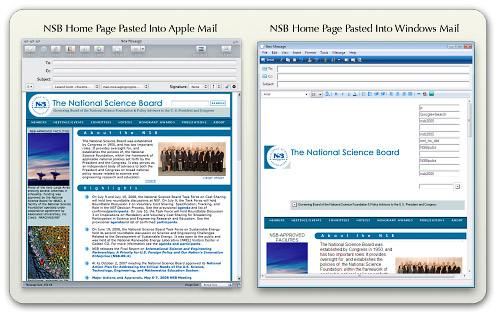

- The RKB Explorer is an application built using awards data from the National Science Foundation (NSF). It has used this data to build ontologies around NSF grants, and users can search and browse the data through the Explorer. All URIs on this domain are resolvable, and search results deliver HTML or RDF, depending on the content. The browse interface provides viewing and navigating using RDF triples, and the query interface provides access using SPARQL. I discovered this useful application through a search on "NSF funding" using Sindice.

- Here is an online demo for searching NSF Awards using RKB Explorer.

- Marbles Linked Data Browser is a server-side application that formats Semantic Web content for XHTML clients using Fresnel lenses and formats. Colored dots are used to correlate the origin of displayed data with a list of data sources, hence the name. Marbles provides display and database capabilities for DBpedia Mobile.

- Here is an online demo of the Marbles browser viewer displaying linked data for the National Science Foundation.

- The Cyc Foundation Concept Browser lets users search and browse the content of the OpenCyc knowledge base.

- Brownsauce is a Semantic Web browser that lets users browse RDF files on the web. It runs as a local Java client and has a built-in Jetty web server. Brownsauce uses the Jena Semantic Web framework.

- DBpedia is a community effort to extract structured information from Wikipedia and to make this information available on the Web. DBpedia allows you to ask sophisticated queries against Wikipedia and to link other datasets on the Web to Wikipedia data. DBpedia is one of the projects developed or sponsored by AKSW. A wide variety of articles and publications about DBpedia have been published (see the Resources section of this report).

- jSpace is a Java Web Start application that demonstrates how one might search and query a given ontological database. Several example databases are available to download for use with jSpace. jSpace's development was apparently inspired by mSpace. (mSpace was an innovative, but now defunct, project that attempted to merge the power of Google with the powerful interface of iTunes. Although the mSpace demo of a classical music explorer is no longer accessible, its video demos are well worth checking out.)

- Owlsight is an innovative web application that uses the Google Web Toolkit and the Ext JavaScript library to let users navigate OWL ontologies, browsing the relationships between classes, properties, and instances. Owlsight uses the Pellet ontology reasoner.

- OpenCyc for the Semantic Web is both a project and an OWL ontology browser. Using this tool, users can access the entire OpenCyc content as downloadable OWL ontologies as well as via Semantic Web endpoints (i.e., permanent URIs). These URIs return RDF representations of each Cyc concept as well as a human-readable version when accessed via a web browser.

- Here is an online demo of the OpenCyc Semantic Web result for "National Science Foundation."

- The KiWi wiki project proposes a new approach to knowledge management that combines the wiki philosophy with the intelligence and methods of the Semantic Web. (KiWi stands for "Knowledge in a Wiki.")

- DeepaMehta is a software platform for knowledge management. Knowledge is represented in a semantic network and is handled collaboratively. The DeepaMehta user interface is based entirely on Mind Maps / Concept Maps. Instead of handling information through applications, windows, and files, DeepaMehta users handle all kinds of information directly and individually.

- Here is an online demo of the DeepaMehta interface on the subject of the computer user interface.

- Semantic MediaWiki and SMW+ are extensions to the MediaWiki platform, described elsewhere in this report.

- MIT's Simile project has been extremely creative and productive in applying concepts of linked data, RDF, and the Semantic Web generally to demonstration applications, all available as open source. (Simile is an acronym for "Semantic Interoperability of Metadata and Information in unLike Environments".) Some of its projects are included elsewhere in this report, but here is a list of some others relevant to the Semantic Web:

- Longwell, a server application that combines faceted browsing with the visualization of RDF stores.

- PiggyBank is a Firefox add-on that enables users to develop "mashups" of web data by using "screen scrapers." The software also allows users to tag the information they find and embed RDF into their content.

- RDFizers, described elsewhere in this report.

- Referee, a server application that creates browsable RDF files from web server logs.

- Welkin, an RDF visualizer built as a client-side Java application. (Note: I couldn't get it to run on my Mac, even though MIT makes a Mac OS X disk image available.)

- Fresnel, a vocabulary for displaying RDF.

- Banach, a collection of operators that work on RDF graphs to infer, extend, emerge or otherwise transform a graph into another.

- Data Collection, a project that aims to develop a collection of RDF data sets that are generally useful for the metadata research and tools community.

- DERI (Digital Enterprise Research Institute) International is a collection of bilateral agreements between like-minded institutes working on the Semantic Web and Web Science. Its mission is to exploit semantics so that people, organizations, and systems can collaborate and interoperate on a global scale. DERI conducts and funds research in Semantic Web technologies, conducts projects that have led to numerous prototype applications, and develops ontologies. The following are a few interesting links from DERI's Irish branch in Galway:

- Research Clusters covering such topics as eLearning, Semantic Reality, Semantic Web Services, Industrial and Scientific Applications of Semantic Web Services, and Social Software. Each cluster has its own website and projects.

- Research Projects, a lengthy list of ongoing projects.

- Tools, a lengthy list of software tools available for download, typically from SourceForge.

- The University of Georgia's Large Scale Distributed Information Systems (LSDIS) lab has a wide array of semantic applications available. The online repository has descriptions, downloads, and online demos. The applications cover such functions as visualization, ontology queries, ontology browsing, web services, and more.

- 10 Semantic Apps To Watch: From the ReadWriteWeb site, this is an intriguing list of new semantic-web-related applications that are now available. The article first explains what its authors mean by a "Semantic Application," and then briefly describes each application's innovative use of this new technology. The ten applications listed are: It's also interesting to read the comments at the end of this article, many of which are from readers pointing out other semantic applications they have discovered.
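Disco's approach, described at the start of the Browsers and Search Portals list (dereference a resource's URI, collect what comes back, then follow `rdfs:seeAlso` links to other sources), amounts to a breadth-first crawl. The Python sketch below simulates that loop. To keep it self-contained, the Web is replaced by a hypothetical in-memory dictionary, and `fetch`, `describe`, and all URIs and data are made up; a real browser would issue HTTP requests, parse the returned RDF, and enforce timeouts.

```python
from collections import deque

SEE_ALSO = "rdfs:seeAlso"

# Hypothetical stand-in for the Web: each URI maps to the triples a server
# might return when that URI is dereferenced. All URIs here are made up.
MOCK_WEB = {
    "http://example.org/nsf": [
        ("http://example.org/nsf", "rdfs:label", "National Science Foundation"),
        ("http://example.org/nsf", SEE_ALSO, "http://example.net/nsf-extra"),
    ],
    "http://example.net/nsf-extra": [
        ("http://example.org/nsf", "ex:founded", "1950"),
        # A link back to the first document, to show that cycles are handled.
        ("http://example.net/nsf-extra", SEE_ALSO, "http://example.org/nsf"),
    ],
}


def fetch(uri):
    """Pretend to dereference `uri`; a real browser would do an HTTP GET and parse RDF."""
    return MOCK_WEB.get(uri, [])


def describe(resource, max_docs=10):
    """Gather triples about `resource`, following every rdfs:seeAlso link breadth-first."""
    queue, visited, found = deque([resource]), set(), set()
    while queue and len(visited) < max_docs:
        uri = queue.popleft()
        if uri in visited:
            continue
        visited.add(uri)
        for s, p, o in fetch(uri):
            if s == resource:
                found.add((s, p, o))  # keep only statements about our resource
            if p == SEE_ALSO and o not in visited:
                queue.append(o)       # more data may be found at the linked document
    return found


for triple in sorted(describe("http://example.org/nsf")):
    print(triple)
```

The `visited` set keeps the crawl from looping when documents link back to each other, and `max_docs` bounds how far it wanders.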

Semantic Website Enhancements

- Semantic Web Crawling: A Sitemap Extension. This specification allows website managers to provide an RDF sitemap, making the site's RDF data visible to crawlers and users browsing the Semantic Web.

- Triplify is an open-source, lightweight add-on to web applications that can read the content of the application's relational database(s) and expose their inherent semantics. According to the Triplify website, a Triplify configuration for a typical Web application can be created in less than an hour. Triplify works by defining relational database queries for a specific Web application that retrieve its valuable information, and then converting the results of these queries into RDF, JSON, and Linked Data. A "triplified" web application can then provide its data to other applications on the web, enabling use of its information in "mashups."

The Triplify project already has configurations for a variety of widely used content management systems, such as OpenConf, WordPress, Drupal, Joomla!, osCommerce, and phpBB. (The page that has links to these configurations also has a great list of other Semantic Web resources.) Triplify is one of the applications developed by AKSW. (I plan to download Triplify and integrate it into an instance of WordPress on my home computer.)
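Triplify's basic idea, described above, is to run SQL queries against an application's database and turn each result row into RDF. Triplify itself is written in PHP and has its own configuration format; the Python sketch below only illustrates the general mapping, using an in-memory SQLite table. The table, the base URI `http://example.org/`, and the convention that the first column identifies the row while the other column names become predicates are assumptions made for this example. The output is serialized as N-Triples.

```python
import sqlite3

BASE = "http://example.org/"  # hypothetical base URI for the published data

# Stand-in for a web application's database table (e.g., blog posts).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE post (id INTEGER PRIMARY KEY, title TEXT, author TEXT)")
conn.executemany(
    "INSERT INTO post (id, title, author) VALUES (?, ?, ?)",
    [(1, "Hello World", "alice"), (2, "Triples from tables", "bob")],
)


def literal(value):
    """Render a value as an N-Triples string literal, escaping special characters."""
    text = str(value)
    # Backslash must be escaped first so later escapes are not doubled.
    for raw, escaped in (("\\", "\\\\"), ('"', '\\"'), ("\n", "\\n"), ("\r", "\\r")):
        text = text.replace(raw, escaped)
    return f'"{text}"'


def triplify(conn, table, query):
    """Turn each result row into triples: the first column identifies the
    subject, and every other column name becomes a predicate."""
    cursor = conn.execute(query)
    columns = [d[0] for d in cursor.description]
    lines = []
    for row in cursor:
        subject = f"<{BASE}{table}/{row[0]}>"
        for column, value in zip(columns[1:], row[1:]):
            if value is None:
                continue  # SQL NULL: no triple is emitted
            predicate = f"<{BASE}vocab/{table}#{column}>"
            lines.append(f"{subject} {predicate} {literal(value)} .")
    return lines


ntriples = triplify(conn, "post", "SELECT id, title, author FROM post ORDER BY id")
print("\n".join(ntriples))
```

Because the SQL query decides which columns are exposed, sensitive fields can simply be left out of the `SELECT`.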

- Microformats are related to the Semantic Web, if only indirectly, through their use of RDF-like semantics expressed in HTML class attributes. Designed for humans first and machines second, microformats are a set of simple, open data formats built upon existing and widely adopted standards. They are closely tied to semantic XHTML, sometimes referred to as "real world semantics" or "lossless XHTML." Microformats are designed to enable more and better structured blogging and web publishing. The Microformats site provides an array of code and tools for producing markup in microformats.

- RDFa in XHTML is a proposed W3C specification for embedding RDF statements in XHTML content. It provides a set of XHTML attributes that augment human-readable content with machine-readable hints. It enables the expression of both simple and more complex datasets, and in particular turns the existing human-visible text and links into machine-readable data without repeating content. The goals and approach of this specification are similar to those of Microformats, but it extends XHTML with attributes that carry RDF semantics rather than reusing CSS class names.

- Exhibit is a lightweight web application framework written in JavaScript that runs in the browser, which you can use with various kinds of data files, including JSON and RDF, to produce knowledge-enhancing "mashups," for example with Google Maps. Exhibit creates interactive user interfaces that display record data sets on maps, timelines, scatter plots, interactive tables, and so on. Exhibit is one of the projects in knowledge management developed by MIT, partly with NSF funding.

- There are several online demos of Exhibit presentations starting here.

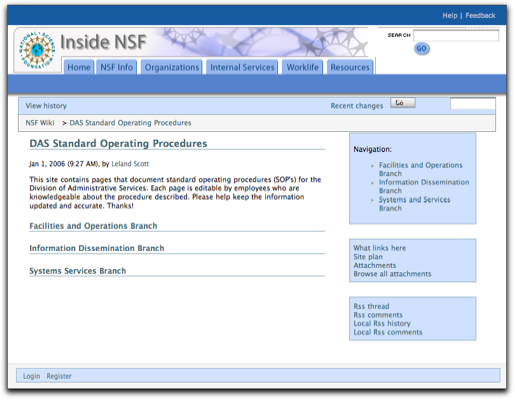

- Semantic MediaWiki (SMW) is a free extension of MediaWiki – the wiki system powering Wikipedia – that helps to search, organise, tag, browse, evaluate, and share the wiki's content. While traditional wikis contain only texts that computers can neither understand nor evaluate, SMW adds semantic annotations that bring the power of the Semantic Web to the wiki.

- SMW+ is Ontoprise's production version of the open source Semantic MediaWiki + Halo Extension software, which was originally developed as part of the 2003-04 Halo project for scientific information discovery. SMW+ makes the process of annotating wiki content much easier by adding a variety of useful interface tools, and it also helps writers research information by using the wiki's built-in ontology browser. SMW+ is designed to enable and enhance knowledge collaboration in organizations. It's available as a free download from SourceForge, or as a reasonably priced bundled version for Windows. Ontoprise also offers service contracts for the product. The impressive, detailed list of features on the Ontoprise website gives a good overview of SMW+ capabilities. These include:

- Semantic Toolbar: Lets users create, inspect and alter semantic annotations in the wiki text without knowing the annotation syntax.

- Advanced Annotation Mode: In this mode, wiki pages are displayed in the same way as they are displayed in the standard view mode. However, users can easily add annotations by simply highlighting the word or passage they want to annotate.

- Ontology Browser: Allows easy navigation through the wiki's ontology without the need to access individual articles. It helps users understand the ontology and keep an overview of it.

- Question Formulation Interface: Normally, making queries against the semantic wiki involves knowing and using a complex syntax. The Question Formulation Interface provides a graphical interface that lets inexperienced users easily compose their own queries.

- Auto completion: This tool greatly simplifies the creation of annotations. With auto completion activated, users don't have to worry about spelling an article's or property's name correctly, because the tool extracts possible completions from the semantic context. For example, it checks which values are possible for a particular attribute and shows only those to the user. This tool is used in the wiki text editor, the Semantic Toolbar, the query interface, and the combined search.

- ARC is an API for LAMP-based (Linux-Apache-MySQL-PHP) websites. Its goal is to reach out to the larger Web developer community, to enable the combination of efforts like microformats with the utility of selected RDF solutions such as agile data storage, run-time model changes, standardized query interfaces, and mashup chaining. ARC tries to keep things simple and flexible. All features are backed by practical use cases. One of the underlying premises of ARC is that RDF is a productivity booster that can make website implementation much faster if it's used pragmatically.
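The microformats idea discussed above is that ordinary class names carry meaning. As a rough illustration, the Python sketch below uses the standard library's `html.parser` to pull the `fn` (formatted name) and `org` properties out of an hCard (`class="vcard"`) fragment. It is a toy, not a conforming microformats parser: it handles only flat markup, assumes every element is explicitly closed, and the sample HTML is invented.

```python
from html.parser import HTMLParser

HCARD_PROPS = {"fn", "org", "email"}  # a small subset of hCard property class names


class HCardParser(HTMLParser):
    """Toy hCard extractor: records the text of elements whose class names
    are hCard properties, but only inside an element with class "vcard".
    Assumes every start tag is explicitly closed (no bare <br> or <img>)."""

    def __init__(self):
        super().__init__()
        self.cards = []      # one dict per vcard found
        self.depth = 0       # open-element depth inside the current vcard (0 = outside)
        self.current = None  # property whose text is being captured, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.depth:
            self.depth += 1
        elif "vcard" in classes:
            self.depth = 1
            self.cards.append({})
        if self.depth:
            for name in classes:
                if name in HCARD_PROPS:
                    self.current = name

    def handle_endtag(self, tag):
        if self.depth:
            self.depth -= 1
            self.current = None

    def handle_data(self, data):
        if self.depth and self.current and data.strip():
            card = self.cards[-1]
            card[self.current] = card.get(self.current, "") + data.strip()


SAMPLE = """
<p>Contact:</p>
<div class="vcard">
  <span class="fn">Jane Doe</span>,
  <span class="org">Example Corp</span>
</div>
"""

parser = HCardParser()
parser.feed(SAMPLE)
parser.close()
print(parser.cards)
```

Text outside the `vcard` element (the "Contact:" paragraph) and between properties (the comma) is ignored, because only elements carrying a known property class are captured.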

ARC includes the following capabilities:

- Parsers for RDF/XML, Turtle, SPARQL + SPOG, Legacy XML, HTML "tag soup," RSS 2.0, and others.

- Serializers for N-Triples, RDF/JSON, RDF/XML, Turtle, and SPOG dumps.

- RDF Storage using MySQL with support for SPARQL queries

- SemHTML RDF extractors for Dublin Core, eRDF, microformats, OpenID, RDFa

- Use of remote stores, allowing the website to query remote SPARQL endpoints as if they were local stores (results are returned as native PHP arrays)

- SPARQLScript, a SPARQL-based scripting language combined with output templating

- Light-weight inferencing

- dooit - Simple to-do lists

- irs - (i)nterlinking of (r)esources with (s)emantics

- Life Science Identifier (LSID) Tester

- OpenVocab - Community-maintained RDF vocabulary workspace

- paggr - smart data + personalized portals

- Scregg, an "Online Semantic Community Framework"

- SIOC Importer for WordPress

- SMOB - Semantic Microblogging

- SPARQL Endpoint for Library of Congress Subject Headings (2,441,494 triples)

- SPARQLBot - a tiny software agent that simplifies access to linked data and the general Semantic Web

- SparqlPress - WordPress enhanced through use of linked data. Spoogle is a demo site for SparqlPress.

- Talis Applications:

- Trice - A Semantic Web framework (still in development).

- Marmoset, one of several Semantic Web tools from the OpenCalais project, is a simple yet powerful tool that makes it easy for publishers to generate and embed metadata in their content in preparation for Yahoo! Search's new open developer platform, SearchMonkey, as well as other metacrawlers and semantic applications. Marmoset uses the OpenCalais web service, which can provide search engine crawlers with rich semantic data to consider when they index a site's pages. Yahoo!'s search engine can analyze this semantic data, provided in Microformats, and other search engines are likely to follow. As a result, users accessing a Marmoset-enhanced website through search engines will get better targeted results.
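RDFa, and ARC's RDFa extractor listed above, read triples out of ordinary XHTML attributes. The Python sketch below handles only a small subset of RDFa 1.0 (`about`, `property`, `content`, `rel` with `href`, and `xmlns:` prefix declarations) using the standard library's `html.parser`. It is not ARC's code or API and ignores most of the specification's processing rules (for example `typeof`, `datatype`, blank nodes, and relative URI resolution); the sample markup and its URIs are made up, and well-formed markup with explicitly closed elements is assumed.

```python
from html.parser import HTMLParser


class MiniRDFaParser(HTMLParser):
    """Toy extractor for a small subset of RDFa 1.0:
      about="URI"            sets the subject for the element and its descendants
      property="p:x"         literal object, from content="..." or the element's text
      rel="p:x" href="URI"   resource object
      xmlns:p="URI"          prefix declaration (assumed to appear before use)
    Assumes well-formed markup in which every element is explicitly closed."""

    def __init__(self):
        super().__init__()
        self.prefixes = {}
        self.subjects = [None]  # inherited subject, one entry per open element
        self.pending = []       # per open element: [s, p, text] awaiting its text, or None
        self.triples = []

    def expand(self, curie):
        """Expand a CURIE like "dc:title" using declared prefixes."""
        prefix, _, local = curie.partition(":")
        return self.prefixes[prefix] + local if prefix in self.prefixes else curie

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        for name, value in a.items():
            if name.startswith("xmlns:") and value:
                self.prefixes[name[len("xmlns:"):]] = value
        subject = a.get("about") or self.subjects[-1]
        self.subjects.append(subject)
        rel, href, prop = a.get("rel"), a.get("href"), a.get("property")
        if subject and rel and href:
            self.triples.append((subject, self.expand(rel), href))
        if subject and prop and a.get("content") is not None:
            self.triples.append((subject, self.expand(prop), a["content"]))
            self.pending.append(None)
        elif subject and prop:
            self.pending.append([subject, self.expand(prop), ""])
        else:
            self.pending.append(None)

    def handle_data(self, data):
        for entry in self.pending:
            if entry is not None:
                entry[2] += data  # text counts toward every open property element

    def handle_endtag(self, tag):
        if not self.pending:
            return  # stray end tag with no matching start tag
        entry = self.pending.pop()
        self.subjects.pop()
        if entry is not None:
            self.triples.append((entry[0], entry[1], entry[2].strip()))


SAMPLE = """
<div xmlns:dc="http://purl.org/dc/elements/1.1/" about="http://example.org/report">
  <h1 property="dc:title">Semantic Web Tools</h1>
  <span property="dc:creator">Jane Doe</span>
  <span property="dc:language" content="en">English</span>
  <a rel="dc:source" href="http://example.org/notes">notes</a>
</div>
"""

parser = MiniRDFaParser()
parser.feed(SAMPLE)
parser.close()
for triple in parser.triples:
    print(triple)
```

Note how the `content` attribute overrides the visible text ("English"), which is how RDFa keeps human-readable and machine-readable values side by side without repeating content.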

Other Resources

Ontology Libraries

One of the best features of ontologies is that they are designed for reuse. It's not clear to me what happens when you encounter a dozen ontologies for "person" or "job", etc., in the ontology libraries on the web, but it's certainly useful that you can search for existing ontologies and bring the objects you want to model into your own ontology. A few ontologies for commonly used objects are now nearly de facto standards:

- Friend-of-a-Friend (FOAF) for people and organizations

- Dublin Core for Publications

- Simple Knowledge Organization System (SKOS) for thesauri

- OWL-Time for time intervals

- SIOC (Semantically-Interlinked Online Communities) is commonly used in conjunction with the FOAF vocabulary for expressing personal profile and social networking information.

- Tags, Places, and other specific topics, a repository of ontologies developed by Richard Newman.

- Protege Ontology Library: This library is part of the Protege Wiki.

- Simile Ontologies: This library includes those developed by MIT as part of the Simile project as well as a list of others that have been used by the project.

- Swoogle: Swoogle is a research project being carried out by the ebiquity research group in the Computer Science and Electrical Engineering Department at the University of Maryland.

- Google: Google can restrict its search to files of type "owl", as this sample search shows.

- OntoSelect Ontology Library: This library has an ontology search system with several unique and innovative features, including use of Wikipedia topics as the basis for one type of search.

- BioPortal: BioPortal is a sophisticated web application for accessing and sharing biomedical ontologies. It features several advanced search and visualization tools, as well as tools for mapping concepts between different ontologies.

- SchemaWeb: This is a comprehensive directory of RDF schemas which, in addition to typical browse-and-search interfaces, also provides an extensive set of web services to be used by software agents for processing RDF data.

- Watson: This link points to Watson's terrific web interface, which is one of the best for searching out ontologies that match your topics of interest. Watson also has a Protege plugin, but I haven't been able to make it work. The plugin, when working, would let a developer search and add classes to their ontology directly from within Protege.

- TONES Ontology Repository: This repository is primarily designed to be a central location for ontologies that might be of use to ontology tools developers for testing purposes.

- Ping the Semantic Web: Developed as a free web service by Zitgist, a company "incubated" by OpenLink, PingtheSemanticWeb (PTSW) is an archive of recently created or updated RDF documents on the web. When an author creates or updates an RDF document, they can notify PTSW by pinging the service with the document's URL. Crawlers and other software agents use PTSW to learn when and where the latest updated RDF documents can be found. This dynamically updated library displays the 25 most recently updated ontologies in real time. Using PTSW's data store, you can retrieve data on all RDF files by namespace or by class, with the option to download the files.

- W3C Semantic Web Activity: This portal can be thought of as the Semantic Web's "Home Page." It brings together a vast amount of primary source documentation of the Semantic Web's languages and other standard specifications, including OWL, RDF, RDFa in XHTML, and SPARQL. In addition, this portal gathers all the major ongoing projects involving the Semantic Web and the groups conducting them. The page also lists a large number of publications and presentations on Semantic Web topics.

- Rich Tags: This paper describes a proposal/project for developing a system that uses semantic tags for enhancing the searchability of web pages. (The proposal sounds similar to the W3C specification for RDFa in XHTML.)

- Projects That Use Protege: This page on the "old" Protege wiki has an extensive list of applications built with Protege.

- Building A Semantic Website: This article is a little old (2001), but has a good overview of the steps and components of building a web application using RDF ontologies.

- Ontology Extraction from Text Based on Linguistic Analysis: This paper describes the concepts and technical approaches behind the OntoLT Protege plug-in.

- Extracting Ontologies from Relational Databases: A detailed, highly technical paper describing the approach adopted and the actual extraction algorithm used by the tool OntoSynt.

- TONES: TONES is a European Union research project into the design and use of Thinking ONtologiES. Begun in 2005, it is scheduled to complete its work in 2008. The TONES website has links to all of the outputs of the project, including software tools and research papers. This PDF contains a 2006 presentation overview of the TONES project.

- RapidOWL: This methodology for developing OWL ontologies is based on the idea of iterative refinement, annotation, and structuring of a knowledge base. A central paradigm of the RapidOWL methodology is its concentration on the smallest possible information chunks. The collaborative aspect comes into play when those information chunks can be selectively added, removed, or annotated with comments or ratings. The design goals of the RapidOWL methodology are to be lightweight and easy to implement, and to support spatially distributed, highly collaborative scenarios. This methodology is implemented in the OntoWiki software project.

- Agile Knowledge Engineering and Semantic Web (AKSW): AKSW has been very prolific in providing the Semantic Web community with eye-opening research projects, which have led to several complete applications, including Powl and OntoWiki, DBpedia, Triplify, and R2D2. Their work has also spawned numerous other public interfaces to the Semantic Web. In addition, the AKSW website publishes a large number of presentations and research papers describing the work leading to their various Semantic Web applications.

- DBpedia information: This useful page collects blog posts about DBpedia, publications about the project, and related websites.

- Linked Data Comes of Age: This very useful article clearly explains what is meant by linked data based on RDF and how it fits into the overarching vision of the Semantic Web.

- Zitgist's Papers and Reports: This is a useful list of resources on subjects relevant to Semantic Web research. The Zitgist Lab site also has a good page of documents on Best Practices for RDF.

- RDF Schemas: This site has a clear explanation of the various "vocabularies" used to develop ontologies: RDF, RDFS, OWL, and Dublin Core. The site also has a terrific list of resources for programmers.

- Nodalities Magazine: Sponsored by Talis, this free, bimonthly online magazine (released in PDF format) tries to bridge the divide between those building the Semantic Web and those interested in applying it to their business requirements. The magazine is supported by the Nodalities blog, podcasts, and Semantic Web development work.

- DERI Papers and Reports: This site contains a large collection of research papers and technical reports produced by DERI International.
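Reusing one of the near de facto standard vocabularies listed at the start of the Ontology Libraries material mostly comes down to using its terms (full URIs) in your own data and linking your own classes to them. The Python sketch below builds a handful of triples that reuse FOAF and Dublin Core terms and prints them as N-Triples. The FOAF, Dublin Core, RDF, and RDFS namespace URIs are the published ones; the `http://example.org/ns#` namespace, the `Researcher` class, and the people and report are hypothetical.

```python
# Namespace URIs of the reused vocabularies (as published by their maintainers).
RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
RDFS = "http://www.w3.org/2000/01/rdf-schema#"
FOAF = "http://xmlns.com/foaf/0.1/"
DC = "http://purl.org/dc/elements/1.1/"
EX = "http://example.org/ns#"  # hypothetical namespace for our own terms


def uri(ns, local):
    """Build an N-Triples URI reference from a namespace and a local name."""
    return f"<{ns}{local}>"


def lit(text):
    """Build an N-Triples plain literal, escaping backslashes and quotes."""
    return '"' + text.replace("\\", "\\\\").replace('"', '\\"') + '"'


triples = [
    # Our own class, declared as a specialization of the reused foaf:Person.
    (uri(EX, "Researcher"), uri(RDFS, "subClassOf"), uri(FOAF, "Person")),
    (uri(EX, "jane"), uri(RDF, "type"), uri(EX, "Researcher")),
    (uri(EX, "jane"), uri(FOAF, "name"), lit("Jane Doe")),
    (uri(EX, "jane"), uri(FOAF, "knows"), uri(EX, "john")),
    # A publication described with Dublin Core element terms.
    (uri(EX, "report1"), uri(DC, "title"), lit("Survey of Semantic Web Tools")),
    (uri(EX, "report1"), uri(DC, "creator"), uri(EX, "jane")),
]

ntriples = "\n".join(f"{s} {p} {o} ." for s, p, o in triples)
print(ntriples)
```

Because the predicates are the shared FOAF and Dublin Core URIs, any tool that already understands those vocabularies can interpret this data, and an RDFS reasoner would also conclude that `ex:jane` is a `foaf:Person`.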

BBN is a technology company with a broad range of expertise, services, and products, including support for Semantic Web application development. As an indication of the company's impressive expertise, BBN was the prime contractor for DARPA (Defense Advanced Research Projects Agency) in the development of DAML (DARPA Agent Markup Language), which in turn led to the development of OWL. BBN also provides the Asio Tool Suite for third-party development and the open source Snoggle and Parliament tools.

BBN is a technology company with a broad range of expertise, services, and products—including support for Semantic Web application development. As an indication of the impressive expertise of this company, BBN was the prime contractor for DARPA (Defense Advanced Research Projects Agency) in development of DAML (DARPA Agent Markup Language), which then led to their development of OWL. BBN also provides the Asio Tool Suite for third-party development and the open source Snoogle and Parliament tools. Cycorp is a leading provider of semantic technologies that bring intelligence and common sense reasoning to a wide variety of software applications. The Cyc software combines ontologies and knowledge bases with a powerful reasoning engine and natural language interfaces to enable the development of novel knowledge-intensive applications.

Cycorp is a leading provider of semantic technologies that bring intelligence and common sense reasoning to a wide variety of software applications. The Cyc software combines ontologies and knowledge bases with a powerful reasoning engine and natural language interfaces to enable the development of novel knowledge-intensive applications. Clark & Parsia is a small R&D firm—specializing in Semantic Web and advanced systems—based in Washington, DC. They have expertise in a range of semantic-web technologies, including OWL, RDF, reasoning at scale, and ontology development. They offer commercial support for Pellet, a best-of-breed Open Source OWL DL reasoner in Java, and related systems.

Clark & Parsia is a small R&D firm—specializing in Semantic Web and advanced systems—based in Washington, DC. They have expertise in a range of semantic-web technologies, including OWL, RDF, reasoning at scale, and ontology development. They offer commercial support for Pellet, a best-of-breed Open Source OWL DL reasoner in Java, and related systems. This company helps companies (medium/large with 1,000 to 10,000 employees) migrate to semantically-based SOAs (Service Oriented Architectures).

This company helps companies (medium/large with 1,000 to 10,000 employees) migrate to semantically-based SOAs (Service Oriented Architectures). Zitgist has a number of interesting products for viewing and querying the Semantic Web, as well as offering services for ontology development, content conversion, and web services. They also provide several open-source products for both consumer and corporate use in furthering use of the Semantic Web.

Zitgist has a number of interesting products for viewing and querying the Semantic Web, as well as offering services for ontology development, content conversion, and web services. They also provide several open-source products for both consumer and corporate use in furthering use of the Semantic Web. The Semantic Web Company (SWC), based in Vienna, Austria, provides companies, institutions and organizations with professional services related to the Semantic Web, semantic technologies and Social Software. They provide services in consulting, education, and project management, among others.

The Semantic Web Company (SWC), based in Vienna, Austria, provides companies, institutions and organizations with professional services related to the Semantic Web, semantic technologies and Social Software. They provide services in consulting, education, and project management, among others.

Talis has developed its own application development platform—the Talis Platform—and also builds Semantic Web applications for other organizations. To date, Talis' applications have been geared to meeting the needs of libraries and academic institutions.

Semsol offers a wide range of Semantic Web-related services, from consulting and data modeling to interface design and production. Semsol is a pioneer in bringing Semantic Web technologies to widely deployed server and database environments. Semsol is the company behind the open-source tool ARC, as well as several of the applications built on top of ARC, including Trice, SPARQLBot, and paggr (referenced earlier).

Cortex's software platform and consulting business is based on their Competitiva system. Cortex’s technology proposes to mine unstructured data on the Web, using Competitiva's intelligent system to automatically convert pages and documents to a semantic format (i.e., RDF). Cortex has an R&D team working to bridge the Semantic Web gap by automatically enriching text with semantic content for themselves and their customers.

A Close-Up Look At Today’s Web Browsers: Comparing Firefox, IE 7, Opera, Safari

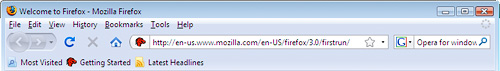

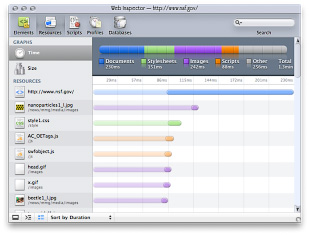

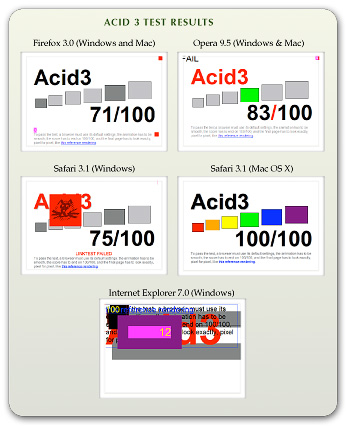

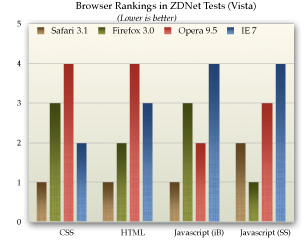

My, we've come a long way in browser choices since 2005, haven't we? It's been a very heady time for programmers who dabble in the lingua franca of the World Wide Web: HTML, JavaScript, Cascading Style Sheets, the Document Object Model, and XML/XSLT. Together, this collection of technologies, boosted by a technique with the letter-soup name "XMLHttpRequest," became known as "Ajax." Ajax spawned an avalanche of cool, useful, and powerful new web applications that are today beginning to successfully challenge traditional desktop software like Microsoft Word and Excel. As good as vanguard products like Google's Maps, Gmail, Documents, and Calendar apps are, one only has to peek at what Apple has accomplished with its new MobileMe web apps to see how much like desktop applications web software can be in 2008.

That this overwhelming trend toward advanced, desktop-like applications has happened at all is the result of the efforts of determined developers from the Mozilla project, which rose from the ashes of Netscape's demise to create the small, light, powerful and popular Firefox browser. The activity of the Mozilla group spurred innovation from other browser makers and eventually forced a trend towards open standards that made the emergence of Ajax possible.

This article starts with a brief history of web browsers and then jumps into a look at the feature set of the four primary "modern" web browsers in 2008. The comparison of browser features begins by listing the core features that all these browsers have in common. The bulk of the article lists in detail "special features" of each browser and each browser's good and bad points, as they relate to the core browser characteristics. Following that, I present some recent data on the comparative performance of these browsers. The article concludes with recommendations I would make to organizations interested in making the switch from IE6 in 2008.

- Web Browsers in 2008: A Brief History

- Comparison of Browser Features

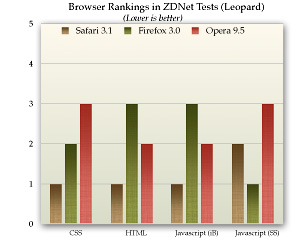

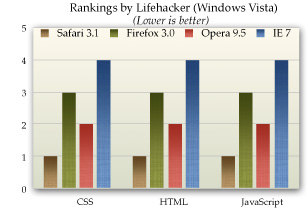

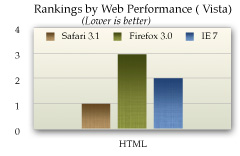

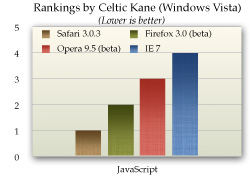

- Browser Performance

- Conclusions

- Bookmarks for Further Reading

Web Browsers in 2008: A Brief History

In 2008, web designers and programmers can finally see the light at the end of the very long, dark tunnel that began with the first browser wars of the late 1990s. That war introduced "browser incompatibility," as Netscape and Microsoft each struggled to establish their own, incompatible standards. At that time, the standards approved by the World Wide Web Consortium (w3c) were somewhat skimpy and behind the times in terms of what those companies wanted to do.

It wasn't long before a standard for JavaScript, which Netscape had introduced a couple of years before, was approved (as ECMAScript, by the standards body Ecma International rather than the w3c), and the w3c approved CSS Level 2, which was to be a major advance in the "designability" of web pages. CSS 2 promised an end to the ubiquitous use of "font" tags, invisible graphics, and HTML tables on which designers relied to convert their ideas, typically developed using visual design tools such as Photoshop, to HTML. However, those new standards came too late, since Microsoft was making aggressive use of its monopoly on corporate desktops to promote Internet Explorer at the expense of Netscape. That effort, of course, eventually succeeded, and Microsoft was found guilty of antitrust violations (though never effectively punished for them).

Even though IE eventually garnered a monopoly in corporate browser usage equal to Windows' monopoly as an operating system, web programmers and designers who developed content for the general public were still obliged to support two completely different and incompatible "standards," neither of which was truly standards-compliant. The dual nature of the browser market caused programmers to shy away from JavaScript and CSS entirely, since it was too much of an effort to deploy them in a way that would render well on both browsers. Unfortunately, this meant that the state of the art on the web remained stuck in 1998 until just the last couple of years, when Mozilla's Firefox and Apple's Safari browsers began slowly whittling away at IE's dominance.

Like earlier versions of Internet Explorer, IE 6, introduced in 2001 as part of Windows XP, maintained its own set of proprietary standards that largely ignored the leadership of standards bodies like the w3c. At that time, Microsoft could afford to do so, since there was virtually no competition left. However, by 2004, Firefox had emerged from the open-source Mozilla group (which evolved from Netscape's decision to open-source the Netscape browser code) as a very interesting, lightweight browser that prided itself on close adherence to w3c standards.

Meanwhile, in Europe, the Opera browser was moving in the same direction as Firefox, toward full implementation of w3c standards such as the DOM and CSS 2. In 2005, Opera became completely free, where previously it had relied on advertising shown to non-paying users as a source of revenue. Since then, Opera has remained a significant player, despite its very small market share outside of Europe.

In 2003, Apple introduced Safari 1.0 for Mac OS X, and shortly thereafter Microsoft ceased support of Internet Explorer for the Mac platform. Safari was based on the open-source code used for the Linux browser Konqueror, and in 2005 Apple released the core Safari code--its "rendering engine"--as open source through establishment of the WebKit project. Since then, the WebKit team has made rapid progress in adopting w3c standards and bringing its code base up to the state-of-the-art as defined by those standards. Safari is the dominant browser on Mac OS X, with Firefox a strong second, and the increasing market share of Mac OS X in the last couple of years has resulted in corresponding increases in the market share of Safari. Now that Safari is available for Windows and is being used for Apple's iPhone platform, Safari's market share will likely continue to rise in coming years.

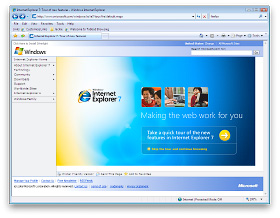

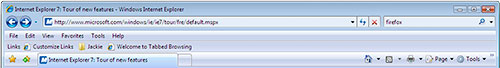

In late 2006, Microsoft finally responded to the growing competition from Firefox and Safari and released Internet Explorer 7.0, which also shipped as the default browser in Windows Vista. Although IE 7 still lags significantly behind the other browsers in adopting open standards, it makes important improvements over IE 6. And the early beta releases of IE 8, accompanied by assurances from Microsoft's engineers, suggest that IE 8 will go considerably further toward standards compliance.

It is the convergence of these trends that is causing that glow at the end of the tunnel at last. With the demise of IE 6 (whose market share is rapidly collapsing), the final major remnant of the ugly browser war of 1998-2000 will be a thing of the past. Since Microsoft appears serious about getting IE 8 to market in less than the more than five years that elapsed between IE 6 and IE 7, web developers can be hopeful that their use of JavaScript, CSS, and HTML will no longer be a struggle to find the right "hack" to accommodate all the browser choices out there. At that moment, the web will finally be ready to evolve into the platform that Java aspired to, but never managed to become: a platform on which developers can build applications that are agnostic both of the user's client and of their operating system.

That outcome is a win-win for everyone… except, perhaps, Microsoft, since it will bring to fruition the open Internet it has tried so long to keep at bay.

The next section of this report will look in detail at the feature set of the four primary "modern" web browsers in 2008, by market share. Following that, the report presents some recent data on comparative performance for these browsers, and finally I conclude with a brief set of recommendations. The browsers have all been tested primarily on a Windows Vista Ultimate platform, and the recommendations are geared to organizations that have been relying on IE 6 or IE 7 as their default browser. Safari, Firefox, and Opera have also been tested on a Mac OS X 10.5 "Leopard" system.

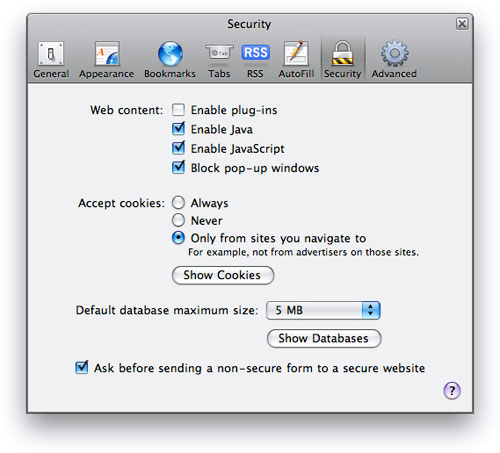

Comparison of Browser Features

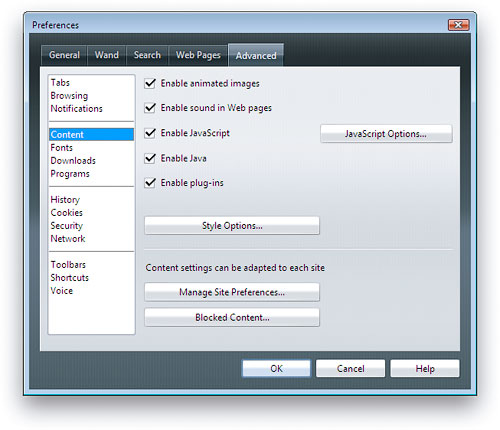

This section looks in detail at the many features that both bind and distinguish the four browsers included in this study: Firefox, Internet Explorer 7, Opera, and Safari.

The first part of this section pulls together all of the features these four browsers have in common. This set of features can be considered a baseline that defines what a "modern" browser can do. Naturally, some of the browsers are more "modern" than others, so they go far beyond these features in distinguishing themselves from the others.

For each browser reviewed, the write-up begins with a list of the browser's "Special Features"--that is, its features that are unique or especially distinguishing. Following that, each browser's features are compared with those of the other browsers in lists of "Good Points" and "Bad Points." Each item in these lists is categorized using the set of "Baseline Features" below.

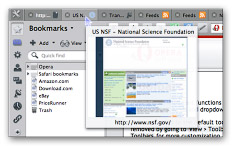

Baseline Features

- Ability to define page colors and page fonts.

- Ability to set personal style sheets.

- Ability to easily resize fonts.

- Ability to prevent automatic loading of page images.

- Ability to set bookmarks for visited web pages.

- Ability to organize bookmarks into folders.

- Ability to arrange bookmarks in a special toolbar. Toolbar can contain folders of bookmarks as well as individual links.

- Ability to import and export bookmarks as HTML.

- All of the tested browsers support use of a proxy server and use of an automated configuration file on the network for applying browser settings.

- Ability to define proxy and SSL (secure socket layer) settings, as well as supported HTTP protocols.

- Ability to identify errors (JavaScript at a minimum) when loading a web page.

- No common features.

- Ability to view browser history by date and to sort history items.

- Ability to search stored history items.

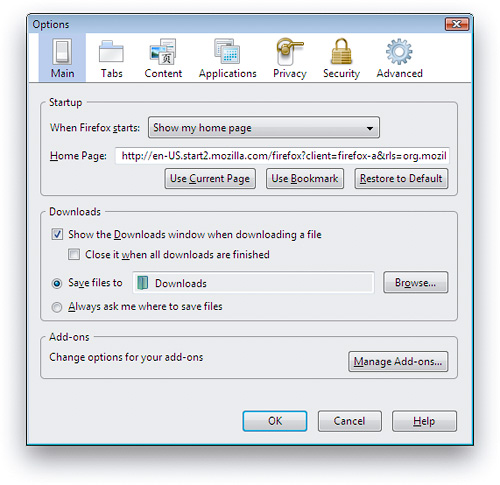

- Ability to set the home page and define basics about what the browser shows when opened.

- Ability to view page HTML source.

- Ability to define basic settings for cookies.

- Ability to define how long history items are stored, or whether they're stored at all.

- Ability to subscribe to and view RSS feeds.

- Pages that contain RSS feed information are identified with a special symbol or option.

- Web search field located in the browser toolbar.

- Web search options include some basic customization.
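Browsers generally decide whether to show that feed symbol by looking for "autodiscovery" links in a page's `<head>`: `<link rel="alternate">` elements whose `type` is `application/rss+xml` (or `application/atom+xml` for Atom feeds). The Python sketch below illustrates that convention using only the standard library. It is not any browser's actual code, and the names `FeedLinkFinder` and `find_feeds` are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# MIME types conventionally used in feed autodiscovery links.
FEED_TYPES = {"application/rss+xml", "application/atom+xml"}


class FeedLinkFinder(HTMLParser):
    """Hypothetical helper: collects feeds advertised via <link rel="alternate">."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.feeds = []  # list of (title, absolute_url) tuples

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag != "link":
            return
        # Attributes without a value arrive as None; normalize to "".
        a = {name: (value or "") for name, value in attrs}
        rels = a.get("rel", "").lower().split()
        mime = a.get("type", "").lower().strip()
        if "alternate" in rels and mime in FEED_TYPES and a.get("href"):
            # Resolve relative hrefs against the page's own URL.
            self.feeds.append((a.get("title", ""), urljoin(self.base_url, a["href"])))


def find_feeds(html, base_url):
    """Return (title, url) pairs for every feed a page advertises."""
    finder = FeedLinkFinder(base_url)
    finder.feed(html)
    finder.close()
    return finder.feeds
```

For example, `find_feeds('<link rel="alternate" type="application/rss+xml" title="News" href="/rss.xml">', "http://example.com/blog/")` yields one entry pointing at `http://example.com/rss.xml`, while ordinary stylesheet links are ignored.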
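As for importing and exporting bookmarks as HTML, those files generally follow the de facto Netscape bookmark file format: a `<!DOCTYPE NETSCAPE-Bookmark-file-1>` header followed by nested `<DL>` lists, where `<DT><H3>` marks a folder and `<DT><A HREF>` marks a link. Here is a minimal Python sketch of an exporter, assuming a simple in-memory tree of `(name, url)` links and `(name, [children])` folders. `export_bookmarks` is a hypothetical name, and real browser exports add extra attributes (such as dates) that are omitted here.

```python
from html import escape


def export_bookmarks(tree, title="Bookmarks"):
    """Hypothetical exporter. `tree` is a list whose items are either
    (name, url) for a link or (name, [children]) for a folder."""
    lines = [
        "<!DOCTYPE NETSCAPE-Bookmark-file-1>",
        '<META HTTP-EQUIV="Content-Type" CONTENT="text/html; charset=UTF-8">',
        f"<TITLE>{escape(title)}</TITLE>",
        f"<H1>{escape(title)}</H1>",
    ]

    def emit(items, depth):
        pad = "    " * depth
        lines.append(pad + "<DL><p>")
        for name, value in items:
            if isinstance(value, list):
                # A folder: a heading followed by its own nested list.
                lines.append(f"{pad}    <DT><H3>{escape(name)}</H3>")
                emit(value, depth + 1)
            else:
                # A link: escape the URL so characters like & stay valid HTML.
                lines.append(f'{pad}    <DT><A HREF="{escape(value)}">{escape(name)}</A>')
        lines.append(pad + "</DL><p>")

    emit(tree, 0)
    return "\n".join(lines) + "\n"
```

Calling `export_bookmarks([("Search", [("Google", "http://www.google.com/")]), ("W3C", "http://www.w3.org/")])` produces a file with one folder containing one link, plus a top-level link, which is the kind of structure the browsers above can import.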