The “Bloated” Federal Bureaucracy:

A Lie That’s Either Malicious Ignorance Or Deliberate Malice

One of the truly bewildering traits of human beings is their ability—and even carefree willingness—to ignore facts that conflict with their current worldview. I touched on this topic in an earlier article, and find it manifested in numerous ways in this most viciously anti-rational political climate.

This article looks at data for a timely topic that's a favorite target for fact distortion: Has the U.S. Federal Government workforce grown too large, or not?

The "Tea Party" politicians, in particular, appear to be masters at the art of selling people willful ignorance, perhaps partly because they themselves drink from that cup religiously. Among the false ideas they consider common knowledge is the idea that the Federal workforce needs to be cut—presumably because it, like the Government as a whole, has grown too big. While they're at it, they'd also like to make sure Federal employees don't have a benefits package better than members of their own congregation do.

Recently, a Republican from Texas, Rep. Kevin Brady, submitted a legislative proposal to cut the Federal workforce by 10 percent. According to a Washington Post article, Brady's reasoning goes like this:

There's not a business in America that's survived this recession without right-sizing its workforce, without having to become more productive with fewer workers. The federal government can't be the exception. We're going to have to find a way to serve our constituents and our taxpayers better and quicker and more accurately with fewer workers. I'm convinced we can do it and we don't have a choice.

Brady's short statement, starting with its overall premise, contains several fallacies, and on Mars we find it alarming to realize that this guy is chairman of the Joint Economic Committee and a senior member of the House Ways and Means Committee. Where I come from, those are pretty big britches! When someone with authority over such enormously important Government functions gets his facts wrong, one has to wonder whether he is deliberately lying for political reasons, or whether he's maliciously failing to determine the facts and instead shaping them to fit his policy goals.

Joint Economic Committee

The Joint Economic Committee is one of four standing joint committees of the U.S. Congress. The committee was established as a part of the Employment Act of 1946, which deemed the committee responsible for reporting the current economic condition of the United States and for making suggestions for improvement to the economy.

On Mars, such behavior is almost unheard of. When I first revealed it, my fellow Martians had trouble believing that sentient beings could behave this way. And even if someone were to deliberately distort reality, surely Earth's legal systems would be constructed to punish the act.

Apparently, however, this behavior is not only tolerated, it's rewarded by the mere awareness that it's tolerated. After all, if a lie—or deliberate ignorance—by someone in authority isn't challenged, it clearly achieves its purpose. And achieving one's purpose obviously counts as a success. (On Mars, we believe that this is one of the perverse lessons Americans learned from President Richard Nixon's downfall: If you're going to lie, cheat, embezzle, or otherwise commit illegal acts, be sure you aren't caught doing so.)

So, what fallacies does Mr. Brady disseminate in his statement? Here are two obvious ones:

- "There's not a business in America that's survived this recession without right-sizing its workforce." How can this be true? Clearly, as has always been the case, the economic downturn produces not only losers, but winners as well. Yes, the losers will have had to lay off workers, hence the rise in unemployment. But companies in growth sectors will not have done so, and they may even have continued to expand. In this downturn, for example, employment in the oil mining industry increased from 143,000 to 159,000 from 2007 to 2009. A better example is the computer services sector, where employers added 400,000 jobs.

- "The federal government can't be the exception." Someone like Brady who is in charge of National economic policy undoubtedly understands that reducing employment in the Federal sector is never a good thing during a period of slow economic growth. Even economists who aren't sold on Keynesian economics realize that the Federal Government should remain a stable economic player during times like this. Stating otherwise must be a deliberate deception.

Keynesian Economics.

A macroeconomic theory based on the ideas of 20th century British economist John Maynard Keynes. This theory argues that private sector investment decisions periodically lead to inefficiencies that cause economic output to fall and unemployment to rise. It therefore advocates active policy responses by the public sector, including an expansion of the money supply by the central bank and increased spending by the government, in order to stabilize output over this business cycle.

That leaves the notion that the U.S. Government must "right-size" its workforce in order to "become more productive with fewer workers." First of all, what does "right-sizing" a workforce mean? If you read Wikipedia's article on the subject, you come away believing that "right-sizing" is merely a euphemism for "layoffs" or "downsizing."

Some dictionaries, on the other hand, suggest there's a nuance to the term that differentiates it from "layoffs." Webster's, for example, defines the term as follows:

To reduce (as a workforce) to an optimal size

"Right-sizing" (or "rightsizing") is a term first uttered on Earth in 1989, when it was really just jargon to justify the downsizing that became de rigueur during the waning years of the first Bush administration. One of the main reasons companies downsize is that their workforce has bulged after a major merger with or acquisition of another company. And as you may recall, starting in the 1980s corporations did a heckuva lot of merging and acquiring. For a while, even "rollups" were all the rage on Wall Street.

Rollup.

A Rollup (also "Roll-up" or "Roll up") is a technique used by investors (commonly private equity firms) where multiple small companies in the same market are acquired and merged. The principal aim of a rollup is to reduce costs through economies of scale.

After a merger or major acquisition, it's pretty standard to eliminate inherited workers who do redundant tasks, or those who have a record of poor performance. Companies who downsize for any other reason do so because they're performing poorly, as measured by revenue and profits. In this case, companies downsize to reduce their production costs and make their products or services more competitive.

So, there are two big problems with even suggesting that the Federal Government engage in "right-sizing:"

- Governments are nonprofit institutions, and therefore notions such as competitiveness, profits, and product pricing are meaningless.

- Governments don't merge with or acquire other governments. Well, unless you're talking about conquests, which surely is a special case. Occasionally, governments do split up... for example, when a U.S. State secedes from the Union, or when a country declares its independence from another. In this latter case, of course, the split governments will find the need to "upsize" their workforces rather than downsize them.

Ah, but what if you believe, as lawmakers such as Brady do, that the cost of the Federal workforce is a major reason why the Federal deficit is ballooning? Well, then I suppose the suggestion does make sense.

As it turns out—and here I'm finally getting to the crux of my argument—the Federal workforce has not been a contributor to the growth in Federal spending. If you're picking up an axe to cut the budget, hacking at the workforce is not only missing the target, but it will actually increase costs in the long run.

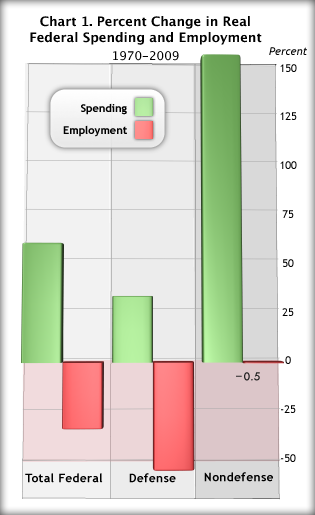

What evidence do I have to support such assertions? Consider the following facts for the 40-year period from 1970 to 2009, as illustrated in the accompanying charts:

- Real (adjusted for inflation) Federal consumption spending increased 56 percent, while total Federal employment fell about 30 percent. Most of the reduction in Federal employment came in the defense sector, but the number of nondefense employees stayed basically flat during this 40-year period while nondefense spending shot up 150% (Chart 1). (Note: The measure of spending shown in chart 1 includes only "current expenditures," which basically counts spending required "to keep the trains running"—that is, to carry out basic agency missions.)

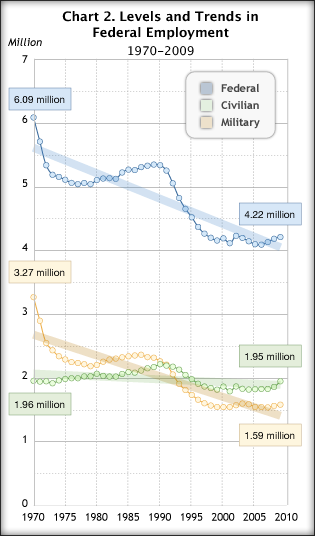

- From 1970 to 2009, total Federal employment shrank from 6.1 million to 4.2 million, again mostly in defense. The nondefense Federal workforce was 1.96 million in 1970, and 1.95 million in 2009 (Chart 2).

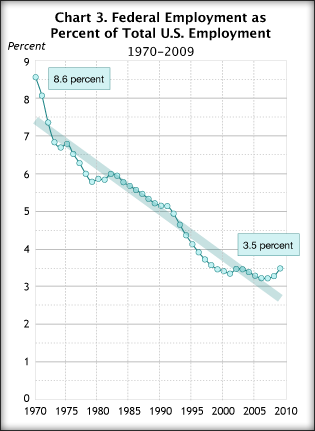

- During these 40 years, Federal employment as a percentage of total U.S. employment dropped from 8.6 percent to 3.5 percent (Chart 3).
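For readers who want to check the arithmetic behind these employment figures, here is a minimal sketch in Python. It uses only the numbers quoted above. The defense figures are an assumption on my part: they are computed as a simple residual (total minus nondefense) rather than read from the charts, so treat them as approximate.

```python
def pct_change(start, end):
    """Percent change from start to end."""
    return (end - start) / start * 100.0

# Federal employment in millions, as quoted in the text above.
total_1970, total_2009 = 6.1, 4.2
nondefense_1970, nondefense_2009 = 1.96, 1.95

# Assumption: defense employment is the residual (total minus nondefense).
defense_1970 = total_1970 - nondefense_1970
defense_2009 = total_2009 - nondefense_2009

total_change = pct_change(total_1970, total_2009)
nondefense_change = pct_change(nondefense_1970, nondefense_2009)
defense_change = pct_change(defense_1970, defense_2009)

print(f"Total Federal employment: {total_change:.1f}%")
print(f"Nondefense employment:    {nondefense_change:.1f}%")
print(f"Defense (residual):       {defense_change:.1f}%")
```

Under these inputs, the total decline works out to roughly 31 percent, in line with the "about 30 percent" cited earlier, while nondefense employment is essentially unchanged.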

These facts make it obvious that the Federal Government has been engaging in "right-sizing" for a very long time. How could Federal employees not be a great deal more efficient and productive today if their numbers haven't changed in the last 40 years, while their workload and output have doubled?

Despite continuous calls for less Federal "intrusion" into taxpayers' lives, taxpayers have simultaneously been demanding and expecting more and more of their National Government. As anyone who has been even marginally observant knows, Federal responsibilities have expanded greatly since 1970. Among its new and expanded assignments are:

- Occupational Safety and Health. The Occupational Safety and Health Administration was created in 1970 to "ensure that employers provide employees with an environment free from recognized hazards, such as exposure to toxic chemicals, excessive noise levels, mechanical dangers, heat or cold stress, or unsanitary conditions."

- Environmental Protection. The Environmental Protection Agency was also created in 1970 and charged with "protecting human health and the environment, by writing and enforcing regulations based on laws passed by Congress."

- National Security. The agencies responsible for ensuring the safety of U.S. citizens have increased employment substantially during this period, especially since the September 11, 2001, attacks by radical Islamic terrorists. The attacks resulted in a reorganization of security functions from various agencies into a new agency, the Department of Homeland Security. The number of Federal security personnel at U.S. airports has also increased, of course.

- Natural Resource Management. In 1973, Congress passed the Endangered Species Act, which requires Federal agencies to ensure that their activities "do not jeopardize the existence of any endangered or threatened species of plant or animal or result in the destruction or deterioration of critical habitat of such species."

- National Park System. Numerous Acts and Executive Orders have expanded the responsibilities of the National Park Service since 1970, including the General Authorities Act of 1970, the National Parks and Recreation Act of 1978, and the Alaska National Interest Lands Conservation Act of 1980.

- Drug Abuse. The Comprehensive Drug Abuse Prevention and Control Act of 1970 expanded and optimized the Federal Government's ability to control use of illegal drugs. Among other components, the legislation included the Controlled Substances Act, which established drug "schedules," into which various substances would be classified and for which misuse penalties would be defined.

- Many other functions, including Immigration Control (yes, we have been spending more money and hiring more people for this), Education, Technology Infrastructure, and Information Dissemination.

Regarding Information Dissemination, consider the huge cost and workload involved in building all the great Federal websites we now have, including the many channels for obtaining customized information from Federal databases never before available.

For example, the charts and data shown in this article come from the Bureau of Economic Analysis (BEA), the Commerce Department agency responsible for collecting and analyzing statistics on the U.S. economy. BEA is the organization that produces estimates of Gross Domestic Product, personal income, and much more. Their data is now available through an easy-to-use, customizable web interface that generates data in a variety of formats, including tab-delimited, which can be imported into spreadsheet software.

Yes, the Government does much less printing now than it used to, but as one with first-hand knowledge of Federal publishing, let me assure you it costs much more now to publish on the web than printing ever did. For one thing, many agencies were encouraged to—and did—charge fees for printed publications. Obviously, they collect nothing from use of their websites. For another, nearly all Federal printed documents were required by law to use only black ink, or black and one other color. A tiny fraction used the four-color process that's standard for commercial printing.

However, Federal web publishing has been under no such constraints, and so agencies have spent as freely as they thought necessary to make splashy, flashy, and sexy websites that could have been, and often are, designed by a Madison Avenue ad firm. Such sites look nice, but besides being expensive they too often make usability a secondary consideration to appearance. Where once a small agency might spend $500,000 a year on printing, it's now common for it to spend $1 million or $2 million on its website, while still printing some material. (Note: BEA remains a big exception to the norm. Its website eschews expensive graphics and other flashy flourishes, and is mostly easy-to-navigate textual content.)

OK, so it's undeniable that Federal employment has shrunk in the last 40 years, while spending has grown. Doesn't that suggest that Federal employees are much more productive than they were 40 years ago?

Given the data in Chart 1, it's clear that productivity in the Federal sector has risen considerably. However, something must be missing, because it's nearly impossible for an organization to boost output by 50% while cutting its workforce by 30%. In fact, if you lay these data beside analogous ones for the private sector, it appears that the Feds have been using some secret productivity weapon that they should now share with the private sector, so that it can downsize as the Feds have done. (Oops... no, that would cause a huge recession, actually.)

Since 1970, output of private industry has shot up 200%, but this was accompanied by a 70% increase in employment (Chart 4). This means that the gain in private output required 70 percent more workers over this period. If you apply that relationship to the public sector, Federal employment should have increased 15-20 percent to support its 50% growth in output over these 40 years.
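One way to read the arithmetic in that estimate is as a simple proportional scaling: take the ratio of private employment growth to private output growth, and apply it to Federal output growth. The sketch below assumes that linear relationship, which is my reading of the calculation rather than a formal model, and the function name is purely illustrative. It runs the numbers for both the rounded 50% figure and the 56 percent figure cited earlier.

```python
def implied_employment_growth(output_growth_pct,
                              ref_output_growth_pct,
                              ref_employment_growth_pct):
    """Scale a reference sector's employment-to-output growth ratio
    to another sector's output growth (assumes simple proportionality)."""
    ratio = ref_employment_growth_pct / ref_output_growth_pct
    return output_growth_pct * ratio

# Private-sector figures quoted in the text (percent growth since 1970).
PRIVATE_OUTPUT_GROWTH = 200.0
PRIVATE_EMPLOYMENT_GROWTH = 70.0

# Federal output growth: the rounded 50% and the 56 percent cited earlier.
implied_at_50 = implied_employment_growth(
    50.0, PRIVATE_OUTPUT_GROWTH, PRIVATE_EMPLOYMENT_GROWTH)
implied_at_56 = implied_employment_growth(
    56.0, PRIVATE_OUTPUT_GROWTH, PRIVATE_EMPLOYMENT_GROWTH)

print(f"Implied Federal employment growth at 50% output growth: {implied_at_50:.1f}%")
print(f"Implied Federal employment growth at 56% output growth: {implied_at_56:.1f}%")
```

Both results fall inside the 15-20 percent range given above, which is a long way from the roughly 30 percent decline that the Federal employment data actually show.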

So how did they do it? How could the Federal sector manage to increase output by 50% while actually reducing employment? The truth is, they couldn't have done, despite what the data show. For even though the data are correct for what they do measure, they are missing a big component of the puzzle, as you'll see.

The Missing Employment Data

Since Jimmy Carter came to office in 1977, every President except for George H.W. Bush has called for either cuts in or freezes on Federal hiring. This explains why Federal employment has remained flat for 40 years... it has been continuously downsized. (Note: The drops in defense spending and employment reflect both the end of the Draft and the end of the Cold War.)

Given this history, today's calls for cuts in Federal employment are either dishonest and politically motivated, or they are misguided and made by ignorant politicians who have no business being in charge of the Nation's business.

The ugly truth is that for every Federal worker who hasn't been hired since 1970, one or two private-sector employees have been. For most of these 40 years, both the Executive and Legislative branches of the U.S. Government, whether led by Republicans or Democrats, have bought into the notion that "contracting out" (or "outsourcing") Federal jobs was a good way of stretching precious Federal dollars.

Contracting Out

In the context of the public sector, contracting out refers to the act of transferring work previously handled by public employees to employees employed by private contractors. Over time, through workforce attrition, this has the effect of replacing public jobs with private ones.

But this is simply not the case, for two simple reasons, which I plan to take up in a future article on Federal contracting:

- Inefficiency. Outsourcing to private companies is often much more expensive than retaining work in-house. Briefly, this is the result of:

  - Additional Overhead. Most large contracts are subcontracted, and even subcontracts are subcontracted. Each layer adds to the overhead cost of every dollar spent.

  - Inflexibility. Getting rid of bad Federal contractors can be as difficult as getting rid of a bad Federal employee.

  - Incompetence or dishonesty. Scrutiny of the background and expertise of companies hired by the government is much less exacting than that of potential employees. Too often, companies overstate their qualifications for a particular type of work, overstate costs, or both. Even when the private enterprise is at fault, the government agency loses time as work must be redone, and typically must shell out additional funds for the privilege.

  - Lack of continuity. When a company is newly hired to assume an existing task, it's far too easy for them to claim that the outgoing contractor had been "doing things wrong." Without continuity, Federal managers can face unmeasured duplication of costs merely because the new contractor has a different way of doing things. Sometimes a change is warranted, but too often it is not. This kind of waste can also occur when Federal managers change, but that happens far less frequently.

- Conflict of interest. Private contractors are motivated by profit rather than by public service, and therefore should never be in charge of making policy or spending decisions that affect taxpayers. This is a clear conflict of interest situation, where the private company's goal is to make as much money as possible, and the Government's goal is to serve the public as best it can within its limited means.

Even if you don't see it the way we do on Mars, you will surely find it strange—and disturbing—that the Federal Government has absolutely no idea how many employees it has in the private sector.

If you walk through any Federal office today, you won't be able to tell which employees are contractors and which are on the Federal payroll. For all appearances, everyone there is a Federal employee. Yet they're not, and nobody keeps tabs on the ones who aren't, except to make sure they have the appropriate network accounts, desks, computers, and security badges. The Labor Department, which is responsible for collecting the Nation's employment data, has never included this information as part of its surveys.

Among other management consequences of this irresponsible lack of data is that it's impossible to know whether the Federal workforce is "right-sized" or not. It's also impossible to measure relative employment costs, or to compare productivity for the two groups.

And why do we not have these necessary data on private contractors?

First, the Paperwork Reduction Act of 1980—one of a series of misguided deregulation moves in the 1980s designed to get the Federal Government "off the backs" of private companies—made it extremely difficult for Federal agencies to add new questions to their existing surveys. And second, the lack of knowledge has been a mutually beneficial "wink" among cash-strapped Federal managers, cash-hungry private companies, and dishonest/ignorant legislators who want to claim they're cutting costs by keeping a lid on Federal employment.

Only in the last few years has the superiority of outsourcing public jobs been openly questioned, and that's been spurred mainly by concerns about the propriety and cost of contracting by the State and Defense Departments to support the War in Iraq. Yet all through the George W. Bush years, Federal agencies were under extreme pressure to "privatize" or "contract-out" any functions that weren't "inherently governmental in nature."

Inherently Governmental

More-or-less officially, an “inherently governmental function” is one that, as a matter of law and policy, must be performed by federal government employees and cannot be contracted out because it is “intimately related to the public interest.” This definition is quoted from a fairly comprehensive recent report (PDF, 822kb) on the term and its implications, published by the Congressional Research Service in February 2010.

Now, I know what "privatizing" means, ugly word though it may be. But no one—including those pushing hardest for it—can explain what an "inherently governmental" function is. If they were honest, such advocates would admit that any public function that becomes the object of lust by some industry group's lobbyists could not possibly be "inherently governmental," and therefore could be a candidate for privatizing or outsourcing.

To hear these people talk, the only "inherently governmental" jobs are those that make and administer budgets and contracts. That means no jobs for

- Clerks

- Scientists

- Engineers

- Computer specialists

- Designers

- Webmasters

- Economists

- Statisticians

- Audio/Video specialists

- Public affairs specialists

- Writers

- Editors

- Security specialists

- Meeting planners

- Travel planners

- Programmers

- Systems designers

- Accountants

- Budget analysts

- Etc.

This leaves jobs only for

- Lawyers

- Administrators

- Managers

- Budget officers

- Contracting officers

- Personnel officers

Myth of the Coddled Federal Worker

One final piece of the puzzle behind the recent calls for Federal downsizing, workforce attrition, and worker pay caps is the myth that Federal workers cost more than their private-sector counterparts, because of their great benefits. Legislators like Brady love to stick this one in their speeches because it's a guaranteed applause line, especially during great recessions.

Trouble is, it's not true.

I'm going to sidestep the whole debate about whether Federal salaries are higher or lower than those for comparable jobs in the private sector, because it's too complicated for a few paragraphs and perhaps even for an entire book. There are numerous problems with this analysis, including the difficulty of finding consistent data that tracks all the relevant variables, including worker age, education, experience, location, and job descriptions.

Under President George H.W. Bush, Congress passed legislation that granted Federal workers additional pay under a system of "locality adjustments." President Clinton more or less mothballed the system, and then set one up that was a pale shadow of the original. Here's a link for more information on the topic.

Since the Civil Service Retirement System (CSRS) was mothballed in 1986, all new Federal workers have been in the Federal Employees Retirement System (FERS). FERS does offer a small pension, but it's nothing like the one CSRS retirees enjoy. In addition, FERS workers pay a much higher portion of their salaries for that pension than CSRS workers did.

Instead, a FERS retirement is heavily dependent on the Federal Thrift Savings Plan, which is nothing more than a 401(k)-style program for Federal employees. (Federal workers don't have 401(k) plans.)

Federal employees have health care, sick leave, vacation leave, and other benefits that are comparable to those in any large U.S. company. I'll never forget moving from a Federal job at BEA to Citibank back in 1996, and finding that Citibank's benefits were superior to those I'd had in the government. Not only that, my pay was almost double, and I didn't have any onerous supervisory responsibilities. Citibank's pension system wasn't as generous as that from CSRS, but it was comparable to that of FERS.

Are Federal benefits better than those of your typical small company? Yes, very likely they are. And, given the vast difference between a Federal agency of 100,000 and your typical small company of 50, the difference is appropriate.

In any case, very few Federal contracts are awarded to your typical small company. At least, not directly. Any small companies that share in contract spending get work only through some "prime" contractor, not directly by some Federal manager.

CSRS was abolished not only to reduce the pay of Federal retirees, but also to add the Federal workforce to the Social Security pool. Under CSRS, Feds neither paid Social Security nor received its benefits on retirement. Under FERS, they do both in the same way that private sector workers do.

Another reason why Federal employees still have a decent package of benefits is that they are represented by a Labor Union, the National Federation of Federal Employees. If workers in U.S. companies get desperate enough, perhaps they'll recall that having a Union on your side is a good thing in the fight for decent pay and benefits. That's a lesson that's been lost over the years, especially since President Reagan started kicking Unions in the butt back in 1982.

However, just because workers don't have the pay, benefits, and pension they should have doesn't make it OK for them to demand cuts for those who do.

And politicians like Mr. Brady should know better.

Big Man in a Tiny Bubble Pops In To D.C.

He arrived from the tiny town of Butler, Pennsylvania, as part of the new freshman class of Angry Republican Congressmen. After all the feting and touring that greeted him in Washington, Mike Kelly was asked who had impressed him the most.

"Nobody," he said.

To be impressed by "nobody" must mean this guy is hugely impressed with himself, one would surmise. Well, yes and no:

"I hope I don't sound arrogant about this, but at 62 years old, I've pretty much seen what I need to see."

Today's article in the Washington Post doesn't explore what exactly Mr. Kelly has seen in his 62 years, but from his attitude and statements, I would venture to guess it isn't much.

You see, Mike Kelly came to Washington because he is angry that the Federal Government "intruded" on the running of his General Motors car dealership, where he'd spent 56 years of creative energy. (I guess that means he'd been working on the business since he was 6. Just kidding.)

And exactly how had it intruded? Why, it was making him sell Chevrolets instead of Cadillacs.

And exactly why was it ruining his business this way? Well, you see, Obama had (personally) taken over General Motors and was (personally) requiring dealerships to restructure as part of an effort to save the company.

"This is America. You can't come in and take my business away from me. . . . Every penny we have is wrapped up in here. I've got 110 people that rely on me every two weeks to be paid. . . . And you call me up and in five minutes try to wipe out 56 years of a business?"

This is a reasonable attitude if you believe that tiny, parochial self-interest should be the motivator of those elected to run a National Government. However, tiny attitudes from Big Men In Their Local Communities have no place in Congress. Indeed, those with tiny, uninformed beliefs who fail to see the big picture are precisely the ones inclined to take actions that will fail the interest of the public they're elected to serve.

They are also the most vulnerable to corruption, since if you believe that self-interest is the highest good, then you are likely to be impressed by visitors who flatter your ego and your opinions... and then offer to pay you huge sums to ensure your reelection or to sway your vote on an issue that serves your own interest.

A lot of Big Men in Tiny Bubbles like Mr. Kelly were frightened and outraged when the Obama administration offered to buy a 61% stake in General Motors in the summer of 2009. After all, wasn't this a "Government Takeover", or worse, a "Nationalization" of a private company?

If you were inclined to take a narrow view, it was. However, if you bothered to take the big view, it clearly was not.

Obama was a reluctant participant in the process of saving General Motors, and his sin was that he insisted that the taxpayers have some control over the process. Rather than just handing $50 billion to a company that had proven itself incapable of turning a profit and had driven itself into bankruptcy, he stipulated that outside ("Government") experts have a say in how that money was used. The restructuring that resulted is what caused Mr. Kelly such pain in his private bubble.

An article in The Economist (a business journal with no reputation for supporting Government intrusion into the workings of Capitalism) ended up apologizing to Obama for having shared the view that his action was a mistake:

August 19, 2010. Americans expect much from their president, but they do not think he should run car companies. Fortunately, Barack Obama agrees. This week the American government moved closer to getting rid of its stake in General Motors (GM) when the recently ex-bankrupt firm filed to offer its shares once more to the public (see article).

Once a symbol of American prosperity, GM collapsed into the government’s arms last summer. Years of poor management and grabby unions had left it in wretched shape. Efforts to reform came too late. When the recession hit, demand for cars plummeted. GM was on the verge of running out of cash when Uncle Sam intervened, throwing the firm a lifeline of $50 billion in exchange for 61% of its shares.

Many people thought this bail-out (and a smaller one involving Chrysler, an even sicker firm) unwise. Governments have historically been lousy stewards of industry. Lovers of free markets (including The Economist) feared that Mr Obama might use GM as a political tool: perhaps favouring the unions who donate to Democrats or forcing the firm to build smaller, greener cars than consumers want to buy. The label “Government Motors” quickly stuck, evoking images of clunky committee-built cars that burned banknotes instead of petrol—all run by what Sarah Palin might call the socialist-in-chief.

Yet the doomsayers were wrong. Unlike, say, France’s President Nicolas Sarkozy, who used public funds to support Renault and Peugeot-Citroën on condition that they did not close factories in France, Mr Obama has been tough from the start. GM had to promise to slim down dramatically—cutting jobs, shuttering factories and shedding brands—to win its lifeline. The firm was forced to declare bankruptcy. Shareholders were wiped out. Top managers were swept aside. Unions did win some special favours: when Chrysler was divided among its creditors, for example, a union health fund did far better than secured bondholders whose claims should have been senior. Congress has put pressure on GM to build new models in America rather than Asia, and to keep open dealerships in certain electoral districts. But by and large Mr Obama has not used his stakes in GM and Chrysler for political ends. On the contrary, his goal has been to restore both firms to health and then get out as quickly as possible. GM is now profitable again and Chrysler, managed by Fiat, is making progress. Taxpayers might even turn a profit when GM is sold.

GM's payback to U.S. taxpayers has already begun, and as The Economist notes, the total repayment over time will likely exceed the original $50 billion investment.

Yet Mr. Kelly probably doesn't believe any of this. Why? Because he doesn't want to. It's not in his interest to do so. It's more convenient for him to believe it's all a lie.

After all, to change his mind would invalidate his reason for popping in to Washington. Given his arrogant attitude that he is the most impressive person in D.C., he is hardly the sort to question himself, let alone to burst the tiny bubble that brought him here.

Senate Exposes Gaping Hole in Conflict-of-Interest Law

Just days after I opened an exploration of the way humans view conflict of interest, and of how their personal self-interest makes it hard to understand the way this topic is approached in different contexts, the Washington Post publishes a front-page article that exposes the kind of conundrum I'm planning to look into.

The Senate, you see, has no laws restricting the investments its members can make in companies whose fortunes their votes may affect. In particular, they may freely invest in companies that are major players in specific industries overseen by Senate committees. In the Post article, the industry is defense, and the committee typically has "inside knowledge" of the defense systems that will be built, and of which companies will benefit from its votes.

This seems strange enough, but as the Post article points out, the Congress has passed laws that prohibit such investments by those appointed to run the agencies — such as Defense — that will let the contracts to carry out the Senate's decisions. Not only that, but such laws have long been on the books to regulate investment behavior by rank-and-file Federal employees.

White House Freezes IT Projects To Revisit Wasteful IT Contracting

Government Going Apple?

EU Study Confirms The Positive Economic Impact of Open Source Software

EU study says OSS has better economics than proprietary software

Wow, I wonder if I can get my hands on this one… According to this ArsTechnica post, the European Union has released a study on the economic impact of open source software (OSS) and found it to be good. Naturally, Microsoft, Adobe, and others with business models tied to high-priced proprietary software will disagree. As ArsTechnica reports,

In a section titled “User benefits: interoperability, productivity, and cost savings,” the study’s authors (researchers from five European universities) make the claim that OSS is a less-expensive alternative to proprietary software…. “Our findings show that, in almost all the cases, a transition toward open source reports savings on the long-term costs of ownership of the software products,” says the study’s authors.

As one of the report’s recommendations, the EU is encouraged to change its policies that tend to favor proprietary software and instead do more to pave the way for OSS adoption. Unless you’re a stockholder in one of the software companies mentioned earlier, this is great news!

ArsTechnica also published this interesting chart:

Can We Resume The Antitrust Trial Against Microsoft Now, Please?

Protecting Windows: How PC Malware Became A Way of Life

Article Summary

This is a very long article that covers several different, but related, topics. If you are interested, but don’t have time to read the entire article, here’s a summary of the main themes, with links to the sections of text that cover them:

- Required Security Awareness Classes Reinforce Windows Monopoly in Federal Agencies.

For the third straight year, I’ve been forced to take online “security awareness” training at my Federal agency that includes modules entirely irrelevant–and in fact, quite insulting–to Macintosh users (myself included). The online training requires the use of Internet Explorer, which doesn’t even exist for Mac OS X and in fact is the weakest possible browser to use from a security perspective. It also reinforces the myth that computer viruses, adware, and malicious email attachments are a problem for all users, when in fact they only are a concern to users of Microsoft Windows. In presenting best practices for improved security, the training says absolutely nothing about the inherent security advantages of switching to Mac OS X or Linux, even though this is an increasingly well known and non-controversial solution. This part of the article describes the online training class and the false assumptions behind it in detail.

- IT Managers Are Spreading and Sustaining Myths About the Cause of the Malware Plague.

These myths serve to protect the status quo and their own jobs at the expense of users and corporate IT dollars. None of the following “well known” facts are true, and once you realize that malware is not inevitable–at the intensity Windows users have come to expect–you realize there actually are options that can attack the root cause of the problem.

- Windows is the primary target of malware because it’s on 95% of the world’s desktops,

- Malware has worsened because there are so many more hackers now thanks to the Internet, and

- All the hackers attack Windows because it’s the biggest target.

This section of the article describes the history of the malware plague and its actual root causes.

- U.S. IT Management Practices Aren’t Designed for Today’s Fast-Moving Technology Environment.

This part of the article discusses why IT management failed to respond effectively to the disruptive plague of malware in this century, and then presents a long list of proposed “Best Practices” for today’s Information Technology organizations. The primary theme is that IT shops cover roughly two kinds of activity: (1) Operations, and (2) Development. Most IT shops are dominated by Operations managers, whose impulse is to preserve the status quo rather than investigate new technologies and alternatives to current practice. A major thrust of my proposed best practices is that the influence of operations managers in the strategic thinking of IT management needs to be minimized and carefully monitored. More emphasis needs to be accorded to the Development thinkers in the organization, who are likely to be more attuned to important new trends in IT and less resistant to and fearful of change, which is the essence of 21st century technology.

Ah, computer security training. Don’t you just love it? Doesn’t it make you feel secure to know that your alert IT department is on patrol against the evil malware that slinks in and takes the network down every now and then, giving you a free afternoon off? Look at all the resources those wise caretakers have activated to keep you safe!

- Virulent antivirus software, which wakes up and takes over your PC several times a day (always, it seems, just at the moment when you actually needed to type something important).

- Very expensive, enterprise-class desktop-management software that happily recommends to management when you need more RAM, when you’ve downloaded peer-to-peer software contrary to company rules, and when you replaced the antivirus software the company provides with a brand that’s a little easier on your CPU.

- Silent, deadly, expensive, and nosy mail server software that reads your mail and removes files with suspicious-looking extensions, or with suspicious-looking subject lines like “I Love You“, while letting creepy-looking email with subject lines like “You didnt answer deniable antecedent” or “in beef gunk” get through.

- Expensive new security personnel, who get to hire even more expensive security contractors, who go on intrusion-detection rampages once or twice a year, spend lots of money, gum up the network, and make recommendations for the company to spend even more money on security the next year.

- Field trips to Redmond, Washington, to hear what Microsoft has to say for itself, returning with expensive new licenses for Groove and SharePoint Portal Server (why both? why either?), and other security-related software.

- New daily meetings that let everyone involved in protecting the network sit and wring their hands while listening to news about the latest computing vulnerabilities that have been discovered.

- And let’s not forget security training! My favorite! By all means, we need to educate the staff on the proper “code of conduct” for handling company information technology gear. Later in the article, I’ll tell you all about the interesting things I learned this year, which earned me an anonymous certificate for passing a new security test. Yay!

In fact, this article started out as a simple expose on the somewhat insulting online training I just took. But one thought led to another, and soon I was ruminating on the Information Technology organization as a whole, and about the effectiveness and rationality of its response to the troublesome invasion of micro-cyberorganisms of the last 6 or 7 years.

Protecting the network

Who makes decisions about computer security for your organization? Chances are, it’s the same guys who set up your network and desktop computer to begin with. When the plague of computer viruses, worms, and other malware began in earnest, the first instinct of these security Tzars was understandable: Protect!

Protect the investment…

Protect the users…

Protect the network!

And the plague itself, which still ravages our computer systems… was this an event that our wise IT leaders had foreseen? Had they been warning employees about the danger of email, the sanctity of passwords, and the evil of internet downloads prior to the first big virus that struck? If your company’s IT staff is anything like mine, I seriously doubt it. Like everyone else, the IT folks in charge of our computing systems at the office only started paying attention after a high-profile disaster or two. Prior to that, it was business as usual for the IT operations types: “Ignore it until you can’t do so anymore.” A vulgar translation of this “code of conduct” is often used instead: “If it ain’t broke, don’t fix it.”

Unfortunately, the IT Powers-That-Be never moved beyond their initial defensive response. They never actually tried to investigate and treat the underlying cause of the plague. No, after they had finished setting up a shield around the perimeter, investing in enterprise antivirus and spam software, and other easy measures, it’s doubtful that your IT department ever stepped back to ask one simple question: How much of the plague has to do with our reliance on Microsoft Windows? Would we be better off by switching to another platform?

It’s doubtful that the question ever crossed their minds, but even if someone did raise it, someone else was ready with an easy put-down or three:

- It’s only because Windows is on 95% of the world’s desktops.

- It’s only because there are so many more hackers now.

- And all the hackers attack Windows because it’s the biggest target.

At about this time in the Computer Virus Wars, the rallying cry of the typical IT shop transitioned from “Protect the network… users… etc.” to simply:

Protect Windows!

Windows security myths

The “facts” about the root causes of the Virus Wars have been repeated so often in every forum where computer security is discussed—from the evening news to talk shows to internal memos and water-cooler chat—that most people quickly learned to simply shut the question out of their minds. There are so many things humans worry about in 2006, and so many things we wonder about, that the more answers we can actually find, the better. People nowadays cling to firm answers like lifelines, because there’s nothing worse than an unsolved mystery that could have a negative impact on you or your loved ones.

Only problem is, the computer security answers IT gave you are wrong. The rise of computer viruses, email worms, adware, spyware, and indeed the whole category now known as “malware” simply could not have happened without the Microsoft Windows monopoly of both PC’s and web browsing and the way the product’s corporate owners responded to the threat. In fact, the rise of the myth helped prolong the outbreak, and perhaps just made it worse, since it took Microsoft off the hook of responsibility… thus conveniently keeping the company’s consideration of the potentially expensive solutions at a very low priority.

Even though the IT managers who actually get to make decisions didn’t see this coming, it’s been several years now since some smart, brave (in at least one case, a job was lost) people raised a red flag about the vulnerability of our Microsoft “monoculture” to attack. They warned us that reliance on Microsoft Windows, and the impulse to consolidate an entire organization onto one company’s operating system, was a recipe for disaster. Because no one actually raised this warning beforehand, the folks in the mid-to-late 1990’s who were busily wiping out all competing desktops in their native habitat can perhaps be forgiven for doing so. However, IT leaders today who still don’t recognize the danger—and in fact actively resist or ignore the suggestion by others in their organization to change that policy—are being recklessly negligent with their organization’s IT infrastructure. It’s now generally accepted by knowledgeable, objective security experts that the Microsoft Windows “monoculture” is a key component that let the virus outbreak get so bad and stay around for so long. They strongly encourage organizations to loosen the reins on their “Windows only” desktop policy and allow a healthy “heteroculture” to thrive in their organization’s computer desktop environment.

Full disclosure: I was one of the folks who warned their IT organization about the Windows security problem and urged a change of course several years ago. From a white paper delivered to my CIO in November 2002, this was one of my arguments for allowing Mac OS X into my organization as a supported platform:

Promoting a heterogeneous computing environment is in NNN’s best interest from a security perspective. Macintoshes continue to be far more resistant to computer viruses than Windows systems. The latest studies show that this is not just a matter of Windows being the dominant desktop operating system, but rather it relates to basic security flaws in Windows.

About a year later, when Cyberinsecurity was released, I provided a copy to my company’s Security Officer. But sadly, both efforts fell on deaf ears, and continue to do so.

1999: The plague begins

The first significant computer virus—probably the first one you and I noticed—was actually a worm. The “Melissa Worm” was introduced in March 1999 and quickly clogged Usenet newsgroups, shutting down a significant number of servers. Melissa spread as a worm in Microsoft Word documents. (Note: Wikipedia now maintains a Timeline of Notable Viruses and Worms from the 1980’s to the present.)

Now, as it so happens, 1999 was also the year when it became clear that Microsoft would win the browser war. In 1998, Internet Explorer had only 35% of the market, still a distant second to Netscape, with about 60%. Yet in 1999, Microsoft’s various illegal actions to extend its desktop monopoly to the browser produced a complete reversal: When history finished counting the year, IE had 65% of the market, and Netscape only 30%. IE’s share rose to over 80% the following year. This development is highly significant to the history of the virus/worm outbreak, yet how many of you have an IT department enlightened enough to help you switch from IE back to Firefox (Netscape’s great grandchild)? The browser war extended the growing desktop-OS monoculture to the web browser, which was the window through which a large chunk of malware was to enter the personal computer.

You see, by 1994, a year or so before the World Wide Web became widely known through the Mosaic and Netscape browsers, Microsoft had already achieved dominance of the desktop computer market, having a market share of more than 90%. A year later, Windows 95 nailed the lid on the coffin of its only significant competitor, Apple’s Macintosh operating system, which in that year had only about 9% of corporate desktops. Netscape was the only remaining threat to a true computing monoculture, since as the company had recognized, the web browser was going to become the operating system of the future.

Microsoft’s hardball tactics in beating back Netscape led directly to the insecure computer desktops of the 2000 decade by ensuring that viruses written in “Windows DNA” would be easy to disseminate through Internet Explorer’s Active/X layer. Active/X basically let Microsoft’s legions of Visual Basic semi-developers write garbage programs that could run inside IE, and it became a simple matter to write garbage programs as Trojan Horses to infect a Windows PC. Active/X was a heckuva lot easier to write to than Netscape’s cross-platform plug-in API, which gave IE a huge advantage as developers sought to include Windows OS and MS Office functionality directly in the web browser.

A similar strategy was taking place on the server side of the web, as Microsoft’s web server, Internet Information Server (IIS), had similarly magical tie-in’s to everybody’s favorite desktop OS. Fortunately for the business world, the guys in IT who had the job of managing servers were always a little bit brighter than the ones who managed desktops. They understood the virtues of Unix systems, especially in the realm of security. IT managers weren’t willing to fight for Windows at the server end of the business once IIS was revealed to have so many security holes. As a result, Windows, and IIS, never achieved the dominance of the server market that Microsoft hoped for, although you can be sure that the company hasn’t given up on that quest.

The other major avenue for viruses and worms has been Microsoft Office. As noted, Melissa attacked Microsoft Word documents, but this was a fairly unsophisticated tactic compared with the opportunity presented by Microsoft’s email program, Outlook. Companies with Microsoft Exchange servers in the background and Outlook mail clients up front, which by the late 1990’s had become the dominant culture for email in corporate America, presented irresistible targets for hackers.

Through the web browser, the email program, the word processor, and the web server, the opportunities for cybermischief simply multiplied. Heck, you didn’t even have to be a particularly good programmer to take advantage of all the security holes Microsoft offered, which numbered at least as many as would be needed to fill the Albert Hall (I’m still not sure how many that is).

So… the answer to the question of why viruses and worms disproportionately took down Windows servers, networks, and desktops starting in 1999 isn’t that Microsoft was the biggest target… It was because Microsoft Windows was the easiest target.

And the answer to why viruses and worms proliferated so rapidly in the 2000’s and with them the Windows-hacker hordes is simply that hacking Microsoft Windows became a rite of passage on your way to programmer immortality. Why try to attack the really difficult targets in the Unix world, which had already erected mature defenses by the time the Web arrived, when you could wreak havoc for a day or a week by letting your creation loose at another clueless Microsoft-Windows-dominated company? Once everyone was using both Windows and IE, spreading malware became child’s play. You could just put your code in a web page! IE would happily swallow the goodie, and once inside, the host was defenseless.

Which leads me to the next question whose answer has been obscured in myth: Exactly why was the host defenseless? That is, why couldn’t Windows fight off viruses and worms that it encountered? It doesn’t take a physician to know the answer to that one, folks. When you encounter an organism in nature that keeps getting sick when others don’t, it’s a pretty good bet that there’s something wrong with its immune system.

The trusting computer

It’s not commonly known or understood outside of the computer security field that Windows represents a kind of security model called “trusted computing.” Although you’d think this model would have been thoroughly discredited by our collective experience with it over the last decade, it’s a model that Microsoft and its allies still believe in… and still plan to include in their future products such as Windows Vista. Trusted computing has a meaning that’s shifted over the years, but as embodied by Microsoft Windows variants since the beginning of the species, it means that the operating system trusts the software that gets installed on it by default, rather than being suspicious of unknown software by default.

That description is admittedly a simplification, but this debate needs to be simplified so people can understand the difference between Windows and the competition (to the extent that Windows has competition, I’m talking about Mac OS X and Linux). The difference, which clearly explains why Windows is unable to defend itself from attack by viruses and worms, stems from the way Windows handles user accounts, compared with the way Unix-like systems, such as Linux and Mac OS X, handle them. Once you understand this, I think it will be obvious why the virus plague has so lopsidedly affected Windows systems, and it will dispel another of the myths that have been spread around to explain it.

Windows has always been a single-user system, and to do anything meaningful in configuring Windows, you had to be set up as an administrator for the system. If you’ve ever worked at a company that tried to prevent its users from being administrators of their desktop PC’s, you already know how impossible it is. You might as well ask employees to voluntarily replace their personal computer with a dumb terminal. [Update 8/7/06: I think some readers rolled their eyes at this characterization (I saw you!). You must be one of the folks stuck at a company that has more power over its employees than the ones I've worked for in the last 20-odd years. Lucky you! I don't have data on whose experience is more common, but naturally I suspect it's not yours. No matter... this is certainly true for home users ....] And home users are always administrators by default… besides, there’s nothing in the setup of a Windows PC at home that would clearly inform the owner that they had an alternative to setting up their user accounts. (Update 8/7/06: Note to Microsoft fans who take umbrage at this characterization of their favorite operating system: Here’s Microsoft’s own explanation of the User Accounts options in Windows XP Professional.)

The Unix difference: “Don’t trust anyone!”

On Unix systems, which have always been multiuser systems, the system permissions of a Windows administrator are virtually the same as those granted to the “superuser,” or “root” user. In the Unix world, ordinary users grow up living in awe of the person who has root access to the system, since it’s typically only one or two system administrators. Root users can do anything, just as a Windows administrator can.

But here’s the huge difference: A root user can give administrator access to other users, granting them privileges that let them do the things a Windows administrator normally needs to do—system administration, configuration, software installing and testing, etc—but without giving them all the keys to the kingdom. A Unix user with administrator access can’t overwrite most of the key files that hackers like to fool with—passwords, system-level files that maintain the OS, files that establish trusted relationships with other computers in the network, and so on.

Windows lacks this intermediate-level administrator account, as well as other finer-grained account types, primarily because Windows has always been designed as a single-user system. As a result, software that a Windows user installs is typically running with privileges equivalent to those of a Unix superuser, so it can do anything it wants on their system. A virus or worm that infects a Unix system, on the other hand, can only do damage to that user’s files and to the settings they have access to as a Unix administrator. It can’t touch the system files or the sensitive files that would help a virus replicate itself across the network.
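To make the account distinction concrete, here is a minimal sketch of the classic Unix owner/group/other write check. It is a simplified model, not any real kernel's implementation: it ignores ACLs, sudo, capabilities, and read-only mounts, and the function name `may_write`, the user ID 501, and the group ID 20 are all hypothetical values chosen for illustration.

```python
import stat

ROOT_UID = 0  # the superuser's numeric ID on Unix-like systems


def may_write(uid, gids, owner_uid, owner_gid, mode):
    """Simplified owner/group/other write check.

    Ignores ACLs, sudo, capabilities, and read-only mounts.
    """
    if uid == ROOT_UID:
        return True  # root bypasses ordinary permission bits
    if uid == owner_uid:
        return bool(mode & stat.S_IWUSR)
    if owner_gid in gids:
        return bool(mode & stat.S_IWGRP)
    return bool(mode & stat.S_IWOTH)


# Hypothetical accounts and files, for illustration only.
USER_UID, USER_GIDS = 501, {20}
system_file = dict(owner_uid=0, owner_gid=0, mode=0o644)      # e.g. a password database
own_document = dict(owner_uid=501, owner_gid=20, mode=0o644)  # a file in the user's home

print(may_write(USER_UID, USER_GIDS, **system_file))   # False
print(may_write(ROOT_UID, {0}, **system_file))         # True
print(may_write(USER_UID, USER_GIDS, **own_document))  # True
```

Under this model, code running as an ordinary user can alter that user's own document but not the root-owned system file, which is the containment described above. Code running as root (or, by the analogy drawn here, as a Windows administrator) passes the check for everything.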

This crucial difference is one of the main ways in which Mac OS X and Linux are inherently more secure than Windows is. On Mac OS X, the root user isn’t even activated by default. Therefore, there’s absolutely no chance that a hacker could log in as root: The root user exists only as a background-system entity until a Mac user deliberately instantiates her, and very few people ever do. I don’t think this is the case on Linux or other Unix OS’s, but it’s one of the things that makes Mac OS X one of the most secure operating systems available today.

There are many other mistakes Microsoft has made in designing its insecure operating system—things it could have learned from the Unix experience if it had wanted to. But this one is the doozy that all by itself puts to rest the notion that Microsoft Windows has been attacked more because people don’t like Microsoft, or because it’s the biggest target, or all the other excuses that have been promulgated.

The security awareness class

In response to the cybersecurity crisis, one of the steps our Nation’s IT ~~cowards~~ leaders have taken across the country is to purchase and customize computer security “training.” Such training is now mandatory in the Federal Government and is widely employed in the private sector. I have been forced to endure it for three years now, and I’ve had to pass a quiz at the end for the last two. As a Macintosh user, I naturally find the training offensive, because so much of it is irrelevant to me. It’s also offensive because it is the byproduct of decisions my organization’s IT management has made over the years that in my view are patently absurd. If the decisions had been mine, I would never have allowed my company to become completely dependent on the technological leadership of a single company, especially not one whose product was so difficult to maintain.

It’s a truism to me, and has been for several years now, that Windows computers should simply not be allowed to connect to the Internet. They are too hard to keep secure. Despite the millions that have been spent at my organization alone, does anybody actually believe that our Windows monoculture is free from worry about another worm- or virus-induced network meltdown? Of course not. And why not? Why, it’s because these same IT ~~cowards~~ leaders think such meltdowns are inevitable.

The inevitability of this century’s computer virus outbreaks is one of the implicit myths about their origin:

“Why switch to another operating system, since all operating systems are equally vulnerable? As soon as the alternative OS becomes dominant, viruses geared to that OS will simply return, and we’ll have to fight all over again in an unknown environment.”

My hope is that if you’ve been following my argument thus far, you now realize that this type of attitude is baseless, and simply an excuse to maintain the status quo.

Indeed, the same IT ~~cowards~~ leaders who actually believe this are feeding Microsoft propaganda about computer security to their frightened and techno-ignorant employees through “security awareness” courses such as this. Keep in mind that, as some of the notions point out, companies attempting to train their employees in computer security are doing so not only for their office PC, but for their home PC as well. The rise of telecommuting, another social upheaval caused by the Internet’s easy availability, means that the two are often the same nowadays. So the lessons American workers are learning are true only if they have Windows computers at home, and only if Windows computers are an inevitable and immutable technology in the corporate landscape, like desks and chairs.

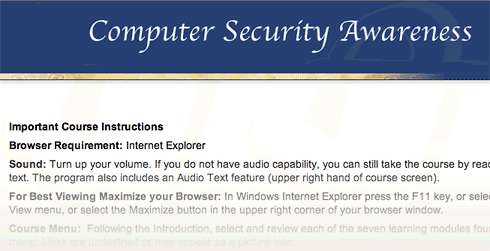

Here are some of the things I learned from my organization’s “Computer Security Awareness” class:

- Always use Internet Explorer when browsing the web.

How many times must employees beg their companies to use Firefox, merely because it’s faster and has better features, before they will listen? In the meantime, to ensure that as many viruses and worms can enter the organization as possible, so that the expensive antivirus software we’ve purchased has something to do, IT management makes sure that as many people continue using IE as possible. I’m being facetious here. The reason they do this is that it’s what the training vendor told them to say, and today’s Federal IT managers always do as instructed by their contractors.

While you can find data on the web to support the view that IE is at least as secure as Firefox, common sense should guide your decisionmaking here rather than the questionable advice of dueling experts. The presence of Active/X in IE, all by itself, should be enough to make anyone in charge of an organization’s security jump up and down to keep IE from being the default browser. And that’s not even usually listed as a vulnerability, because it’s no longer “new”.

The “shootouts” that you read now and then pertain to new vulnerabilities that are found, and to the tally of vulnerabilities a given browser maker has “fixed”… not to inherent architectural vulnerabilities like Active/X and JScript (Microsoft’s proprietary extension to JavaScript).

- Use Windows computers at home.

The belief among IT management in recent years is that if we can get everyone to use the same desktop “image” at work and at home, we can control the configuration and everything will be better. Um, no. Mac users don’t have any fear of these strange Windows file types, and organizations that encourage users to switch to Mac OS X or to Linux, instead of discouraging such switching, immediately improve their security posture. For example, here’s some recent advice from a security expert at Sophos:

“It seems likely that Macs will continue to be the safer place for computer users for some time to come.”

And from a top expert at Symantec comes this recent news:

Simply put, at the time of writing this article, there are no file-infecting viruses that can infect Mac OS X… From the 30,000 foot viewpoint of the current security landscape, … Mac OS X security threats are almost completely lost in the shadows cast by the rocky security mountains of other platforms.

- All computers on the Internet can be infected within 30 minutes if not protected.

No… of all currently available operating systems, this is true only of Microsoft Windows. Mac OS X is an example of a Unix system that’s been designed to use the best security features of the Unix platform by default, and no user action or configuration is required to ensure this.

Here’s one of the URLs (from the SANS Institute) that the course provided, which actually makes pretty clear that Windows systems are the most insecure computers you can give your employees today: Computer Survival History.

- Spyware is a problem for all computers.

I imagine that spyware is the most crippling day-to-day aspect of using Windows. My son insisted on trying Virtual PC a couple of years ago, and on his own, his virtual Windows XP was completely unusable because of malware of various kinds within about 20 minutes. He was using Internet Explorer, of course, because that’s what he had on his computer. I installed Firefox for him, and his web surfing in Windows has been much smoother since then. He still has to run antivirus and antiadware software to keep the place “clean,” but needless to say, he has never asked to use IE again. This experience alone demonstrated what I had already read to be true: The web is not a safe place in the 21st century if you’re using Windows. This is one of the primary reasons I use Mac OS X: In all the 5 years I’ve used Mac OS X, I have never once encountered adware. And that has absolutely nothing to do with what websites I surf, or don’t surf, on the web. (And that’s all I’m going to say about it!)

- Viruses are a threat to all home computers.

What I said previously about adware, ditto for computer viruses. To this day, there is not a single virus that has successfully infected a Mac OS X machine. (The one you heard about earlier this year was a worm, not a virus, and it only affected a handful of Macs, doing very little damage in any case.) As even Apple will warn you, that doesn’t mean it’s impossible and will never happen. However, it does mean that if Macs rise up and take over the world, amateur virus writers will all have to retire, and you’ll cut the supply line of new virus hackers to the bone. Without Windows to hack, it simply won’t be fun anymore. No quick kills. No instant wins. Creating a successful virus for Mac OS X will take years, not days. Human nature being what it is, I just know there aren’t many hackers who would have the patience for that.

A huge side benefit for Mac users in not having to worry about viruses and worms is that you don’t have to run CPU-sucking antivirus software constantly. Scheduling it to run once a week wouldn’t be a bad idea, but you can do that when you’re sleeping and not have to suffer the annoying slowdowns that are a fact of PC users’ lives every time those antivirus hordes sally forth to fight the evil intruders. Or… you could disconnect your Windows PC from the Internet, and then you could turn that antivirus/antispyware thingy off for good.

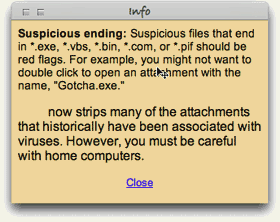

- Malicious email attachments are a threat to all.

**Y A W N** Can we go home now?

Sometimes, I open evil Windows attachments just for the fun of it… to show that I can do so with impunity. Then I send them on to the Help Desk to study. :-) (Just kidding.)

Change resisters in charge

Other than Microsoft, why would anyone with a degree in computer science or otherwise holding the keys to a company’s IT resources want to promulgate such tales and ignore the truth behind the virus plague? That’s a simple one: They fear change.

To admit that Windows is fundamentally flawed and needs to be replaced or phased out in an organization is to face the gargantuan task of transitioning a company’s user base from one OS to another. In most companies, this has never been done, except to exorcise the stubborn Mac population. Although its operating system is to blame for the millions of dollars a company typically has had to spend in the name of IT security over the last 5 years, Microsoft represents a big security blanket for the IT managers and executives who must make that decision. Windows means the status quo… it means “business as usual”… it means understood support contracts and costs. All of these things are comforting to the typical IT exec, who would rather spend huge amounts of his organization’s money and endure sleepless nights worrying about the next virus outbreak than to seriously investigate the alternatives.

Managers like this, who have a vested interest in protecting Microsoft’s monopoly, are the main source of the Windows security myths, and it’s a very expensive National embarrassment. The IT organization is simply no place for people who resist change, because change is the very essence of IT. And yet, the very nature of IT operations management has ensured that change-resisters predominate.

Note that I said IT operations. As a subject for a future article, I would very much like to elaborate on my increasingly firm belief that IT management should never be handed to the IT segment that’s responsible for operations—for “keeping the trains running.” Operations is an activity that likes routines, well defined processes, and known components. People who like operations work have a fondness for standard procedures. They like to know exactly which steps to take in a given situation, and they prefer that those steps be written down and well-thumbed.

By contrast, the developer side of the IT organization is where new ideas originate, where change is welcomed, where innovation occurs. Both sides of the operation are needed, but all too often the purse strings and decisionmaking reside with the operations group, which is always going to resist the new ideas generated by the other guys. In this particular situation, solutions can only come from the developer mindset, and organizations need to learn how to let the developer’s voice be heard above the fearful, warning voices of Operations.

Custer’s last stand… again

So please, Mr. or Ms. CIO, no more silly security training that teaches me how to [try to] keep secure an operating system I don’t use, one that I don’t want to use, and one that I wish to hell my organization wouldn’t use. Please don’t waste any more precious IT resources spreading myths about computer security to my fellow staffers, all the while ignoring every piece of advice you receive on how to make fundamental improvements to our network and desktop security, just because the advice contradicts what you “already know.”

It really is true that switching from Windows to a Unix-based OS will make our computers and network more secure. I recommend switching to Mac OS X only because it’s got the best designed, most usable interface to the complex and powerful computing platform that lies beneath its attractive surface. Hopefully, Linux variants like Ubuntu will continue to thrive and give Apple a run for its money. The world would be a much safer place if the ~~cowards~~ leaders who make decisions about our computing desktop would wake up, get their heads out of the sand, smell the roses, and see Microsoft Windows for what it is: The worst thing to happen to computing since… well, … since ever!

Before my recommendation is distorted beyond recognition, let me make clear that I don’t advocate ripping out all the Windows desktops in your company and replacing them with Macs. Although that’s an end-point that here, today seems like a worthy goal, it would be too disruptive to force users to switch, and you’d just end up with the kind of resentment that the Macintosh purges left behind as the 1990’s ended. Instead, I’ve always recommended a sane, transitional approach, such as this one from my November 2002 paper on the subject (note that names have been changed to protect the guilty):

Allow employees to choose a Macintosh for desktop computing at NNN. This option is particularly important for employees who come to NNN from an environment where Macintoshes are currently supported, as they typically are in academia. In an ideal environment, DITS would offer Macintoshes (I would recommend the flat-panel iMacs) as one of the options for desktop support at NNN. These users can perform all necessary functions for working at NNN without a Windows PC.

This approach simply opens the door to allow employees who want to use Macs to do so without feeling like pariahs or second-class citizens.